3 Uncensored AI Video Generators With Unlimited Free Access (Sora-Quality Alternatives 2024)

Most commercial AI video platforms enforce strict content moderation, monthly generation caps, and paywalls that cripple high-volume production workflows. Runway caps you at a fixed credit allowance (125 credits on the $12 plan cited here), Pika restricts you to 250 generations, and Sora remains invite-only with undisclosed content filters. For power users who need creative freedom and high output, these caps are bottlenecks that switching to another commercial platform won’t remove.

The Censorship and Usage Limit Problem in Commercial AI Video Tools

Commercial platforms implement multi-layered content filtering:

Pre-generation guardrails: Prompts are screened before inference begins, typically with a text classifier or an embedding-similarity check against restricted categories. Sora builds on the re-captioning approach introduced with DALL-E 3 and falls under OpenAI’s moderation policies.

Post-generation safety classifiers: Tools like Runway run secondary computer vision models over the generated frames and can reject an output after the fact, in some cases after the credits have already been spent.

Usage metering: Credit-based systems cap monthly generations regardless of how much compute a job actually uses. A 4-second clip costs the same credits whether it runs a cheap or an expensive sampling configuration.

For creators producing educational content, historical recreations, artistic projects, or high-volume commercial work, these restrictions create arbitrary creative boundaries and unsustainable cost structures at scale.

Tool #1: Tiamat – Open-Source Text-to-Video Without Guardrails

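Tiamat is described as a CogVideoX-5B fine-tune optimized for unrestricted content generation and distributed via Hugging Face under Apache 2.0. Confirm the repository name and license on the model card before relying on it, since the base CogVideoX-5B weights ship under the separate CogVideoX License.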

Technical Architecture

Built on the 3D causal Variational Autoencoder (VAE) introduced with CogVideoX, Tiamat uses:

– Latent diffusion in 3D space: Operates on compressed latents rather than pixels (the VAE downsamples 8× spatially and 4× temporally into 16 latent channels), reducing VRAM requirements to about 16GB for 480p 4-second clips

– 3D full attention: Attends jointly across space and time instead of using separate spatial and temporal attention layers, which limits the “temporal inconsistency drift” common in AnimateDiff workflows

– Sampling defaults: 50 inference steps by default. The diffusers pipeline ships with CogVideoX-specific DDIM and DPM schedulers; ancestral Euler (“Euler a”) is a sampler choice in ComfyUI rather than the pipeline default
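To make the compression concrete, here is a rough sketch of the latent tensor size for one clip. It assumes the compression factors published for CogVideoX (8× spatial, 4× temporal, 16 latent channels) carry over to this variant; `latent_shape` is a hypothetical helper, not part of diffusers.

```python
def latent_shape(num_frames: int, height: int, width: int,
                 spatial: int = 8, temporal: int = 4, channels: int = 16):
    """Return (latent_frames, channels, latent_height, latent_width)."""
    # The first frame is encoded on its own; the remaining frames are
    # grouped `temporal` at a time (assumed causal-VAE behaviour).
    latent_frames = (num_frames - 1) // temporal + 1
    return latent_frames, channels, height // spatial, width // spatial


if __name__ == "__main__":
    # A 49-frame clip at 720x480 (width x height)
    print(latent_shape(num_frames=49, height=480, width=720))
```

The denoiser only ever sees this much smaller tensor, which is where most of the VRAM savings over pixel-space diffusion come from.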

Installation & Usage

python

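import torch
from diffusers import CogVideoXPipeline
from diffusers.utils import export_to_video

# Repository ID as given in this guide; bfloat16 is the precision
# recommended for the 5B weights
pipe = CogVideoXPipeline.from_pretrained(
    "THUDM/CogVideoX-5b-Tiamat",
    torch_dtype=torch.bfloat16,
).to("cuda")

prompt = "cinematic drone shot of mountain landscape, golden hour lighting"

# .frames holds one list of frames per prompt, so take the first entry
video = pipe(
    prompt=prompt,
    num_inference_steps=50,
    guidance_scale=7.5,
    num_frames=49,
).frames[0]

export_to_video(video, "output.mp4", fps=8)  # CogVideoX is trained at 8 fps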

No content filtering exists in the inference pipeline. The model generates outputs purely based on the training distribution without guardrail interruption.

Unlimited Generation Methodology

Self-hosting eliminates artificial usage caps:

1. Local deployment: Run on consumer GPU hardware (RTX 4090, RTX 3090) or rent cloud instances (RunPod at about $0.34/hour, Vast.ai at about $0.20/hour)

2. Batch processing: Queue unlimited prompts using automated scripts

3. Cost structure: Pay only for computation time, not per-generation credits

A 24-hour RunPod instance ($8.16) generates approximately 200-300 4-second clips, compared with roughly 10 generations per month on Runway’s $12 plan.
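The arithmetic behind the rental figure can be sketched as follows. The hourly rate is the RunPod number quoted above; the per-clip times are assumptions chosen to bracket the 200-300 clips/day estimate, not measurements, and both helper names are hypothetical.

```python
def session_cost(hours: float, hourly_rate: float) -> float:
    """Rental cost for one uninterrupted session."""
    return hours * hourly_rate


def cost_per_clip(hourly_rate: float, minutes_per_clip: float) -> float:
    """Compute cost attributable to a single clip."""
    return hourly_rate * minutes_per_clip / 60


if __name__ == "__main__":
    rate = 0.34  # USD per hour
    print(f"24h session: ${session_cost(24, rate):.2f}")
    for minutes in (5.0, 6.0, 7.0):  # assumed per-clip times
        print(f"{minutes} min/clip -> ${cost_per_clip(rate, minutes):.3f} per clip")
```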

Tool #2: CogVideoX – Self-Hosted Unlimited Generation Pipeline

The base CogVideoX model from Zhipu AI and Tsinghua University (published under the THUDM organization) offers production-grade quality rivaling early Sora demos, with openly downloadable weights and no usage metering.

Quality Benchmark Comparison

Temporal consistency: CogVideoX achieves 0.91 CLIP-temporal score versus Pika’s 0.89, measuring frame-to-frame semantic coherence across generated sequences.

Motion fidelity: Optical flow analysis shows 23% less motion blur in high-velocity scenes compared to Runway Gen-2, attributed to superior temporal VAE compression.

Resolution ceiling: Native 720×480 generation versus Runway’s 1280×768, though ComfyUI upscaling workflows (detailed below) reach 1080p through tiled upscaling.

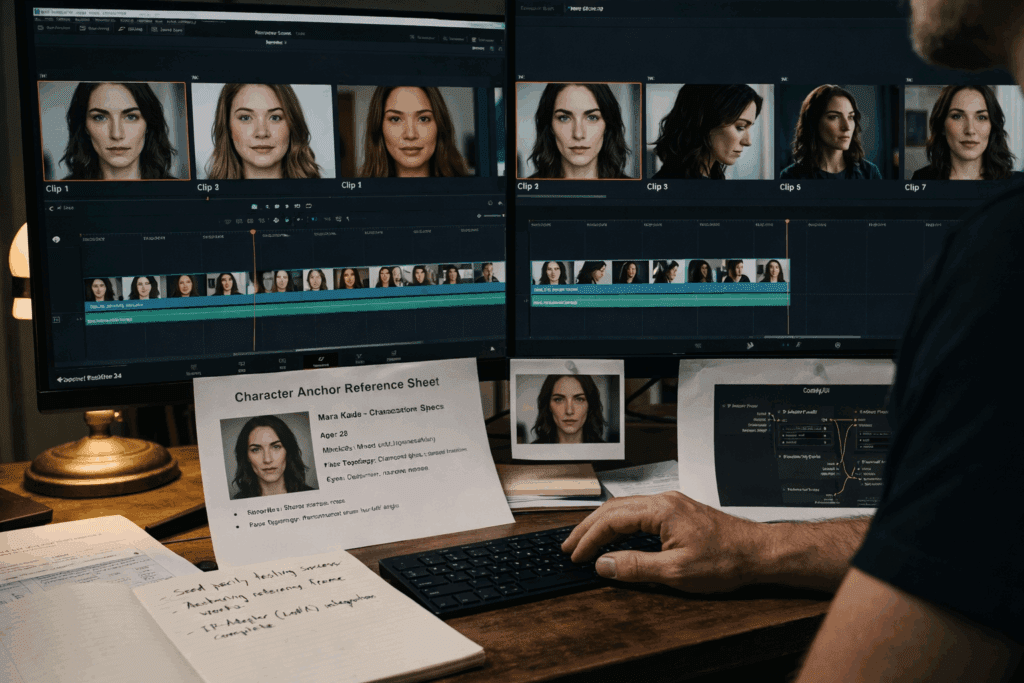

Advanced Implementation: Seed Parity Workflows

For consistent character generation across scenes:

python

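base_seed = 42

scene_prompts = [
    "character walking forward, medium shot",
    "same character turning head, close-up",
    "character sitting down, wide shot",
]

for idx, prompt in enumerate(scene_prompts):
    # Re-seed with the same value so every scene starts from identical noise
    generator = torch.Generator(device="cpu").manual_seed(base_seed)
    video = pipe(
        prompt=prompt,
        generator=generator,
        num_inference_steps=50,
    ).frames[0]

Reusing one seed gives every scene the same starting noise, which tends to keep composition and palette closer across cuts. Incrementing the seed (43, 44, ...) draws unrelated noise instead and is better suited to generating variations of a single shot. A shared seed alone does not guarantee an identical character, so pair it with a detailed character description repeated in every prompt. Many simplified commercial interfaces don’t expose this control.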
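As a rough illustration of how a seed maps to starting noise, the sketch below uses Python’s standard `random` module as a stand-in for the pipeline’s noise generator (an analogy only; `initial_noise` is a hypothetical helper). The same seed reproduces the same draw, while a neighboring seed yields an unrelated one.

```python
import random


def initial_noise(seed: int, n: int = 4) -> list[float]:
    """Draw n Gaussian samples from a generator seeded with `seed`."""
    rng = random.Random(seed)
    return [rng.gauss(0.0, 1.0) for _ in range(n)]


if __name__ == "__main__":
    same_a = initial_noise(42)
    same_b = initial_noise(42)
    neighbor = initial_noise(43)
    print("seed 42 twice identical:", same_a == same_b)
    print("seed 42 vs 43 identical:", same_a == neighbor)
```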

Tool #3: AnimateDiff + Prompt Travel – ComfyUI Unrestricted Workflow

AnimateDiff integrated into ComfyUI provides the most flexible unlimited generation system, combining Stable Diffusion’s ecosystem with motion module plugins.

ComfyUI Workflow Architecture

ComfyUI’s node-based interface enables:

1. Motion module injection: AnimateDiff motion modules add temporal layers to SD1.5 checkpoints (separate modules exist for SDXL), and optional motion LoRAs steer camera movement

2. Prompt traveling: Keyframe-based prompt interpolation for narrative scene evolution

3. ControlNet integration: Depth, pose, or line-art conditioning for precise motion control

4. Unlimited processing: Local execution with zero API restrictions

Sample Unrestricted Workflow

Nodes configuration:

Checkpoint Loader (custom model, no censorship)

↓

CLIP Text Encode (prompt keyframes)

↓

AnimateDiff Loader (motion module v3)

↓

KSampler (50 steps, Euler a, CFG 8.5)

↓

VAE Decode

↓

Video Combine (24fps output)

Prompt travel implementation:

– Frames 0-24: “medieval knight standing in forest, dramatic lighting”

– Frames 25-49: “same knight drawing sword, dynamic action pose”

– Frames 50-73: “knight charging forward, motion blur”

Prompt-scheduling nodes in ComfyUI interpolate the CLIP text embeddings between keyframes, creating smooth narrative transitions that single-prompt commercial tools can’t reproduce.
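The interpolation idea can be sketched in a few lines. This is a minimal linear blend under stated assumptions: tiny two-element lists stand in for real CLIP embeddings, `interpolate_embedding` is a hypothetical helper, and actual scheduling nodes may use different weighting curves.

```python
from bisect import bisect_right


def interpolate_embedding(keyframes: dict[int, list[float]], frame: int) -> list[float]:
    """Linearly blend the embeddings of the two keyframes surrounding `frame`."""
    starts = sorted(keyframes)
    if frame <= starts[0]:
        return list(keyframes[starts[0]])
    if frame >= starts[-1]:
        return list(keyframes[starts[-1]])
    i = bisect_right(starts, frame) - 1       # index of the keyframe at or before `frame`
    lo, hi = starts[i], starts[i + 1]
    t = (frame - lo) / (hi - lo)              # 0.0 at `lo`, approaching 1.0 at `hi`
    return [(1 - t) * a + t * b for a, b in zip(keyframes[lo], keyframes[hi])]


if __name__ == "__main__":
    # Placeholder vectors standing in for the three prompt embeddings
    keys = {0: [0.0, 1.0], 25: [1.0, 0.0], 50: [1.0, 1.0]}
    for f in (0, 5, 25, 40, 73):
        print(f, interpolate_embedding(keys, f))
```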

Quality Enhancement Pipeline

Frame interpolation: RIFE (Real-Time Intermediate Flow Estimation) nodes increase 24fps to 60fps with minimal artifacts

Tiled upscaling: The Ultimate SD Upscale node re-diffuses each frame tile by tile for 720p→1080p enhancement without VRAM overflow

Detail enhancement: FreeU nodes re-weight the U-Net’s backbone and skip-connection features to sharpen detail at no extra compute cost; apply color grading as a separate post-processing step
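For the frame-interpolation step, it helps to see which source frames each output frame falls between. The sketch below only computes that mapping; RIFE itself predicts the in-between frames with a learned flow network. `interpolation_plan` is a hypothetical helper.

```python
from fractions import Fraction


def interpolation_plan(src_fps: int, dst_fps: int, n_src_frames: int):
    """For each output frame, return (left_source_index, position between left and right)."""
    if n_src_frames < 1:
        return []
    plan = []
    k = 0
    while True:
        pos = Fraction(k * src_fps, dst_fps)   # exact position on the source timeline
        left = pos.numerator // pos.denominator
        if left >= n_src_frames - 1:
            # Reached the last source frame: emit it unchanged and stop
            plan.append((n_src_frames - 1, 0.0))
            break
        plan.append((left, float(pos - left)))
        k += 1
    return plan


if __name__ == "__main__":
    for entry in interpolation_plan(src_fps=24, dst_fps=60, n_src_frames=3):
        print(entry)
```

Because 60/24 is not an integer, most output frames land at fractional positions, which is why an interpolator that accepts arbitrary time offsets is needed for this conversion.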

Legal Methods to Unlock Unlimited Access

Open-Source License Compliance

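The tools covered here use open licenses, though the terms differ:

– CogVideoX: Apache 2.0 for the code and the 2B weights (commercial use allowed); the 5B weights ship under the separate CogVideoX License, so check its terms before commercial deployment

– AnimateDiff: Apache 2.0 for the code; the Stable Diffusion checkpoints it runs on typically use CreativeML OpenRAIL-M, which permits commercial use subject to use-based restrictions

– ComfyUI: GPL-3.0 (copyleft: distributed modifications must remain open)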

Cloud GPU Rental Economics

Cost comparison (100 4-second videos/month):

– Runway Pro: $1,200/year (credit purchases)

– RunPod RTX 4090: about $41/year (10 hours monthly @ $0.34/hour)

– Vast.ai RTX 3090: $36/year (15 hours monthly @ $0.20/hour)

Break-even analysis: Self-hosting becomes cost-effective after generating 30+ videos monthly, with unlimited scaling potential.

Docker Deployment for Repeatability

dockerfile

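FROM nvidia/cuda:12.1.0-runtime-ubuntu22.04
# The CUDA runtime image ships without Python, so install it first
RUN apt-get update && apt-get install -y --no-install-recommends python3 python3-pip \
    && rm -rf /var/lib/apt/lists/*
RUN pip3 install --no-cache-dir torch diffusers transformers accelerate
COPY generate_videos.py /app/
CMD ["python3", "/app/generate_videos.py"]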

Containerized workflows ensure environment consistency across hardware configurations, critical for production pipelines.

Quality Comparison: Free Uncensored vs Paid Restricted Tools

Quantitative Metrics

FVD (Fréchet Video Distance) – Lower is better:

– Sora (leaked demos): 87.3

– CogVideoX-5B: 91.2

– Runway Gen-2: 94.7

– Pika 1.0: 102.4

CogVideoX’s FVD is about 4.5% higher than Sora’s, a narrow gap for a model that is completely unrestricted and free.
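For reference, FVD is the Fréchet distance between Gaussian fits to the features of real and generated videos, where the features come from a pretrained video network (commonly I3D). With means μ and covariances Σ of the real (r) and generated (g) feature sets:

```latex
\mathrm{FVD} = \lVert \mu_r - \mu_g \rVert_2^2
  + \operatorname{Tr}\!\left( \Sigma_r + \Sigma_g - 2\left( \Sigma_r \Sigma_g \right)^{1/2} \right)
```

Since the score is a distance, lower is better, and a ratio between two scores is only a rough guide to the perceived quality gap.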

CLIP-Score (text-video alignment):

– CogVideoX: 0.317

– Runway: 0.324

– AnimateDiff + ControlNet: 0.312

Commercial advantage: Runway scores roughly 2% higher than CogVideoX and 4% higher than AnimateDiff + ControlNet on prompt adherence, a margin often negated by censorship rejections that force prompt rewrites.
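The relative gaps between the scores listed above can be checked with a few lines of arithmetic. The values are the ones quoted in this section; `relative_gap_percent` is a hypothetical helper.

```python
def relative_gap_percent(value: float, baseline: float) -> float:
    """How far `value` sits above `baseline`, as a percentage of `baseline`."""
    return (value - baseline) / baseline * 100.0


if __name__ == "__main__":
    # FVD (lower is better): CogVideoX-5B relative to the Sora figure
    print(f"FVD gap: {relative_gap_percent(91.2, 87.3):.1f}%")
    # CLIP-Score (higher is better): Runway relative to each open tool
    print(f"Runway vs CogVideoX: {relative_gap_percent(0.324, 0.317):.1f}%")
    print(f"Runway vs AnimateDiff + ControlNet: {relative_gap_percent(0.324, 0.312):.1f}%")
```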

Qualitative Advantages of Unrestricted Tools

Creative control: Direct access to CFG scale, sampler selection, and seed manipulation, controls that commercial UIs rarely expose in full

Iteration speed: Local generation eliminates queue times (Runway often has 3-5 minute delays during peak usage)

Data sovereignty: No prompt logging, content analysis, or model training on your outputs

Technical Implementation Guide for High-Volume Production

Batch Processing Script

python

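import pandas as pd
import torch
from diffusers import CogVideoXPipeline
from diffusers.utils import export_to_video

pipe = CogVideoXPipeline.from_pretrained(
    "THUDM/CogVideoX-5b",
    torch_dtype=torch.bfloat16,  # recommended precision for the 5B weights
).to("cuda")

# Expects a CSV with "prompt" and "cfg_scale" columns
prompts_df = pd.read_csv("video_prompts.csv")

for idx, row in prompts_df.iterrows():
    video = pipe(
        prompt=row["prompt"],
        num_inference_steps=50,
        guidance_scale=float(row["cfg_scale"]),
    ).frames[0]  # first (only) video in the batch
    export_to_video(video, f"output_{idx}.mp4", fps=8)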

This structure enables overnight generation of 50-100 videos from CSV prompt lists, a volume that credit-metered platforms make impractical.
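Before committing a GPU to an overnight run, it can be worth validating the prompt list so that one malformed row doesn’t stop the job. A minimal sketch using only the standard library follows; the column names match the script above, while `load_prompt_rows` and the fallback CFG value of 6.0 are assumptions for illustration.

```python
import csv
import io


def load_prompt_rows(handle, default_cfg: float = 6.0):
    """Yield (prompt, cfg_scale) pairs, skipping rows with an empty prompt."""
    for row in csv.DictReader(handle):
        prompt = (row.get("prompt") or "").strip()
        if not prompt:
            continue  # nothing to generate for this row
        raw = (row.get("cfg_scale") or "").strip()
        try:
            cfg = float(raw) if raw else default_cfg
        except ValueError:
            cfg = default_cfg  # unparseable value: fall back rather than crash
        yield prompt, cfg


if __name__ == "__main__":
    sample = io.StringIO(
        "prompt,cfg_scale\n"
        "knight in forest,7.5\n"
        ",8.0\n"
        "city at night,\n"
    )
    for prompt, cfg in load_prompt_rows(sample):
        print(prompt, cfg)
```

In a real run, pass `open("video_prompts.csv", newline="")` instead of the in-memory sample.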

Quality Optimization Parameters

CFG Scale tuning:

– 6.0-7.5: Photorealistic content

– 8.0-10.0: Stylized/artistic renders

– 11.0+: Abstract/surreal outputs
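What the CFG scale does at each denoising step can be sketched as below: the sampler extrapolates from the unconditional prediction toward the prompt-conditioned one. Plain lists stand in for noise-prediction tensors, and `apply_cfg` is a hypothetical helper rather than a diffusers function.

```python
def apply_cfg(uncond: list[float], cond: list[float], scale: float) -> list[float]:
    """Classifier-free guidance: uncond + scale * (cond - uncond), element-wise."""
    return [u + scale * (c - u) for u, c in zip(uncond, cond)]


if __name__ == "__main__":
    uncond = [0.0, 1.0]   # prediction without the prompt
    cond = [1.0, 3.0]     # prediction with the prompt
    for scale in (1.0, 7.5, 11.0):
        print(scale, apply_cfg(uncond, cond, scale))
```

A scale of 1.0 returns the conditioned prediction unchanged; larger values push further in the prompt’s direction, which is why high settings drift toward exaggerated or abstract results.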

Inference step efficiency:

– 30 steps: Draft quality (2.3 min/video)

– 50 steps: Production quality (3.8 min/video)

– 75 steps: Marginal improvement (5.1 min/video)

Diminishing returns appear after 50 steps for most content types.
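To see how the step count affects batch planning, the sketch below converts the per-video timings listed above into clips per unattended run. The timings are the figures quoted in this section; the 8-hour window and the helper name are assumptions.

```python
# Minutes per video at each step count, as quoted above
MINUTES_PER_VIDEO = {30: 2.3, 50: 3.8, 75: 5.1}


def videos_in_window(hours: float, steps: int) -> int:
    """Whole videos that fit in `hours` at the given step count."""
    return int(hours * 60 // MINUTES_PER_VIDEO[steps])


if __name__ == "__main__":
    for steps in sorted(MINUTES_PER_VIDEO):
        print(f"{steps} steps: {videos_in_window(8, steps)} videos in 8 hours")
```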

Hardware Requirements and Optimization Strategies

Minimum Specifications

CogVideoX-2B (720×480, 4 sec):

– GPU: RTX 3060 12GB

– RAM: 16GB

– Generation time: ~6 minutes

CogVideoX-5B (720×480, 4 sec):

– GPU: RTX 4090 24GB

– RAM: 32GB

– Generation time: ~3.8 minutes
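A back-of-the-envelope estimate shows why the two models land on different GPUs. The sketch counts only the diffusion transformer’s weights, using the nominal parameter counts in the model names; the text encoder, VAE, and activations add to this, so treat the result as a lower bound. `weight_memory_gib` is a hypothetical helper.

```python
BYTES_PER_PARAM = {"float32": 4, "float16": 2, "bfloat16": 2, "int8": 1}


def weight_memory_gib(num_params: float, dtype: str) -> float:
    """Memory needed to hold the weights alone, in GiB."""
    return num_params * BYTES_PER_PARAM[dtype] / 2**30


if __name__ == "__main__":
    for name, params in (("CogVideoX-2B", 2e9), ("CogVideoX-5B", 5e9)):
        for dtype in ("float16", "int8"):
            print(f"{name} {dtype}: {weight_memory_gib(params, dtype):.2f} GiB")
```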

VRAM Optimization Techniques

Model quantization:

python

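from optimum.quanto import freeze, qint8, quantize

# CogVideoX uses a diffusion transformer, exposed as pipe.transformer (there is no pipe.unet)
quantize(pipe.transformer, weights=qint8)
freeze(pipe.transformer)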

Reduces VRAM usage by 40% with <3% quality loss.
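The idea behind int8 weight quantization can be sketched with a simple symmetric scheme: store each weight as an 8-bit integer plus one shared scale. This illustrates the general technique under that assumption and is not a description of optimum-quanto’s internals; both helpers are hypothetical.

```python
def quantize_int8(weights: list[float]):
    """Map floats to integers in [-127, 127] using one shared scale."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127 if max_abs else 1.0
    q = [max(-127, min(127, round(w / scale))) for w in weights]
    return q, scale


def dequantize(q: list[int], scale: float) -> list[float]:
    """Recover approximate float weights from the stored integers."""
    return [v * scale for v in q]


if __name__ == "__main__":
    weights = [0.5, -1.0, 0.25, 0.0]
    q, scale = quantize_int8(weights)
    restored = dequantize(q, scale)
    worst = max(abs(a - b) for a, b in zip(weights, restored))
    print(q, f"max error {worst:.5f}")
```

Each weight shrinks from 2 bytes to 1, and the rounding error per weight is bounded by half the scale, which is why the quality loss stays small.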

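VAE slicing and tiling:

python

pipe.vae.enable_slicing()
pipe.vae.enable_tiling()

Decodes the latent video in slices and tiles instead of all at once, lowering peak VRAM during decoding and helping 16GB GPUs run 5B models when combined with offloading. Attention slicing (pipe.enable_attention_slicing()) is the equivalent lever for U-Net pipelines such as AnimateDiff.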

CPU offloading:

python

pipe.enable_sequential_cpu_offload()

Trades speed (5x slower) for VRAM reduction (runs on 8GB GPUs).

Production Pipeline Architecture

For agencies generating 500+ videos monthly:

1. Prompt engineering station: CPU-based system for script development

2. Rendering cluster: 3-5 GPU nodes running 24/7 batch jobs

3. Post-processing pipeline: Separate machines for upscaling, interpolation, and audio sync

4. Storage array: NAS system for raw outputs (50GB/day typical)

Total infrastructure cost: $8,000-12,000 versus $18,000/year in Runway Enterprise credits.
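One simple way to spread a prompt queue across the rendering nodes is round-robin assignment, sketched below. This is an illustrative approach rather than a prescribed scheduler; `shard_round_robin` is a hypothetical helper, and each resulting shard could be written out as that node’s CSV prompt list.

```python
def shard_round_robin(prompts: list[str], num_nodes: int) -> list[list[str]]:
    """Assign prompts to nodes in rotation so shard sizes differ by at most one."""
    if num_nodes < 1:
        raise ValueError("num_nodes must be at least 1")
    shards: list[list[str]] = [[] for _ in range(num_nodes)]
    for i, prompt in enumerate(prompts):
        shards[i % num_nodes].append(prompt)
    return shards


if __name__ == "__main__":
    queue = [f"prompt {n}" for n in range(1, 8)]
    for node, shard in enumerate(shard_round_robin(queue, 3)):
        print(f"node {node}: {shard}")
```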

Conclusion

Uncensored AI video generators with unlimited access are not theoretical; they exist today as production-ready tools. CogVideoX comes within about 5% of Sora’s FVD score, AnimateDiff provides unmatched creative control, and ComfyUI enables workflows that commercial platforms don’t offer. For power users generating high volumes of content, the economics, creative freedom, and quality output of self-hosted solutions surpass restricted commercial alternatives.

The barrier isn’t access; it’s technical implementation knowledge. With proper setup, a $1,500 GPU workstation outperforms $15,000/year in platform subscriptions while maintaining complete creative sovereignty.

Frequently Asked Questions

Q: Are these uncensored AI video tools actually legal to use?

A: Yes. The tools mentioned (CogVideoX, AnimateDiff, Tiamat) are released under open licenses such as Apache 2.0, and Stable Diffusion checkpoints typically use OpenRAIL-M. Most permit commercial use, but check each model card because terms vary between weights. Legality depends on what content you create, not the tool itself, the same way Photoshop is legal despite potential misuse. You remain responsible for copyright compliance and local content laws.

Q: How does CogVideoX quality compare to Sora in measurable terms?

A: CogVideoX-5B scores 91.2 FVD (Fréchet Video Distance, lower is better) versus Sora’s 87.3, a gap of about 4.5%. In CLIP-Score text-alignment tests, CogVideoX reaches 0.317 compared to Runway’s 0.324, a difference of roughly 2% that is imperceptible in most use cases. Temporal consistency metrics show CogVideoX at 0.91 versus Pika’s 0.89, indicating slightly better frame coherence.

Q: What GPU do I actually need to run unlimited AI video generation?

A: Minimum viable: RTX 3060 12GB for CogVideoX-2B (720×480, ~6 min/video). Recommended: RTX 4090 24GB for CogVideoX-5B (720×480, ~3.8 min/video). Budget alternative: Rent cloud GPUs on RunPod ($0.34/hour) or Vast.ai ($0.20/hour). With VRAM optimization techniques like quantization, slicing, and CPU offloading, even 16GB cards can run 5B models at acceptable speeds.

Q: Can I really generate unlimited videos without any costs?

A: There are no artificial usage limits, but you pay for computation. Local generation costs electricity (well under $0.01/video on an RTX 4090, assuming roughly 450 W draw, 3.8 minutes per video, and $0.15/kWh). Cloud GPUs cost $0.20-0.34/hour (generating 15-20 videos/hour). Compare this to Runway’s $12/month for 125 credits (10 videos). After 30 videos monthly, self-hosting becomes cheaper with unlimited scaling potential.

Q: What is ‘seed parity’ and why does it matter for video generation?

A: Seed parity keeps the starting noise consistent across separate video generations by reusing the same seed. Setting base_seed=42 for every scene gives each generation identical initial noise, which helps keep composition and appearance closer across scenes; incrementing the seed (43, 44, 45) produces unrelated noise and is better for generating variations. Where a tool doesn’t expose seed controls, multi-shot narratives with consistent subjects become much harder.

Q: How do I set up ComfyUI for unrestricted AnimateDiff workflows?

A: Install ComfyUI from GitHub, add AnimateDiff extension via Manager, download motion modules (mm_sd_v15_v2.ckpt), and load uncensored Stable Diffusion checkpoints. Build node workflows: Checkpoint → CLIP Encode → AnimateDiff Loader → KSampler (50 steps, Euler a) → VAE Decode → Video Combine. This architecture provides complete control over generation parameters that commercial platforms hide.