VidRemake · Remake

Powered exclusively by: Sora 2 (OpenAI)

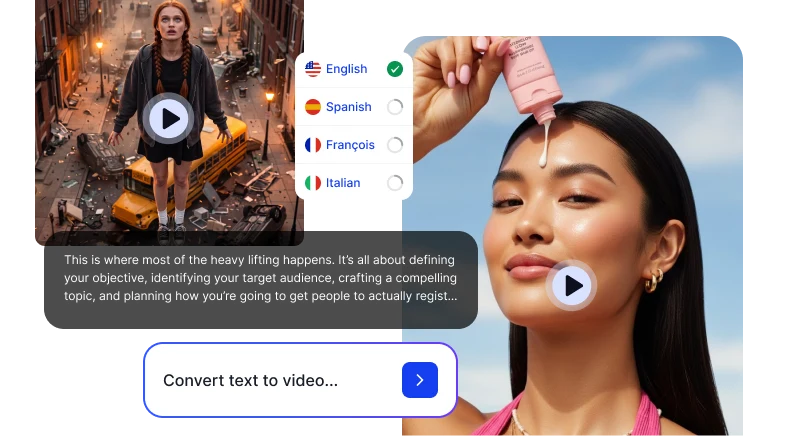

Upload a Viral Video. Make It Yours.

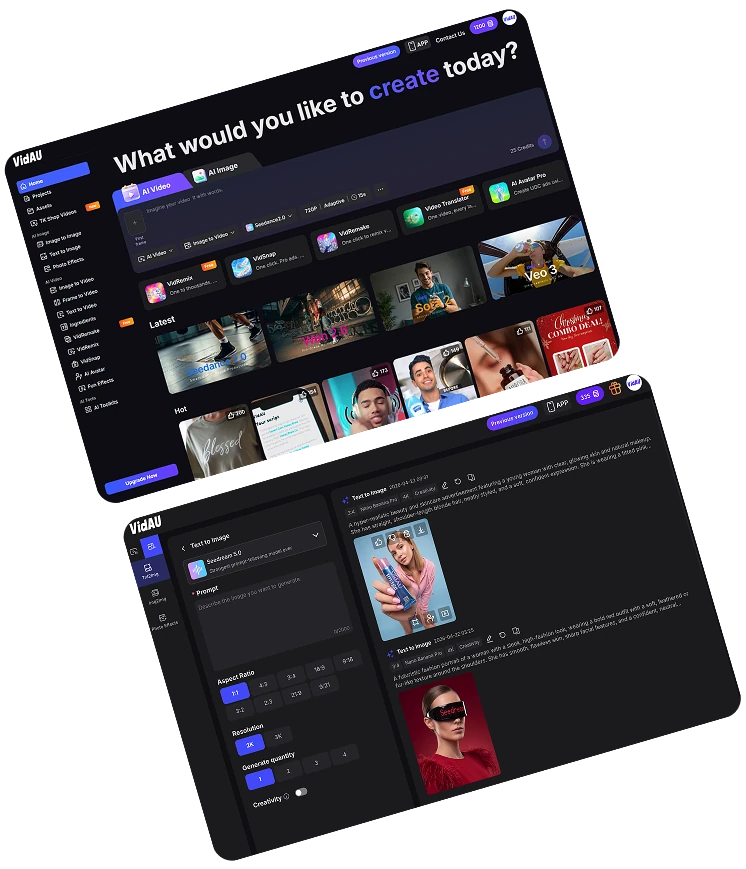

Powered by VidAU, Veo 3, Seedance & More

Trusted by brands and creators worldwide

Awards: Best AI Tool for Ad Creative, Extraordinary Awards 2025; #1 Product of the Month, Product Hunt, September 2025; Google Cloud Startup Program Grant, $350,000

Powered by VidAU

Powered by: Veo 3 · Nano Banana · Sora 2

Powered by: Veo 3 · Sora 2 · Seedance 1.0 · Hailuo 2.3 · Kling 2.5 · Vidu AI · Wan AI · PixVerse V5 · VidAU

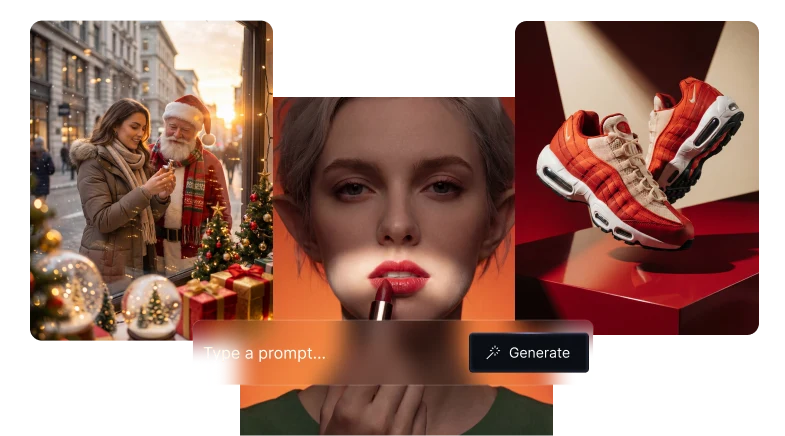

Powered by: Nano Banana 2 · Nano Banana Pro · Nano Banana · VidAU Image V1.5

Veo 3 · Google DeepMind · Cinematic Realism · Native Audio

Sora 2 · OpenAI · Narrative Structure · Viral Cloning

Seedance 1.0 · ByteDance · Product Precision · Smooth Motion

Hailuo 2.3 · MiniMax · Long-Form · Budget Performance

Kling 2.5 · Kuaishou · Object Accuracy · Human Motion

Vidu AI · Aesthetic Range · Consistent Characters

Wan AI · Fluid Motion · Lifestyle Content · Natural Scenes

PixVerse V5 · Bold Creative · Multi-Style

VidAU · Proprietary · Ad Performance · Commercial Optimised

ByteDance · Deep Thinking · Real-Time Search

Maximum Prompt Accuracy

Proprietary · Commercial Campaigns · Ad-Ready

Free to start · No editing skills needed · Commercial-ready output · Cancel anytime