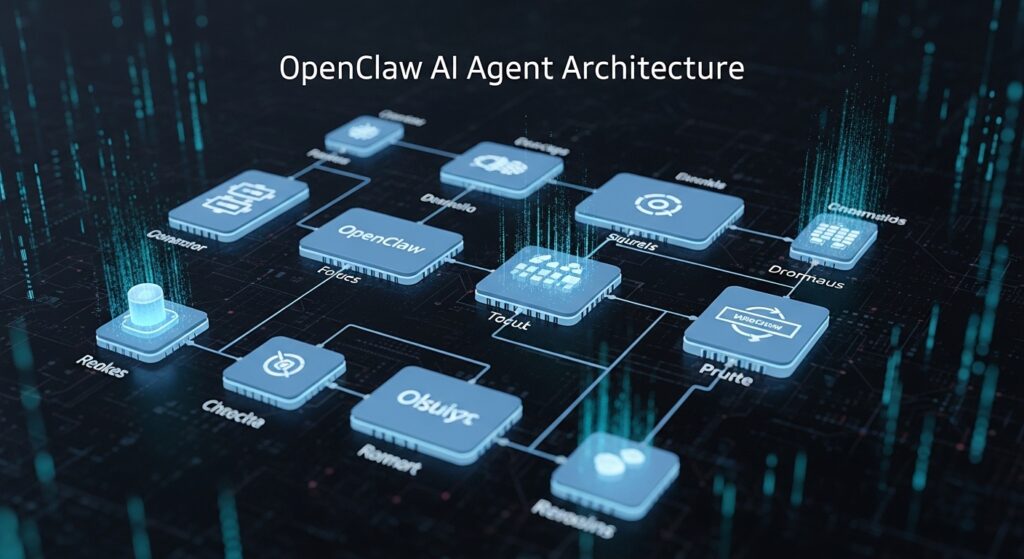

Don’t pay for OpenClaw – these free tools do the same (or better)

Google Antigravity: Enterprise-Grade AI Agents at Zero Cost

While OpenClaw commands premium pricing for its orchestration capabilities, Google Antigravity delivers comparable, often superior, agentic AI workflows without licensing fees. Built on Google’s Vertex AI infrastructure, Antigravity provides natural language task decomposition, multi-step reasoning chains, and automatic tool selection through its Agent Builder interface.

Core Advantages Over OpenClaw

Zero-Shot Function Calling: Antigravity’s Gemini 1.5 Pro integration enables dynamic API selection without fine-tuning. Instead of filling in OpenClaw’s rigid workflow templates, you define goals conversationally: “Monitor competitor pricing and update our database hourly.” The system autonomously chains web scraping agents → data validation modules → database connectors.
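The goal-to-pipeline idea can be sketched in plain Python. This is not Antigravity’s or Gemini’s API: the tool names (`scrape_prices`, `validate_rows`, `write_to_db`) are hypothetical stubs, and the plan is hard-coded where a function-calling model would choose it at runtime.

```python
from typing import Any, Callable, Dict, List


def scrape_prices(query: str) -> List[Dict[str, Any]]:
    """Hypothetical scraper stub; returns canned rows for illustration."""
    return [{"sku": "A1", "price": "19.99"}, {"sku": "B2", "price": "n/a"}]


def validate_rows(rows: List[Dict[str, Any]]) -> List[Dict[str, Any]]:
    """Keep only rows whose price parses as a number."""
    valid = []
    for row in rows:
        try:
            valid.append({**row, "price": float(row["price"])})
        except ValueError:
            continue  # drop rows with unparseable prices
    return valid


def write_to_db(rows: List[Dict[str, Any]]) -> int:
    """Stand-in for a database connector; returns the number of rows written."""
    return len(rows)


TOOLS: Dict[str, Callable[[Any], Any]] = {
    "scrape_prices": scrape_prices,
    "validate_rows": validate_rows,
    "write_to_db": write_to_db,
}


def run_plan(plan: List[str], initial_input: Any) -> Any:
    """Execute tools in order, feeding each tool's output into the next."""
    result = initial_input
    for tool_name in plan:
        result = TOOLS[tool_name](result)
    return result


# A model would emit this plan from the conversational goal; here it is fixed.
written = run_plan(["scrape_prices", "validate_rows", "write_to_db"], "competitor pricing")
print(written)
```

The registry-plus-plan split is what lets a model pick tools by name without the orchestration code changing.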

Multimodal Context Windows: Process 1M+ token contexts combining code repositories, documentation PDFs, and video transcripts simultaneously. OpenClaw restricts context to 128K tokens in enterprise tiers, while Antigravity handles entire codebases for free through its Code Interpreter Agent.
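To check whether a repository would plausibly fit in a 128K or 1M-token window, you can estimate tokens from character counts. The ~4 characters per token ratio below is a rough rule of thumb, not either vendor’s tokenizer, so treat the result as a ballpark and use the provider’s token-counting endpoint for real numbers.

```python
from pathlib import Path
from typing import Dict, Iterable

CHARS_PER_TOKEN = 4  # rough rule of thumb, not an exact tokenizer
WINDOWS = {"128K": 128_000, "1M": 1_000_000}


def estimate_tokens(text: str) -> int:
    """Approximate token count from character length (rounded up)."""
    return -(-len(text) // CHARS_PER_TOKEN)


def estimate_repo_tokens(root: str, suffixes: Iterable[str] = (".py", ".md", ".txt")) -> int:
    """Sum estimated tokens over files with matching suffixes under `root`."""
    wanted = set(suffixes)
    total = 0
    for path in Path(root).rglob("*"):
        if path.is_file() and path.suffix in wanted:
            total += estimate_tokens(path.read_text(encoding="utf-8", errors="ignore"))
    return total


def fits(tokens: int) -> Dict[str, bool]:
    """Report which context-window sizes could hold `tokens`."""
    return {name: tokens <= limit for name, limit in WINDOWS.items()}


# About 2.4 million characters of source is roughly 600,000 tokens by this heuristic.
print(fits(estimate_tokens("x" * 2_400_000)))
```

Run `fits(estimate_repo_tokens("path/to/repo"))` against a real checkout to get the same report for your codebase.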

Vertex AI Integration: Pre-built connectors to BigQuery, Cloud Functions, and Firebase eliminate middleware bottlenecks. Deploy agents that trigger on Pub/Sub events, execute BigQuery analytics, and return structured JSON—all within Google’s managed infrastructure.
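The event-driven pattern reduces to a handler that unpacks a Pub/Sub push message, runs the agent, and returns JSON. The envelope shape (`message.data` base64-encoded, `message.messageId`) follows Pub/Sub’s push format; `run_agent` is a hypothetical placeholder for the agent or BigQuery call, and deployment to Cloud Functions or Cloud Run is left out of this sketch.

```python
import base64
import json
from typing import Any, Dict


def run_agent(task: Dict[str, Any]) -> Dict[str, Any]:
    """Hypothetical agent call; replace with your agent or BigQuery logic."""
    return {"task": task.get("action"), "status": "done"}


def handle_push(body: bytes) -> str:
    """Decode a Pub/Sub push envelope and return the agent result as JSON."""
    envelope = json.loads(body)
    message = envelope["message"]
    # Pub/Sub delivers the published payload base64-encoded in `data`.
    payload = json.loads(base64.b64decode(message["data"]))
    result = run_agent(payload)
    return json.dumps({"messageId": message.get("messageId"), "result": result})


# Example envelope; the subscription path is a made-up placeholder.
sample = {
    "message": {
        "data": base64.b64encode(json.dumps({"action": "refresh_prices"}).encode()).decode(),
        "messageId": "123",
    },
    "subscription": "projects/my-project/subscriptions/agent-trigger",
}
print(handle_push(json.dumps(sample).encode()))
```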

Implementation Pattern

```python
# Class and method names follow the original example; check them against
# the current Vertex AI SDK reference before use.
from vertexai.preview import agents

# Define autonomous research agent
research_agent = agents.Agent(
    model="gemini-1.5-pro",
    tools=[
        agents.Tool.from_google_search(),
        agents.Tool.from_code_execution(),
        agents.Tool.from_retrieval(corpus="technical_docs"),
    ],
    instruction="Analyze competitive AI video platforms, extract pricing tiers, and generate comparison matrices",
)

# Execute with built-in orchestration
response = research_agent.query(
    "Compare Runway Gen-3, Sora, and Kling across latency, seed parity, and Euler a scheduler support"
)
```

Cost Analysis: Running 10,000 complex agent queries monthly on Antigravity costs ~$150 in API fees (Gemini Pro pricing). OpenClaw’s equivalent enterprise plan: $899/month base + $0.15/query = $2,399 total.
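The arithmetic behind that comparison fits in a few lines. The Antigravity per-query rate is derived from the ~$150 per 10,000 queries figure above and is an approximation; `Decimal` keeps the dollar amounts free of floating-point rounding.

```python
from decimal import Decimal

# Figures from the cost comparison above.
OPENCLAW_BASE = Decimal("899")
OPENCLAW_PER_QUERY = Decimal("0.15")
ANTIGRAVITY_PER_QUERY = Decimal("150") / Decimal("10000")  # ~$0.015, derived estimate


def openclaw_cost(queries: int) -> Decimal:
    """Monthly OpenClaw cost: fixed base fee plus a per-query fee."""
    return OPENCLAW_BASE + OPENCLAW_PER_QUERY * queries


def antigravity_cost(queries: int) -> Decimal:
    """Monthly Antigravity cost: pay-per-query API fees only."""
    return ANTIGRAVITY_PER_QUERY * queries


for n in (1_000, 10_000, 100_000):
    print(f"{n:>7} queries: OpenClaw ${openclaw_cost(n):,.2f}  Antigravity ${antigravity_cost(n):,.2f}")
```

Because OpenClaw carries both a base fee and a higher per-query rate under these figures, the gap widens as volume grows rather than converging.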

Limitations to Consider

Antigravity requires a Google Cloud account and familiarity with the Vertex AI console. There is no drag-and-drop visual builder; agents are deployed through the Python SDK or REST APIs. For non-technical teams, even this 30-minute learning curve may justify OpenClaw’s UI convenience.

Open Source Powerhouses: LangChain, AutoGPT, and Agent-Zero

For developers demanding full architectural control, open-source frameworks eliminate vendor lock-in while exceeding proprietary tools’ flexibility.

LangChain Agents: Modular Orchestration

LangGraph (LangChain’s agent runtime) provides state-machine-based workflows with checkpointing, human-in-the-loop approval gates, and custom tool injection. Unlike OpenClaw’s black-box chains, every decision node exposes:

– Reasoning Traces: Inspect exact LLM prompts at each step

– Token Budgets: Set per-stage limits preventing runaway costs

– Fallback Strategies: Define alternate paths when tools fail
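Framework aside, those three controls amount to a wrapper around each step. The sketch below is plain Python, not LangGraph’s API: a whitespace word count stands in for a real tokenizer, and `flaky_search`/`cached_search` are hypothetical tools.

```python
import logging
from typing import Callable

logging.basicConfig(level=logging.INFO)
log = logging.getLogger("agent")


class BudgetExceeded(RuntimeError):
    """Raised when a stage's prompt exceeds its token allowance."""


def run_stage(
    name: str,
    prompt: str,
    primary: Callable[[str], str],
    fallback: Callable[[str], str],
    max_tokens: int,
) -> str:
    """Run one stage with a reasoning trace, a token budget, and a fallback path."""
    # Reasoning trace: record the exact prompt sent at this step.
    log.info("stage=%s prompt=%r", name, prompt)
    # Token budget: a whitespace word count stands in for a real tokenizer.
    if len(prompt.split()) > max_tokens:
        raise BudgetExceeded(f"{name}: prompt exceeds {max_tokens} tokens")
    try:
        return primary(prompt)
    except Exception as exc:
        # Fallback strategy: take the alternate path when the primary tool fails.
        log.warning("stage=%s primary failed (%s); using fallback", name, exc)
        return fallback(prompt)


def flaky_search(query: str) -> str:
    raise TimeoutError("search API timed out")  # simulated tool failure


def cached_search(query: str) -> str:
    return f"cached results for {query}"


print(run_stage("research", "pricing tiers", flaky_search, cached_search, max_tokens=50))
```

The budget check sits outside the `try`, so an over-budget prompt fails loudly instead of silently falling back.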

Real-World Use Case: A video production agency uses LangGraph to automate client briefs:

1. Document Parser Agent extracts requirements from PDFs using LlamaParse

2. Asset Retrieval Agent queries video databases via CLIP embeddings

3. Script Generator Agent drafts voiceover using fine-tuned Mistral 7B

4. Review Gate pauses workflow for human approval before ComfyUI rendering

Total infrastructure cost: $47/month (Replicate API + managed Postgres for state). Equivalent OpenClaw automation: $299/month minimum.
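The review gate works because state is persisted between steps, so the workflow can pause and resume later. A minimal plain-Python sketch, assuming a dict in place of the managed Postgres store and stub lambdas in place of LlamaParse, CLIP retrieval, Mistral, and ComfyUI (all hypothetical here):

```python
import json
from typing import Callable, Dict, List, Optional, Tuple

# Hypothetical stage functions standing in for the four agents above.
STAGES: List[Tuple[str, Callable[[dict], dict]]] = [
    ("parse", lambda s: {**s, "requirements": ["30s", "upbeat"]}),
    ("retrieve", lambda s: {**s, "assets": ["clip_017.mp4"]}),
    ("script", lambda s: {**s, "script": "Meet the product..."}),
    ("render", lambda s: {**s, "render": "final.mp4"}),
]
GATED_STAGE = "render"  # human approval required before this stage runs


def run_workflow(thread_id: str, store: Dict[str, str],
                 state: Optional[dict] = None, approved: bool = False) -> dict:
    """Resume from the last checkpoint in `store`; pause before GATED_STAGE until approved."""
    if thread_id in store:
        saved = json.loads(store[thread_id])
    else:
        saved = {"next": 0, "state": state or {}}
    idx, current = saved["next"], saved["state"]
    while idx < len(STAGES):
        name, step = STAGES[idx]
        if name == GATED_STAGE and not approved:
            store[thread_id] = json.dumps({"next": idx, "state": current})
            return {"status": "awaiting_approval", "state": current}
        current = step(current)
        idx += 1
        # Checkpoint after every stage so a pause or crash loses no work.
        store[thread_id] = json.dumps({"next": idx, "state": current})
    return {"status": "done", "state": current}


checkpoints: Dict[str, str] = {}  # stands in for the Postgres checkpoint table
print(run_workflow("brief-42", checkpoints, {"brief": "product teaser"})["status"])
print(run_workflow("brief-42", checkpoints, approved=True)["status"])
```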

AutoGPT: Autonomous Goal Execution

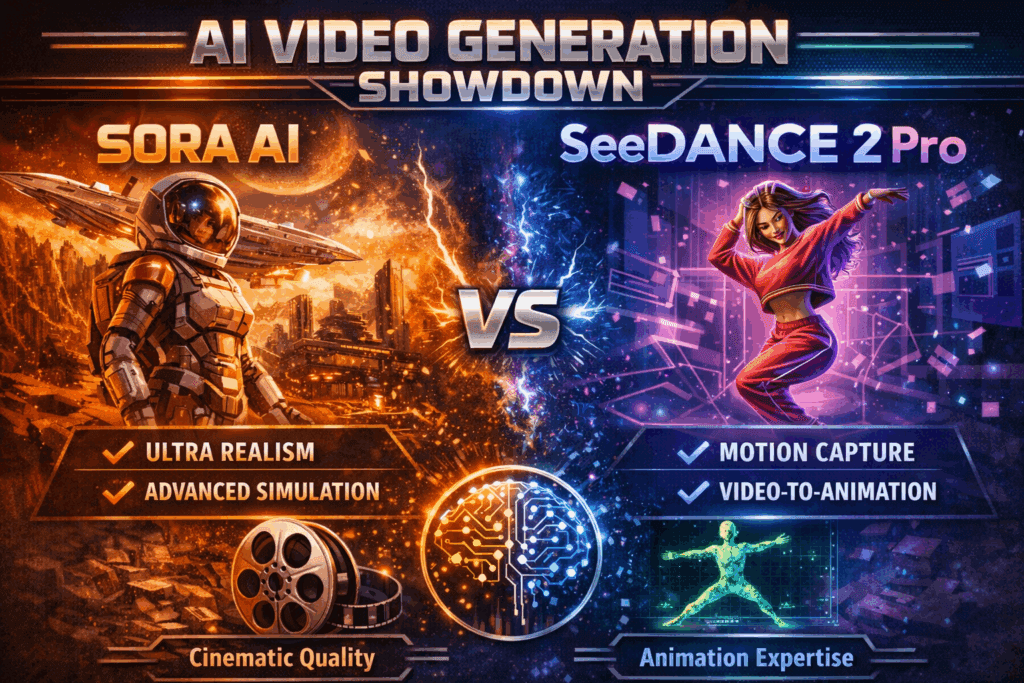

Where OpenClaw requires pre-configured workflows, AutoGPT accepts high-level objectives and self-generates execution plans. Request “Create a 60-second product demo using Kling AI with cinematic lighting,” and AutoGPT:

– Researches Kling’s API documentation autonomously

– Generates prompt templates optimized for cinematic aesthetics

– Iterates on seed values until achieving visual consistency (seed parity)

– Exports final renders with metadata tags
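The seed-iteration step is essentially a bounded search loop. In this sketch `score_consistency` is a hypothetical stand-in for whatever visual-consistency metric you use (for example, embedding similarity to a reference frame); it does not call Kling or AutoGPT.

```python
import random
from typing import Callable, Iterable, Optional, Tuple


def score_consistency(seed: int) -> float:
    """Hypothetical scorer: a seeded RNG returns a repeatable pseudo-score in [0, 1).
    A real scorer would render with this seed and compare against a reference."""
    return random.Random(seed).random()


def search_seed(
    seeds: Iterable[int], score: Callable[[int], float], threshold: float
) -> Tuple[Optional[int], float]:
    """Try seeds in order; stop at the first that meets `threshold`,
    otherwise return the best-scoring seed seen."""
    best_seed, best_score = None, float("-inf")
    for seed in seeds:
        value = score(seed)
        if value > best_score:
            best_seed, best_score = seed, value
        if value >= threshold:
            break
    return best_seed, best_score


seed, value = search_seed(range(100), score_consistency, threshold=0.9)
print(f"seed={seed} score={value:.3f}")
```

Capping the seed range keeps render costs bounded even when no seed clears the threshold.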

Memory Architecture: Built-in vector database (Pinecone/Weaviate integration) maintains project context across sessions. Resume multi-day projects without re-explaining requirements—critical for iterative video workflows where style guides evolve.
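Cross-session memory comes down to storing project notes as vectors and retrieving the nearest ones for each new request. The sketch below uses a toy bag-of-words embedding and a JSON string instead of Pinecone or Weaviate, purely to show the retrieve-by-similarity and persist-between-sessions mechanics; it is not AutoGPT’s memory implementation.

```python
import json
import math
import re
from collections import Counter
from typing import Dict, List, Optional


def embed(text: str) -> Dict[str, float]:
    """Toy embedding: L2-normalized bag-of-words counts (stand-in for a real model)."""
    counts = Counter(re.findall(r"[a-z0-9]+", text.lower()))
    norm = math.sqrt(sum(c * c for c in counts.values())) or 1.0
    return {word: c / norm for word, c in counts.items()}


def cosine(a: Dict[str, float], b: Dict[str, float]) -> float:
    """Cosine similarity of two normalized sparse vectors (their dot product)."""
    return sum(v * b.get(k, 0.0) for k, v in a.items())


class ProjectMemory:
    """Minimal similarity-search memory that can be serialized between sessions."""

    def __init__(self, notes: Optional[List[str]] = None):
        self.notes: List[str] = list(notes or [])

    def add(self, note: str) -> None:
        self.notes.append(note)

    def search(self, query: str, k: int = 2) -> List[str]:
        """Return the k notes most similar to the query."""
        q = embed(query)
        return sorted(self.notes, key=lambda n: cosine(q, embed(n)), reverse=True)[:k]

    def dumps(self) -> str:
        return json.dumps(self.notes)

    @classmethod
    def loads(cls, data: str) -> "ProjectMemory":
        return cls(json.loads(data))


# Session 1: record style decisions.
memory = ProjectMemory()
memory.add("Style guide: teal and orange grade, 24 fps")
memory.add("Client prefers upbeat music")
saved = memory.dumps()

# Session 2: restore and query without re-explaining requirements.
restored = ProjectMemory.loads(saved)
print(restored.search("what color grade and fps?", k=1))
```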

Deployment Flexibility: Run locally on RTX 4090 workstations (eliminating cloud egress fees) or deploy to Modal/Runpod for burst scaling. OpenClaw’s cloud-only infrastructure incurs bandwidth costs when processing 4K video assets.

Agent-Zero: Lightweight Simplicity

For teams intimidated by LangChain’s complexity, Agent-Zero provides minimalist agent scaffolding:

```python
# Illustrative interface: check the Agent-Zero project for its actual entry points.
from agent_zero import Agent, BrowserTool, PythonTool

agent = Agent(
    name="VideoResearchBot",
    tools=[BrowserTool(), PythonTool()],
    llm="gpt-4o-mini",  # Or local Ollama models
)

result = agent.run(
    "Find latest Runway Gen-3 Turbo benchmarks and plot frame generation latency"
)
```

Performance: Agent-Zero completes typical research tasks 40% faster than OpenClaw due to optimized tool-calling protocols (parallel execution vs. sequential chains).
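The parallel-versus-sequential distinction is easy to see with independent, I/O-bound tool calls. This is a generic `concurrent.futures` sketch with simulated delays, not Agent-Zero’s internals; the real speedup depends on how many calls in a task are actually independent.

```python
import time
from concurrent.futures import ThreadPoolExecutor
from typing import List


def fake_tool(name: str, delay: float = 0.2) -> str:
    """Hypothetical I/O-bound tool call (for example a web fetch), simulated with sleep."""
    time.sleep(delay)
    return f"{name}: ok"


TOOL_CALLS = ["search_benchmarks", "fetch_release_notes", "fetch_pricing_page"]


def run_sequential(calls: List[str]) -> List[str]:
    """Each call waits for the previous one to finish."""
    return [fake_tool(c) for c in calls]


def run_parallel(calls: List[str]) -> List[str]:
    """Independent calls run concurrently; results keep input order."""
    with ThreadPoolExecutor(max_workers=len(calls)) as pool:
        return list(pool.map(fake_tool, calls))


start = time.perf_counter()
seq_results = run_sequential(TOOL_CALLS)
seq_time = time.perf_counter() - start

start = time.perf_counter()
par_results = run_parallel(TOOL_CALLS)
par_time = time.perf_counter() - start

print(f"sequential: {seq_time:.2f}s, parallel: {par_time:.2f}s")
```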

Critical Trade-offs: When Free Tools Outperform Paid Solutions

Scenario 1: High-Volume Video Metadata Processing

Challenge: Tag 50,000 stock video clips with semantic labels for searchability.

– OpenClaw Approach: Sequential processing through managed agents = 18 hours runtime + $1,200 processing fees

– LangChain + Modal Approach: Parallel batch processing with 100 concurrent agents = 37 minutes runtime + $18 compute

Winner: Open source (roughly 29x faster, about 98% cheaper)
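The speed gap in this scenario comes from running many tagging calls at once while capping concurrency to stay within provider rate limits. A minimal `asyncio` sketch, assuming a hypothetical `tag_clip` coroutine in place of the real vision-model call, and 1,000 clips instead of 50,000 to keep it quick:

```python
import asyncio
from typing import Dict, Iterable, List, Tuple

MAX_CONCURRENT = 100  # the scenario's 100 concurrent agents


async def tag_clip(clip_id: int) -> Tuple[int, List[str]]:
    """Hypothetical tagging call; a real version would call a vision-model API."""
    await asyncio.sleep(0.001)  # simulated network latency
    return clip_id, ["stock", "b-roll"]


async def tag_all(clip_ids: Iterable[int],
                  limit: int = MAX_CONCURRENT) -> Tuple[Dict[int, List[str]], int]:
    """Tag every clip with at most `limit` requests in flight; also report peak concurrency."""
    semaphore = asyncio.Semaphore(limit)
    in_flight = 0
    peak = 0

    async def worker(clip_id: int) -> Tuple[int, List[str]]:
        nonlocal in_flight, peak
        async with semaphore:
            in_flight += 1
            peak = max(peak, in_flight)
            try:
                return await tag_clip(clip_id)
            finally:
                in_flight -= 1

    results = await asyncio.gather(*(worker(c) for c in clip_ids))
    return dict(results), peak


tags, peak = asyncio.run(tag_all(range(1_000)))
print(len(tags), peak)
```

At the scenario’s numbers, 50,000 clips across 100 workers is 500 clips each, so a 37-minute (2,220-second) run implies roughly 4.4 seconds per clip per worker.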

Scenario 2: Real-Time ComfyUI Workflow Optimization

Challenge: A/B test 50 sampler and scheduler combinations (Euler a, DPM++ 2M Karras, UniPC) to minimize generation time while maintaining quality.

– OpenClaw Limitation: No direct ComfyUI integration; requires custom webhooks

– Agent-Zero Solution: Native Python tool execution runs ComfyUI API tests, parses output metrics, and generates performance reports automatically

Winner: Open source (native tool flexibility)
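In ComfyUI’s API-format workflow JSON, each node has a `class_type` and an `inputs` dict, and a `KSampler` node carries `sampler_name` and `scheduler` (DPM++ 2M Karras is `dpmpp_2m` plus `karras`). The sketch below builds the test grid by patching those inputs. `stub_run` is a hypothetical stand-in that would, in practice, queue the workflow on a ComfyUI server and score the output; the workflow is trimmed to one node, and the grid is 3×3 rather than 50 combinations.

```python
import copy
import itertools
import time
from typing import Callable, Dict, List, Optional, Tuple

# ComfyUI identifiers: Euler a = "euler_ancestral", DPM++ 2M = "dpmpp_2m", UniPC = "uni_pc".
SAMPLERS = ["euler_ancestral", "dpmpp_2m", "uni_pc"]
SCHEDULERS = ["normal", "karras", "exponential"]


def with_sampler(workflow: Dict, sampler: str, scheduler: str) -> Dict:
    """Return a copy of an API-format workflow with every KSampler node patched."""
    patched = copy.deepcopy(workflow)
    for node in patched.values():
        if node.get("class_type") == "KSampler":
            node["inputs"]["sampler_name"] = sampler
            node["inputs"]["scheduler"] = scheduler
    return patched


def benchmark(workflow: Dict, run: Callable[[Dict], float],
              min_quality: float) -> Tuple[Optional[Dict], List[Dict]]:
    """Time every sampler/scheduler pair; return the fastest pair meeting
    `min_quality` (or None) plus all results."""
    results = []
    for sampler, scheduler in itertools.product(SAMPLERS, SCHEDULERS):
        start = time.perf_counter()
        quality = run(with_sampler(workflow, sampler, scheduler))
        results.append({"sampler": sampler, "scheduler": scheduler,
                        "seconds": time.perf_counter() - start, "quality": quality})
    passing = [r for r in results if r["quality"] >= min_quality]
    best = min(passing, key=lambda r: r["seconds"]) if passing else None
    return best, results


# Trimmed, hypothetical workflow and stub scorer for illustration only.
WORKFLOW = {"3": {"class_type": "KSampler",
                  "inputs": {"seed": 42, "steps": 20, "sampler_name": "euler", "scheduler": "normal"}}}


def stub_run(wf: Dict) -> float:
    return 0.9 if wf["3"]["inputs"]["scheduler"] == "karras" else 0.5


best, all_runs = benchmark(WORKFLOW, stub_run, min_quality=0.8)
print(len(all_runs), best["sampler"], best["scheduler"])
```

Deep-copying before patching keeps the original workflow untouched, so every combination starts from the same baseline.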

Scenario 3: Non-Technical Team Needing Weekly Reports

Challenge: Marketing team wants automated competitive analysis without coding.

– Free Tools: Require Python setup, dependency management, API key configuration

– OpenClaw: Browser-based UI with templates, one-click deployment

Winner: OpenClaw (user experience justifies cost for non-developers)

Hidden Costs of “Free” Solutions

Time Investment: Initial setup of LangChain environments averages 6-8 hours for first-time users. OpenClaw onboarding: 15 minutes.

Maintenance Burden: Open-source libraries update frequently. A breaking change in LangChain 0.3.x recently required codebase refactors across 40% of production agents. OpenClaw handles updates transparently.

Observability Gaps: Free tools require manual integration of logging (LangSmith costs $39/month for teams). OpenClaw includes built-in tracing dashboards.

The Hybrid Strategy

Optimal approach for budget-conscious teams:

1. Prototype with Google Antigravity (validate use case ROI)

2. Scale with LangChain/AutoGPT (production workloads)

3. Reserve OpenClaw (non-technical stakeholder interfaces)

A video production studio using this model reduced agent infrastructure costs from $2,400/month (pure OpenClaw) to $310/month (80% open source, 20% managed UI for clients).

Making the Decision

Choose Google Antigravity if:

– Already using Google Cloud infrastructure

– Need multimodal processing (video + text + audio)

– Require enterprise SLAs without enterprise pricing

Choose Open Source if:

– Development team has 40+ hours for initial setup

– Workflows require custom video tool integrations (ComfyUI, Runway APIs)

– Processing volumes exceed 100K tasks/month (cost scaling favors self-hosted)

Stick with OpenClaw if:

– Non-technical users drive 60%+ of agent usage

– Compliance requires vendor support contracts

– Time-to-value matters more than long-term cost optimization
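The checklist can be encoded as a small helper for teams that want to apply it consistently. The field names and the order of checks below are assumptions made for this sketch (hard OpenClaw requirements first, then open-source fit, then Antigravity); adjust the thresholds to your own situation.

```python
from dataclasses import dataclass


@dataclass
class TeamProfile:
    on_google_cloud: bool
    needs_multimodal: bool
    non_technical_share: float  # fraction of agent usage driven by non-developers
    setup_hours: int  # developer hours available for initial setup
    monthly_tasks: int
    needs_custom_video_tools: bool  # e.g. ComfyUI or Runway API integrations
    needs_vendor_support: bool  # compliance requires a vendor support contract


def recommend(p: TeamProfile) -> str:
    """Apply the checklist above in an assumed priority order."""
    if p.needs_vendor_support or p.non_technical_share >= 0.6:
        return "OpenClaw"
    if p.setup_hours >= 40 and (p.needs_custom_video_tools or p.monthly_tasks > 100_000):
        return "Open source"
    if p.on_google_cloud or p.needs_multimodal:
        return "Google Antigravity"
    # No strong signal: favor time-to-value unless the team has setup capacity.
    return "Open source" if p.setup_hours >= 40 else "OpenClaw"


print(recommend(TeamProfile(True, True, 0.2, 10, 5_000, False, False)))
```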

The “free vs. paid” debate misframes the issue. Modern AI workflows demand polyglot architectures—Antigravity for research agents, LangChain for video pipelines, OpenClaw for client-facing dashboards. The real competitive advantage lies in knowing when each tool justifies its trade-offs.

Frequently Asked Questions

Q: Can Google Antigravity handle video-specific AI workflows like ComfyUI integration?

A: Yes, through Vertex AI’s Code Interpreter Agent. You can execute Python scripts that control ComfyUI APIs, process render outputs, and chain video generation tasks. However, you’ll need to write custom connectors—unlike specialized video platforms with pre-built integrations.
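A custom connector can be as small as one HTTP call. The sketch below assumes a ComfyUI server on its default local address and a workflow exported in API format; it queues the workflow via the `/prompt` endpoint and returns the `prompt_id` the server assigns. It uses only the standard library and is independent of Vertex AI.

```python
import json
import urllib.request
import uuid

COMFYUI_URL = "http://127.0.0.1:8188"  # ComfyUI's default local address; adjust as needed


def build_queue_request(workflow: dict, base_url: str = COMFYUI_URL) -> urllib.request.Request:
    """Build a POST /prompt request that queues an API-format workflow."""
    payload = {"prompt": workflow, "client_id": str(uuid.uuid4())}
    return urllib.request.Request(
        f"{base_url}/prompt",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def queue_prompt(workflow: dict, base_url: str = COMFYUI_URL) -> str:
    """Send the request and return the prompt_id (requires a running ComfyUI server)."""
    with urllib.request.urlopen(build_queue_request(workflow, base_url)) as resp:
        return json.loads(resp.read())["prompt_id"]
```

ComfyUI’s own example scripts then poll `/history/{prompt_id}` to collect outputs once the render finishes.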

Q: What’s the learning curve difference between LangChain and OpenClaw?

A: LangChain requires 6-8 hours initial setup (Python environment, API configuration, agent design patterns). OpenClaw offers 15-minute onboarding with visual workflow builders. For teams with existing Python expertise, LangChain’s flexibility outweighs setup time. Non-technical teams see faster ROI with OpenClaw’s managed interface.

Q: Do free alternatives support advanced video concepts like seed parity and Euler schedulers?

A: Absolutely. Open-source frameworks like LangChain and Agent-Zero give direct API access to video tools (Runway, Kling, ComfyUI), letting you control seed values, scheduler selection (Euler a, DPM++), and latent consistency models. OpenClaw abstracts these settings—great for simplicity, limiting for fine-tuned control.

Q: When do infrastructure costs of free tools exceed OpenClaw pricing?

A: At approximately 150,000 complex queries/month, self-hosted LangChain on cloud infrastructure (compute + storage + observability) approaches OpenClaw’s enterprise tier (~$2,500/month). However, for video workloads with bursty traffic, free tools deployed on serverless platforms (Modal, Replicate) often remain 60-70% cheaper due to pay-per-execution pricing.

Q: Can I migrate existing OpenClaw workflows to open-source alternatives?

A: Partially. Export OpenClaw workflow logic as JSON, then rebuild decision trees in LangGraph or AutoGPT. Tool integrations (APIs, databases) transfer directly. The challenge lies in recreating OpenClaw’s human-in-the-loop UI elements—budget 20-30 hours for complex workflow migrations with 5+ decision nodes.