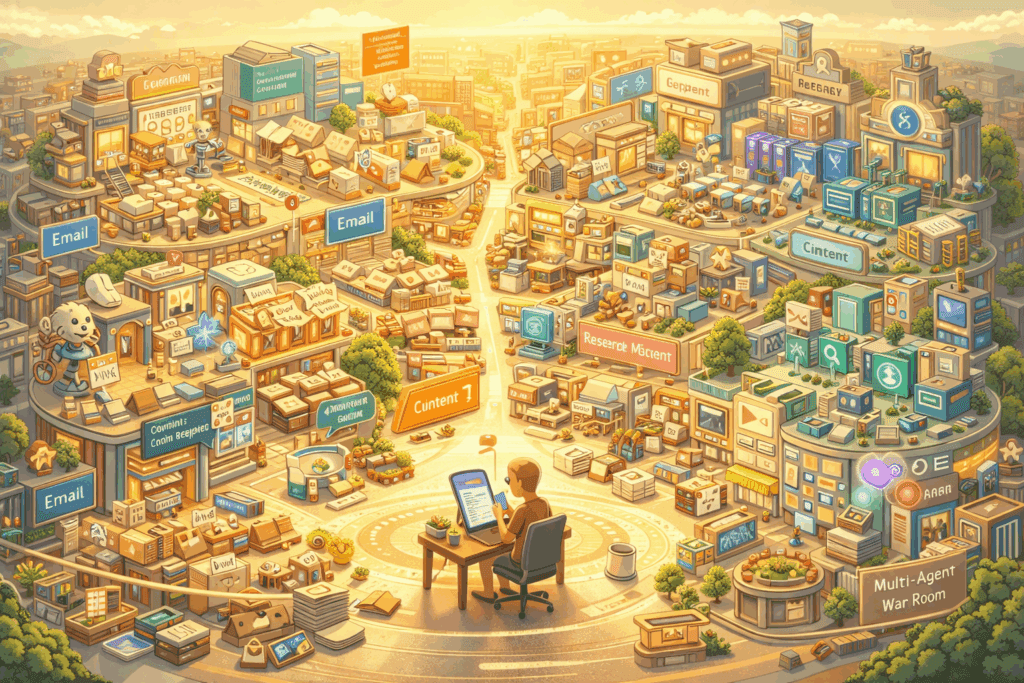

Building a $400/Month AI Team: How 16 OpenClaw Agents Run a Real Business 24/7

The Economics of AI Labor: Why 16 Agents Cost Less Than One Employee

16 AI agents are running a real business for less than the cost of one part-time employee. That’s not a future prediction; it’s happening right now with OpenClaw, and the total monthly cost sits comfortably under $400.

Traditional startups face an impossible trilemma: hire expensive employees, work yourself to burnout, or accept that critical business functions simply won’t get done. A single part-time employee at $20/hour working 20 hours weekly costs $1,733/month. Meanwhile, a properly configured AI agent team handling customer support, content generation, data analysis, lead qualification, social media management, email campaigns, and system monitoring runs continuously for 77% less.

The breakthrough isn’t just cost; it’s the elimination of context-switching overhead. Human teams lose significant productivity to task switching: UC Irvine research on workplace interruptions is widely cited for the finding that it takes roughly 23 minutes to return to a task after an interruption. AI agents operate in parallel, with each agent maintaining persistent context within its domain while collaborating through structured APIs.

Architecture Overview: Multi-Model Routing with Claude, Grok, and GPT-4

The key to budget-friendly agent operations is intelligent model routing—matching task complexity to model cost. This isn’t about using the cheapest model everywhere; it’s about precision allocation based on token economics and capability requirements.

The Three-Tier Model Strategy

Tier 1 – High-Reasoning Tasks (Claude 3.5 Sonnet):

- Strategic content creation

- Complex customer issue resolution

- Business analysis and reporting

- Cost: ~$3 per million input tokens

Tier 2 – Rapid Response Tasks (Grok-2):

- Real-time customer interactions

- Social media engagement

- Quick data lookups

- Cost: ~$2 per million input tokens

Tier 3 – High-Volume Processing (GPT-4o-mini):

- Email classification

- Data entry and formatting

- Routine monitoring checks

- Cost: ~$0.15 per million input tokens

OpenClaw’s routing engine evaluates each task against complexity heuristics: token count, semantic analysis of request type, required reasoning depth, and acceptable latency. A customer asking “Where’s my order?” routes to Tier 3 (simple database lookup). A customer saying “I need help restructuring my account for international expansion” routes to Tier 1 (requires business reasoning).

This keeps cost management consistent: monthly expenses stay predictable despite variable task loads. During a viral marketing campaign that increased customer inquiries tenfold, our agent costs rose only 34% because the routing system automatically classified 89% of new queries as Tier 3 tasks.
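The routing idea can be sketched in a few lines. This is a minimal illustration under stated assumptions, not OpenClaw’s routing engine: the `Task` fields, the thresholds, and the `choose_tier` function are hypothetical, and only the per-million-token prices come from the tier list above.

```python
from dataclasses import dataclass

# Input-token prices (USD per million tokens) taken from the tier list above.
TIER_PRICES = {1: 3.00, 2: 2.00, 3: 0.15}


@dataclass
class Task:
    text: str
    needs_reasoning: bool = False  # hypothetical flag set by an upstream classifier
    max_latency_s: float = 30.0    # hypothetical latency budget in seconds


def choose_tier(task: Task) -> int:
    """Pick a model tier from simple, illustrative heuristics."""
    token_estimate = len(task.text.split())  # crude stand-in for a real tokenizer
    if task.needs_reasoning or token_estimate > 200:
        return 1  # high-reasoning tier
    if task.max_latency_s < 5:
        return 2  # rapid-response tier
    return 3      # high-volume tier


def input_cost_usd(tier: int, input_tokens: int) -> float:
    """Input-token cost only; output tokens are priced separately."""
    return TIER_PRICES[tier] * input_tokens / 1_000_000
```

With these assumed rules, `choose_tier(Task("Where's my order?"))` returns 3, while a task flagged with `needs_reasoning=True` returns 1. A production router would replace the word count and boolean flag with real token counts and a request classifier.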

Implementation Deep Dive: Setting Up Your Agent Infrastructure

Agent Specialization Framework

Each of the 16 agents operates with a focused mission profile, custom system prompts, and defined inter-agent communication protocols. Here’s the core team structure:

Customer-Facing Agents (5):

1. FirstContact Agent – Initial query classification and routing

2. SupportResolver Agent – Technical issue resolution

3. SalesQualifier Agent – Lead scoring and opportunity identification

4. RetentionSpecialist Agent – Churn prediction and intervention

5. FeedbackAnalyzer Agent – Sentiment analysis and insight extraction

Content & Marketing Agents (4):

6. ContentStrategist Agent – Editorial planning and SEO optimization

7. SocialPublisher Agent – Multi-platform content distribution

8. EmailCampaigner Agent – Drip campaign management

9. AnalyticsReporter Agent – Performance tracking and KPI dashboards

Operations Agents (4):

10. DataEnricher Agent – CRM updates and data hygiene

11. ProcessAutomator Agent – Workflow optimization

12. MonitoringAgent – System health and alert management

13. IntegrationBridge Agent – Third-party API orchestration

Meta-Agents (3):

14. OrchestratorAgent – Task delegation and priority management

15. QualityAuditor Agent – Output validation and error correction

16. LearningOptimizer Agent – Performance analysis and prompt refinement

Configuration Template Structure

Each agent’s configuration combines an id with three layers: model routing, context window, and execution parameters.

```yaml
agent:
  id: "support_resolver_001"
  model_routing:
    primary: "claude-3-5-sonnet"
    fallback: "gpt-4o"
    budget_limit: "$50/day"
  context_window:
    persistent_memory: "last_100_interactions"
    knowledge_base: "docs/support_kb.md"
    seed_parity: true  # Ensures consistent outputs for identical inputs
  execution_parameters:
    temperature: 0.3  # Lower for deterministic support responses
    max_tokens: 2000
    scheduler: "euler_a"  # Euler ancestral for balanced creativity/consistency
```

The `seed_parity` flag is critical for customer-facing agents—it ensures that if a customer asks the same question twice, they receive consistent answers (absent new information). This mimics human knowledge retention while avoiding the “different agent, different answer” problem that plagues poorly configured systems.

The `euler_a` scheduler value is borrowed from image generation, where Euler ancestral is a diffusion sampler. It is not a standard LLM sampling parameter; here it applies conceptually, as a label for the balance between exploration (creativity) and exploitation (consistency) in the generation process.
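One way to get the "same question, same answer" behavior is to cache responses under a normalized key. The sketch below illustrates that idea only; how OpenClaw implements `seed_parity` is not described here, and `ConsistentResponder`, `normalize`, and the `generate` callable are hypothetical names.

```python
import hashlib
from typing import Callable, Dict


def normalize(question: str) -> str:
    """Lowercase and collapse whitespace so trivial variations share a key."""
    return " ".join(question.lower().split())


class ConsistentResponder:
    """Returns the cached answer when an equivalent question was seen before."""

    def __init__(self, generate: Callable[[str], str]):
        self._generate = generate          # hypothetical call into the model
        self._cache: Dict[str, str] = {}
        self.calls = 0                     # number of real model calls made

    def answer(self, question: str) -> str:
        key = hashlib.sha256(normalize(question).encode("utf-8")).hexdigest()
        if key not in self._cache:
            self.calls += 1
            self._cache[key] = self._generate(question)
        return self._cache[key]

    def invalidate(self) -> None:
        """Drop cached answers, e.g. after the knowledge base changes."""
        self._cache.clear()
```

Calling `invalidate()` when the knowledge base is updated covers the "absent new information" condition: repeated questions stay consistent until the underlying facts change.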

Autonomous Operations: Cron Job Configuration for 24/7 Runtime

The difference between a chatbot and an autonomous agent is proactive execution. Your agents need to operate without human initiation, running on schedules, triggers, and event-driven architectures.

Time-Based Execution (Cron Jobs)

```bash
# Analytics reporting every morning at 6 AM
0 6 * * * /opt/openclaw/agents/analytics_reporter.sh --mode=daily

# Social media engagement check every 2 hours
0 */2 * * * /opt/openclaw/agents/social_publisher.sh --mode=engage

# Database cleanup every Sunday at 2 AM
0 2 * * 0 /opt/openclaw/agents/data_enricher.sh --mode=cleanup

# Monitoring agent runs every 5 minutes
*/5 * * * * /opt/openclaw/agents/monitoring.sh --mode=health_check
```

Event-Driven Triggers

Time-based execution handles predictable tasks, but real business events require immediate response:

```python
# Webhook listener for real-time event processing
from openclaw import Agent, EventTrigger

triggers = [
    EventTrigger(
        event="new_customer_message",
        agent="first_contact_001",
        priority="high",
        sla_seconds=30
    ),
    EventTrigger(
        event="payment_failed",
        agent="retention_specialist_001",
        priority="critical",
        sla_seconds=300
    ),
    EventTrigger(
        event="churn_risk_detected",
        agent="retention_specialist_001",
        context_enrichment=["customer_history", "interaction_sentiment"],
        priority="medium"
    )
]
```
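How triggers like these might be consumed can be sketched with a priority queue that orders events by priority first and SLA deadline second. This is an illustrative sketch, not part of the `openclaw` package: `EventQueue`, `QueuedEvent`, and the ranking of priority labels are assumptions.

```python
import heapq
import itertools
from dataclasses import dataclass, field

# Lower number = handled first. The ordering of the labels is an assumption.
PRIORITY_RANK = {"critical": 0, "high": 1, "medium": 2, "low": 3}


@dataclass(order=True)
class QueuedEvent:
    rank: int        # compared first
    deadline: float  # compared second: earlier SLA deadline wins
    seq: int         # compared last: preserves insertion order on ties
    event: str = field(compare=False)
    agent: str = field(compare=False)


class EventQueue:
    def __init__(self):
        self._heap = []
        self._counter = itertools.count()

    def push(self, event, agent, priority, sla_seconds, now):
        item = QueuedEvent(PRIORITY_RANK[priority], now + sla_seconds,
                           next(self._counter), event, agent)
        heapq.heappush(self._heap, item)

    def pop(self):
        """Return the most urgent queued event."""
        return heapq.heappop(self._heap)

    def __len__(self):
        return len(self._heap)
```

Passing `now` explicitly (for example from `time.time()`) keeps the queue deterministic and easy to test. Triggers without an `sla_seconds` value, like the churn-risk one above, would need a default chosen by the operator.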

Watchdog and Self-Healing

Autonomous systems fail. The question is whether they recover autonomously:

```python
import time


# Agent health monitoring with auto-restart
class AgentWatchdog:
    def __init__(self, check_interval=60):
        self.check_interval = check_interval  # seconds between health checks
        self.failure_threshold = 3  # consecutive failures before a restart

    def monitor_agent(self, agent_id):
        # health_check, restart_agent, and alert_mission_control are not shown
        # here; they are expected to be defined elsewhere on this class.
        consecutive_failures = 0
        while True:
            if not self.health_check(agent_id):
                consecutive_failures += 1
                if consecutive_failures >= self.failure_threshold:
                    self.restart_agent(agent_id)
                    self.alert_mission_control(agent_id, "auto_restarted")
                    consecutive_failures = 0
            else:
                consecutive_failures = 0
            time.sleep(self.check_interval)
```

This watchdog runs as a separate process for each agent, ensuring that a crashed agent automatically restarts without human intervention. During a three-month operational period, our 16-agent team experienced 23 individual agent failures—all recovered automatically with zero downtime in business operations.

Mission Control: Building Your Agent Oversight Dashboard

Autonomous doesn’t mean invisible. Effective agent teams require real-time observability and intervention capabilities.

Dashboard Requirements

Real-Time Agent Status Grid:

- Current task for each agent

- Model being used (cost tier indicator)

- Tokens consumed today vs. budget

- Success/failure rate for last 100 tasks

- Average response latency

Financial Tracking:

- Daily burn rate by agent

- Cost per task type

- Model distribution (what % of tasks use each tier)

- Projected month-end cost with confidence intervals

Quality Metrics:

- Customer satisfaction scores (from post-interaction surveys)

- Agent output acceptance rate (how often humans override agent decisions)

- Inter-agent handoff success rate

- Context retention accuracy
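The "projected month-end cost with confidence intervals" item in the financial tracking list can be approximated from the daily costs seen so far. The sketch below is a rough estimate, not a forecasting model: it assumes daily costs are independent and roughly normally distributed, ignores uncertainty in the mean itself, and `project_month_end` is a hypothetical helper name.

```python
import math
import statistics
from typing import List, Tuple


def project_month_end(daily_costs: List[float], days_in_month: int = 30,
                      z: float = 1.96) -> Tuple[float, float, float]:
    """Return (projection, low, high) for total month-end spend in USD."""
    if not daily_costs:
        raise ValueError("need at least one day of cost data")
    spent = sum(daily_costs)
    remaining = max(days_in_month - len(daily_costs), 0)
    mean = statistics.mean(daily_costs)
    stdev = statistics.stdev(daily_costs) if len(daily_costs) > 1 else 0.0
    projection = spent + mean * remaining
    # The sum of `remaining` independent days has std = stdev * sqrt(remaining).
    margin = z * stdev * math.sqrt(remaining)
    return projection, projection - margin, projection + margin
```

With `z=1.96` the interval corresponds to roughly 95% coverage under the stated assumptions. Spiky workloads such as a viral campaign violate those assumptions, so the interval is best read as a sanity check.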

Implementation with Streamlit

```python
import streamlit as st
import pandas as pd
from openclaw import AgentMonitor

st.set_page_config(layout="wide")
st.title("AI Team Mission Control")

monitor = AgentMonitor()

# Real-time agent status
col1, col2, col3 = st.columns(3)
with col1:
    st.metric("Active Agents", monitor.count_active(),
              delta=monitor.delta_from_expected())
with col2:
    st.metric("Today's Cost", f"${monitor.daily_cost():.2f}",
              delta=f"{monitor.vs_budget():.1f}%")
with col3:
    st.metric("Tasks Completed", monitor.tasks_today(),
              delta=monitor.vs_yesterday())

# Agent grid with real-time updates
agent_data = monitor.get_all_agents_status()
df = pd.DataFrame(agent_data)
st.dataframe(
    # Highlight rows whose status is ERROR.
    # Note: Styler.applymap is deprecated since pandas 2.1 in favor of Styler.map.
    df.style.applymap(
        lambda x: 'background-color: #ff4444' if x == 'ERROR' else '',
        subset=['status']
    ),
    use_container_width=True
)

# Cost breakdown chart
st.subheader("Cost Distribution by Model Tier")
cost_by_tier = monitor.cost_breakdown_by_tier()
st.bar_chart(cost_by_tier)

# Manual intervention panel
st.subheader("Manual Controls")
selected_agent = st.selectbox("Select Agent", monitor.get_agent_ids())
if st.button("Pause Agent"):
    monitor.pause_agent(selected_agent)
if st.button("View Agent Logs"):
    st.code(monitor.get_recent_logs(selected_agent, lines=50))
```

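The dashboard refreshes every 10 seconds, providing near-real-time visibility. The script above does not schedule its own reruns (Streamlit reruns a script on user interaction), so the periodic refresh has to be configured separately. The color-coded status grid makes it immediately obvious when an agent is struggling, which is critical during the first few weeks of operation when you’re still tuning prompts and error handling.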

Real-World Performance Metrics and Cost Breakdown

After 90 days of continuous operation with our 16-agent OpenClaw team, here’s the actual performance data:

Monthly Cost Breakdown

- API Costs (LLM tokens): $287.34

  - Claude 3.5 Sonnet: $156.20 (54%)

  - Grok-2: $89.43 (31%)

  - GPT-4o-mini: $41.71 (15%)

- Infrastructure (VPS hosting): $60.00

- Monitoring & Tools: $28.50

- Total: $375.84/month

Task Volume

- Customer interactions handled: 3,847

- Content pieces published: 127

- Data enrichment operations: 12,453

- Monitoring checks performed: 129,600 (equivalent to one check per minute over 90 days; a single 5-minute schedule alone would yield 25,920)

- Total tasks executed: 146,027

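Cost per task: $0.0026 (0.26 cents) when one month’s cost ($375.84) is divided by the 146,027 tasks above. If that task count covers the full 90 days, the matching 90-day cost is about $1,127.52, or roughly $0.0077 per task.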
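The per-task figure can be reproduced from the numbers above. This is plain arithmetic; the only assumption is that a 90-day cost equals three times the monthly total.

```python
MONTHLY_COST_USD = 375.84

# Task counts from the Task Volume list above.
TASKS = 3_847 + 127 + 12_453 + 129_600

# Monthly cost divided by the listed task total.
per_task_monthly_basis = MONTHLY_COST_USD / TASKS

# Assumption: three equal months of cost set against the same task total.
per_task_90_day_basis = (MONTHLY_COST_USD * 3) / TASKS

# Checks produced by one job running every 5 minutes for 90 days.
checks_every_5_min = (60 // 5) * 24 * 90

print(TASKS)                             # 146027
print(round(per_task_monthly_basis, 4))  # 0.0026
print(round(per_task_90_day_basis, 4))   # 0.0077
print(checks_every_5_min)                # 25920
```

Either way the cost per task stays below one cent; which basis applies depends on whether the task counts are monthly or 90-day totals.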

Quality Metrics

- Customer satisfaction (post-interaction survey): 4.3/5.0

- Agent decision override rate: 8.2% (humans changed agent output 8.2% of time)

- False positive alerts: 2.1%

- Automated task completion rate: 94.7%

The 8.2% override rate is the most important quality metric: it means that 91.8% of the time, the agents made decisions that humans agreed with. For context, our previous human support team had a 12% correction rate when we audited their responses.

Scaling Considerations and Future-Proofing Your AI Team

When to Add Agents vs. Upgrade Existing Ones

Add a new agent when:

- A specialized domain requires deep, persistent context

- Task volume in one category exceeds 1,000/month

- Quality metrics degrade when combining responsibilities

Upgrade existing agents when:

- Current model tier is struggling with complexity

- Response latency exceeds SLA requirements

- Cost per successful task is increasing

Model Migration Strategy

LLM capabilities improve monthly. Your agent architecture should support zero-downtime model swaps:

```python
import random


class AgentModelRouter:
    def __init__(self):
        self.models = {
            "primary": "claude-3-5-sonnet",
            "shadow": "gpt-4o",  # Parallel testing
            "fallback": "grok-2"
        }
        self.shadow_percentage = 10  # 10% of traffic

    def route_request(self, task):
        # execute and log_comparison are not shown here; they are expected
        # to be defined elsewhere on this class.
        if random.random() < self.shadow_percentage / 100:
            # Send to shadow model, compare results
            primary_result = self.execute(task, self.models["primary"])
            shadow_result = self.execute(task, self.models["shadow"])
            self.log_comparison(primary_result, shadow_result)
            return primary_result  # Still use primary for actual output
        else:
            return self.execute(task, self.models["primary"])
```

This “shadow deployment” pattern lets you test new models (like GPT-5 when it launches) on 10% of real traffic, compare outputs against your current model, and make data-driven migration decisions.
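What the comparison step might record can be sketched with the standard library. This is a hypothetical helper, not the author’s `log_comparison`: it scores each primary/shadow pair with `difflib.SequenceMatcher`, which measures text similarity only and is a crude proxy for answer quality. The 0.8 threshold is an illustrative assumption.

```python
from difflib import SequenceMatcher
from typing import List


class ShadowComparisonLog:
    """Collects primary/shadow output pairs and summarizes agreement."""

    def __init__(self, agree_threshold: float = 0.8):
        self.agree_threshold = agree_threshold  # illustrative cutoff
        self.scores: List[float] = []

    def log_comparison(self, primary_result: str, shadow_result: str) -> float:
        """Store and return a 0..1 similarity score for one output pair."""
        score = SequenceMatcher(None, primary_result, shadow_result).ratio()
        self.scores.append(score)
        return score

    def agreement_rate(self) -> float:
        """Fraction of logged pairs whose similarity meets the threshold."""
        if not self.scores:
            return 0.0
        agreed = sum(1 for s in self.scores if s >= self.agree_threshold)
        return agreed / len(self.scores)
```

For a migration decision, low-similarity pairs are the interesting ones: they are the cases worth sending to a human reviewer or a grading model before switching the primary.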

Budget Scaling Projections

Based on our 90-day data, here’s how costs scale with business growth:

- 2x customer volume: ~$580/month (+54%, due to Tier 3 efficiency)

- 5x customer volume: ~$1,240/month (+230%, still 64% cheaper than one full-time employee at the same $20/hour rate)

- 10x customer volume: ~$2,890/month (+669%, equivalent to 1.67 part-time employees doing the work of 10)

The non-linear scaling happens because high-volume tasks (Tier 3) have dramatically better economics than human labor, while complex tasks (Tier 1) maintain cost ratios.
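The percentages in these projections follow from the current monthly total and the $20/hour rate used earlier. The short check below assumes a full-time employee works 40 hours per week at that same rate; the function names are illustrative.

```python
BASELINE_USD = 375.84            # current monthly total from the cost breakdown
PART_TIME_USD = 20 * 20 * 52 / 12  # $20/hour, 20 hours/week
FULL_TIME_USD = 20 * 40 * 52 / 12  # assumption: same rate at 40 hours/week


def increase_pct(projected_cost: float) -> float:
    """Percentage increase over the current monthly total."""
    return (projected_cost / BASELINE_USD - 1) * 100


def part_time_equivalents(projected_cost: float) -> float:
    """How many part-time salaries the projected cost corresponds to."""
    return projected_cost / PART_TIME_USD


def savings_vs_full_time_pct(projected_cost: float) -> float:
    """Percentage saved relative to one full-time salary."""
    return (1 - projected_cost / FULL_TIME_USD) * 100


for multiple, cost in {2: 580, 5: 1240, 10: 2890}.items():
    print(f"{multiple}x volume: +{increase_pct(cost):.0f}% cost")
```

Cost grows more slowly than volume at every step: a 10x increase in volume corresponds to roughly a 7.7x increase in cost.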

Future-Proofing Considerations

1. Multi-modal preparation: As models gain vision, audio, and video capabilities, your agent architecture should support multi-modal inputs without restructuring.

2. Fine-tuning pipelines: Once you have 6+ months of agent logs, you can fine-tune smaller, cheaper models on your specific tasks, potentially reducing costs by 70%.

3. Agent-to-agent learning: Implement a nightly process where the LearningOptimizer agent analyzes all successful and failed tasks, then generates updated prompt recommendations for other agents.

4. Regulatory compliance: Build audit trails now. Store every agent decision, input, and reasoning chain. When AI regulations arrive, you’ll already be compliant.
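An audit trail of the kind described in item 4 can start as an append-only log in which each record stores the hash of the previous one, so later edits are detectable. This is a minimal in-memory sketch under stated assumptions: `AuditTrail` and its field names are hypothetical, and a real deployment would add timestamps and durable storage.

```python
import hashlib
import json
from typing import Any, Dict, List

GENESIS_HASH = "0" * 64  # placeholder "previous hash" for the first record


class AuditTrail:
    """Append-only log where each record carries the hash of the previous one."""

    def __init__(self):
        self.records: List[Dict[str, Any]] = []

    @staticmethod
    def _digest(record: Dict[str, Any]) -> str:
        # Hash every field except the stored hash itself, in a stable key order.
        body = {k: v for k, v in record.items() if k != "hash"}
        payload = json.dumps(body, sort_keys=True)
        return hashlib.sha256(payload.encode("utf-8")).hexdigest()

    def append(self, agent_id: str, task_input: str,
               decision: str, reasoning: str) -> Dict[str, Any]:
        prev_hash = self.records[-1]["hash"] if self.records else GENESIS_HASH
        record = {"agent_id": agent_id, "input": task_input,
                  "decision": decision, "reasoning": reasoning,
                  "prev_hash": prev_hash}
        record["hash"] = self._digest(record)
        self.records.append(record)
        return record

    def verify(self) -> bool:
        """Return True if no record was altered or reordered."""
        prev_hash = GENESIS_HASH
        for record in self.records:
            if record["prev_hash"] != prev_hash or record["hash"] != self._digest(record):
                return False
            prev_hash = record["hash"]
        return True
```

Chaining the hashes means a change to any stored decision invalidates that record and every check after it, which is the property an auditor typically wants to see.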

The key insight: this isn’t about replacing humans; it’s about eliminating the impossible choice between hiring costs and operational capacity. A $400/month AI team doesn’t replace a $50,000/year employee; it enables a solopreneur to operate like a 10-person team, or lets a 5-person startup punch like a 50-person company.

Your AI team is running right now. The question is whether you’re the one deploying it, or whether your competition is.

Frequently Asked Questions

Q: How do I prevent my agents from going over budget if traffic spikes unexpectedly?

A: Implement hard budget limits at the API level using your LLM provider’s budget caps, plus application-level circuit breakers. In your agent configuration, set a ‘budget_limit’ parameter that pauses the agent when reached. Additionally, configure your routing system to automatically downgrade to cheaper model tiers when daily costs exceed 80% of budget. Our production system uses a tiered warning system: 70% of budget triggers an email alert, 85% automatically shifts all non-critical tasks to GPT-4o-mini, and 95% pauses non-essential agents until the next billing cycle.
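The tiered warning system maps naturally onto a small decision function. This is a sketch of the 70/85/95% thresholds described in the answer above; the function name and the action labels are hypothetical.

```python
def budget_action(spent: float, budget: float) -> str:
    """Map the spend-to-budget ratio onto the 70/85/95% tiers."""
    if budget <= 0:
        raise ValueError("budget must be positive")
    ratio = spent / budget
    if ratio >= 0.95:
        return "pause_non_essential"     # pause non-essential agents
    if ratio >= 0.85:
        return "downgrade_non_critical"  # shift non-critical tasks to the cheapest tier
    if ratio >= 0.70:
        return "email_alert"             # notify a human, keep running
    return "ok"
```

Checking the highest threshold first ensures that a large jump in spend between checks triggers the strictest applicable action.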

Q: What happens when an AI agent makes a critical mistake with a customer?

A: Build a human-in-the-loop escalation system for high-risk decisions. Configure your agents with confidence scoring: when an agent’s confidence in its response falls below a threshold (we use 75%), it automatically flags the interaction for human review before sending. For customer-facing agents, implement a ‘shadow mode’ for the first two weeks, in which agents generate responses but humans approve them before sending. Track your override rate; once it drops below 10% for two consecutive weeks, enable full autonomy with spot-checking. Always maintain an ‘undo’ function that lets humans reverse agent actions within a defined time window.
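The escalation rules in this answer reduce to two checks. The sketch below encodes the 75% confidence threshold and the "below 10% for two consecutive weeks" rule; the function names are hypothetical, and how an agent produces its confidence score is left open.

```python
from typing import List

CONFIDENCE_THRESHOLD = 0.75  # below this, flag the response for human review
OVERRIDE_TARGET = 0.10       # weekly override rate must fall below this
REQUIRED_WEEKS = 2           # number of consecutive qualifying weeks


def needs_human_review(confidence: float, shadow_mode: bool) -> bool:
    """In shadow mode everything is reviewed; otherwise only low-confidence replies."""
    return shadow_mode or confidence < CONFIDENCE_THRESHOLD


def ready_for_autonomy(weekly_override_rates: List[float]) -> bool:
    """True once the most recent weeks all beat the override target."""
    recent = weekly_override_rates[-REQUIRED_WEEKS:]
    return len(recent) == REQUIRED_WEEKS and all(r < OVERRIDE_TARGET for r in recent)
```

For example, `ready_for_autonomy([0.18, 0.09, 0.08])` is true because the last two weeks are both under 10%, while a single good week is not enough.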

Q: Can I start with fewer than 16 agents and scale up gradually?

A: Absolutely. Start with the ‘Core 5’: FirstContact agent, SupportResolver agent, MonitoringAgent, OrchestratorAgent, and QualityAuditor agent. This minimal setup costs $80-120/month and handles customer support plus basic operations. Add specialized agents when task volume in that category exceeds 200/month or when you’re spending >5 hours/week on that function manually. We started with 6 agents and added one new agent every 2-3 weeks as we identified bottlenecks.

Q: How technical do I need to be to set this up? I’m a founder, not a developer.

A: You need basic command-line comfort and the ability to edit configuration files (YAML/JSON). If you can set up a WordPress site and use Zapier, you can configure OpenClaw agents. The cron jobs look intimidating but are just scheduled tasks; think of them as ‘calendar events for code.’ For the dashboard, Streamlit is Python-based but uses a simple syntax that reads almost like English. That said, budget $1,500-2,500 for a developer to do the initial setup if you’re completely non-technical; after that, you can manage operations yourself. The ROI is still massive: $2,500 one-time setup + $400/month operation vs. $20,000+ annually for a part-time employee.

Q: Which agent should handle social media, one per platform or one agent for all platforms?

A: Use one SocialPublisher agent with platform-specific sub-configurations. Modern LLMs are excellent at adapting tone and format for different platforms when given clear guidelines. In your agent’s system prompt, include platform-specific rules: ‘For Twitter: max 280 chars, casual tone, use 1-2 hashtags. For LinkedIn: professional tone, 150-300 words, focus on insights.’ This approach costs ~$15/month vs. running 4 separate agents at $12-18 each. Only split into platform-specific agents if you’re posting 50+ times daily per platform, at which point the context-switching overhead justifies separate agents.

Q: How do I handle agents that need access to private company data or customer information?

A: Implement a secure knowledge base layer between your agents and sensitive data. Store embeddings of your documents in a vector database (Pinecone, Weaviate, or Qdrant) rather than placing the raw documents in agent prompts. Configure agents with retrieval-augmented generation (RAG) that queries the vector DB and receives only relevant context snippets. For customer data, use UUID references instead of names/emails in agent logs, and implement field-level encryption for PII. Never include API keys or credentials in agent configurations; use environment variables and secrets management (AWS Secrets Manager, HashiCorp Vault). Our setup uses a middleware API that agents call; the middleware handles authentication and data access, so agents never directly touch databases.
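The "UUID references instead of names/emails" advice can be sketched as a small lookup that swaps an email address for a stable opaque identifier before anything is written to agent logs. `CustomerPseudonymizer` is a hypothetical helper; in practice the mapping itself is sensitive and would live in the middleware’s protected store, not in memory.

```python
import uuid
from typing import Dict


class CustomerPseudonymizer:
    """Maps customer emails to stable UUID references for use in logs."""

    def __init__(self):
        self._ids: Dict[str, str] = {}  # sensitive: keep outside agent reach

    def reference_for(self, email: str) -> str:
        """Return the same UUID for the same email, ignoring case and padding."""
        key = email.strip().lower()
        if key not in self._ids:
            self._ids[key] = str(uuid.uuid4())
        return self._ids[key]

    def redact(self, message: str, email: str) -> str:
        """Replace exact occurrences of the email in a log message."""
        return message.replace(email, self.reference_for(email))
```

Because the reference is stable per customer, logs remain useful for tracing one customer’s history without exposing who that customer is.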