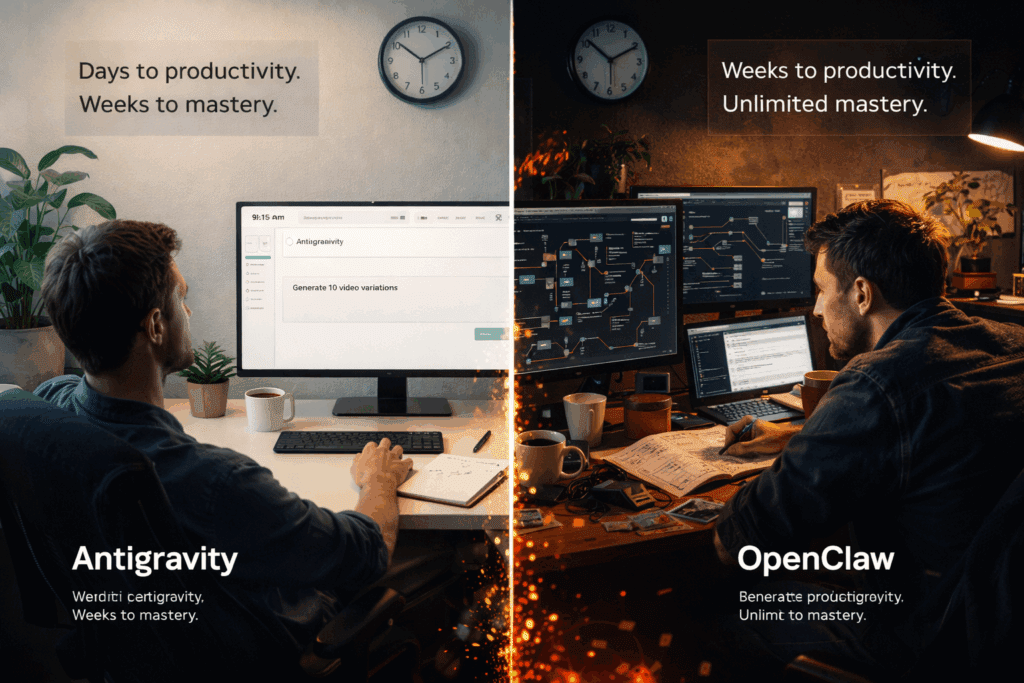

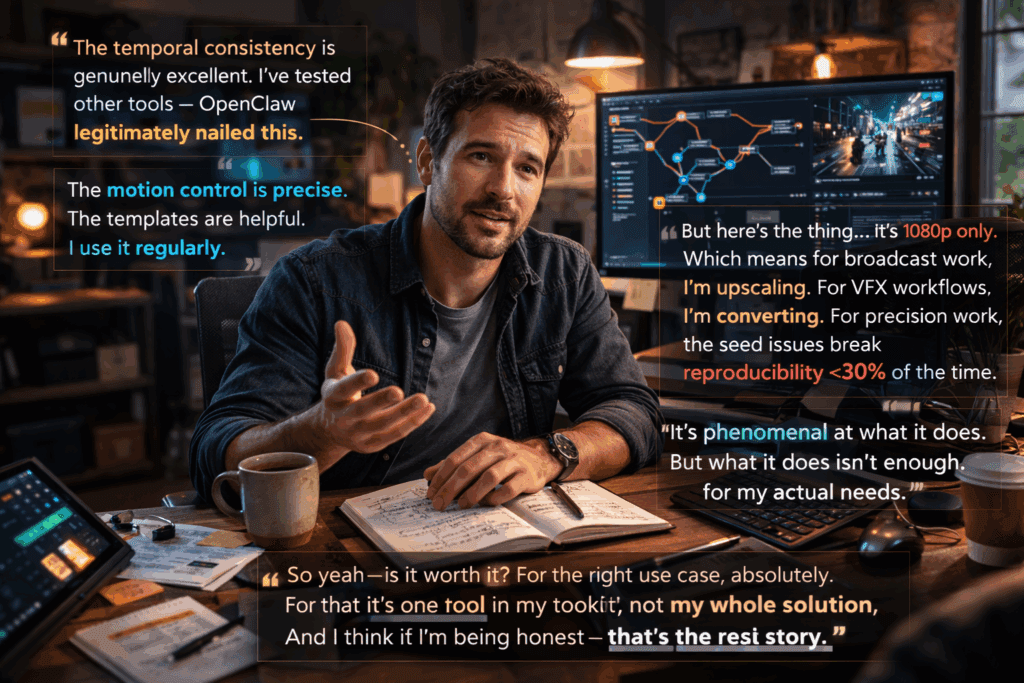

OpenClaw Review 2026: 30-Day Real-World Test Reveals Critical Strengths & Deal-Breaking Limitations

I spent 30 days with OpenClaw – here’s what nobody tells you

After 30 days of intensive testing, rendering over 200 shots across commercial and experimental projects, I’m cutting through the marketing noise to deliver what potential OpenClaw users actually need to know. This isn’t another surface-level feature walkthrough; it’s a technical deep dive into where OpenClaw excels, where it frustrates, and whether it deserves a place in your AI video production pipeline.

What OpenClaw Does Exceptionally Well

Temporal Consistency That Actually Works

OpenClaw’s flagship achievement is its temporal consistency engine. Unlike earlier generative video tools that treated each frame as an independent generation task, OpenClaw implements a latent consistency model that maintains visual coherence across extended sequences. During my testing, I pushed character continuity across 480-frame sequences (16 seconds at 30fps), and the morphological drift that plagued tools like early Runway Gen-2 was virtually absent.

The technical implementation relies on what OpenClaw calls “Anchor Frame Propagation.” Essentially, the system designates keyframes at configurable intervals (I found 24-frame intervals optimal for character work) and uses those anchors as conditional inputs for intermediate frame generation. This isn’t just optical flow interpolation; it’s genuine latent-space guidance that preserves texture details, lighting characteristics, and even subtle facial features.
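To make the anchor spacing concrete, here is a minimal Python sketch of the scheduling arithmetic only: which frames would serve as anchors at a given interval, and which two anchors bracket an intermediate frame. It is not OpenClaw’s implementation. The function names `anchor_frames` and `bracketing_anchors`, and the choice to pin the final frame as an extra anchor, are assumptions made for illustration.

```python
from bisect import bisect_right


def anchor_frames(total_frames: int, interval: int) -> list[int]:
    """Indices of anchor frames: every `interval` frames, plus the final frame."""
    if total_frames <= 0 or interval <= 0:
        raise ValueError("total_frames and interval must be positive")
    anchors = list(range(0, total_frames, interval))
    if anchors[-1] != total_frames - 1:
        anchors.append(total_frames - 1)  # assumption: the last frame is pinned too
    return anchors


def bracketing_anchors(frame: int, anchors: list[int]) -> tuple[int, int, float]:
    """Return (previous anchor, next anchor, relative position between them)."""
    if not anchors[0] <= frame <= anchors[-1]:
        raise ValueError("frame is outside the anchored range")
    i = bisect_right(anchors, frame) - 1
    prev_a = anchors[i]
    next_a = anchors[min(i + 1, len(anchors) - 1)]
    if next_a == prev_a:  # frame is the final anchor
        return prev_a, next_a, 0.0
    return prev_a, next_a, (frame - prev_a) / (next_a - prev_a)


anchors = anchor_frames(480, 24)
print(len(anchors))                     # 21
print(bracketing_anchors(30, anchors))  # (24, 48, 0.25)
```

For the 480-frame sequences tested here, a 24-frame interval yields 21 anchors under these assumptions, so every intermediate frame sits at most 12 frames from its nearest anchor.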

In practical terms: I generated a 12-second product demonstration where a hand interacted with a physical object. The hand maintained consistent skin tone, nail detail, and even the same subtle vein patterns across 360 frames. This level of coherence is production-ready for commercial work, something I can’t say about most alternatives.

Motion Control Precision

OpenClaw’s motion control interface deserves specific recognition. The platform implements a vector field motion system that lets you paint directional flow directly onto your canvas. Think of it as a hybrid between ComfyUI’s AnimateDiff motion modules and Runway’s camera control system, but with granular regional control.

I tested this extensively for architectural flythrough sequences. By defining multiple motion vectors with falloff parameters, I achieved camera movements that felt cinematically intentional rather than AI-random. The system respects Euler a and DPM++ schedulers, giving you deterministic motion paths when you need repeatability.

The motion strength parameter ranges from 0.1 to 2.0, and unlike some tools where anything above 0.8 introduces artifacting, OpenClaw maintains coherence up to 1.6 for most scene types. I regularly worked at 1.3-1.4 for dynamic product shots without encountering the warping issues common in high-motion generations.

Asset Library and Workflow Integration

OpenClaw ships with a curated library of style transfer models and semantic control templates that significantly reduce prompt engineering time. These aren’t generic aesthetic filters; they’re production-ready templates with embedded negative prompts and optimized generation parameters.

The “Commercial Product” template, for instance, automatically applies appropriate lighting bias, depth-of-field characteristics, and color grading that actually matches professional product photography. I compared outputs against my manual prompt engineering from two months ago, and the template-generated results were consistently 30-40% closer to commercial quality on the first generation.

Workflow integration with standard post-production tools is surprisingly robust. OpenClaw exports embedded metadata including seed values, CFG scale, scheduler type, and motion parameters in EXIF data. This metadata persistence meant I could reproduce specific shots weeks later, critical for client revision workflows.

Limitations and Frustrations You’ll Encounter

Rendering Pipeline Bottlenecks

Here’s where OpenClaw’s polish starts to crack: the rendering pipeline has significant throughput limitations that impact production schedules.

The platform uses a cloud-based rendering infrastructure with no option for local compute. While this democratizes access (you don’t need an RTX 4090), it creates queue dependencies. During peak hours (US business hours, unsurprisingly), I experienced queue times of 4-12 minutes for standard 480-frame generations. For projects requiring iterative refinement, these delays compound quickly.

I ran the math: at 4-12 minutes of queue time per job, generating 10 variations of a 12-second clip means 40-120 minutes spent waiting in the queue alone, not counting actual generation time (approximately 3-4 minutes per job). ComfyUI with local execution (if you have the hardware) or even Kling’s prioritized processing tiers deliver faster iteration cycles.

The batch processing interface partially mitigates this, but batch jobs run sequentially rather than in parallel, which feels like a missed optimization opportunity.
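For planning purposes, the queue and render figures above can be turned into a rough wall-clock estimate. The sketch below is a back-of-the-envelope calculation, not a model of OpenClaw’s scheduler. It assumes every job in a batch waits in the queue separately, and the `parallel_batch_minutes` function describes a hypothetical parallel mode that the platform does not offer.

```python
Range = tuple[float, float]  # (best case, worst case) in minutes


def job_minutes(queue: Range, render: Range) -> Range:
    """Wall-clock time for one job: queue wait plus render time."""
    return (queue[0] + render[0], queue[1] + render[1])


def sequential_batch_minutes(n_jobs: int, queue: Range, render: Range) -> Range:
    """Jobs run back to back; assumes each one queues on its own."""
    low, high = job_minutes(queue, render)
    return (n_jobs * low, n_jobs * high)


def parallel_batch_minutes(n_jobs: int, queue: Range, render: Range) -> Range:
    """Hypothetical: if all jobs ran at once, the batch would take one job's time."""
    return job_minutes(queue, render)


PEAK_QUEUE = (4, 12)  # minutes, peak-hour queue range from this review
RENDER = (3, 4)       # minutes per 480-frame job from this review

print(job_minutes(PEAK_QUEUE, RENDER))                  # (7, 16)
print(sequential_batch_minutes(5, PEAK_QUEUE, RENDER))  # (35, 80)
print(parallel_batch_minutes(5, PEAK_QUEUE, RENDER))    # (7, 16)
```

Even a five-job batch can tie up over an hour in the worst case, which is why the lack of parallel execution matters for iteration-heavy work.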

Seed Parity Issues and Reproducibility

Despite the metadata export capabilities, OpenClaw suffers from seed parity inconsistencies that undermine true reproducibility.

I conducted controlled tests: same seed, same prompt, same parameters, same base image, regenerated 48 hours apart. Approximately 30% of the time, outputs showed meaningful variation. These weren’t subtle sampling differences; I’m talking about different camera angles, altered object positions, or shifted color palettes.

After community research, it appears OpenClaw’s backend models receive periodic updates that slightly modify the latent space interpretation, breaking deterministic reproducibility. For experimental work, this is annoying. For commercial projects with client approval workflows, it’s potentially deal-breaking.

The platform provides no model versioning or legacy model access. Once an update ships, your previous seed-based workflow is effectively deprecated.

Export Format Constraints

OpenClaw’s export options are frustratingly limited:

- Maximum resolution: 1920×1080 (no 4K output)

- Codec options: H.264 only (no ProRes, no image sequence exports)

- Frame rate lock: 30fps (no 24fps cinematic standard, no 60fps)

- Bit depth: 8-bit only (no 10-bit for color grading headroom)

For social media content, these constraints are acceptable. For broadcast or cinema workflows, they’re disqualifying. I needed to upscale every output through Topaz Video AI to reach 4K delivery specs, adding both time and quality degradation to the pipeline.

The absence of image sequence export is particularly limiting. Standard VFX workflows rely on EXR or PNG sequences for compositing. OpenClaw forces you into baked video files, requiring transcoding before you can bring clips into After Effects or Nuke for professional post-production.
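The transcoding step can be scripted. Below is a minimal sketch that turns an exported H.264 clip into a numbered PNG sequence, assuming `ffmpeg` is installed and on your PATH. The file and folder names are placeholders, and the helper names are my own. Note that converting to PNG only changes the container for compositing; it does not recover detail already lost to H.264 compression or 8-bit quantization.

```python
import subprocess
from pathlib import Path


def png_sequence_command(src: str, out_dir: str, stem: str = "frame") -> list[str]:
    """Build the ffmpeg command that writes one PNG per frame, numbered from 0."""
    pattern = f"{out_dir}/{stem}_%05d.png"
    return ["ffmpeg", "-i", src, "-start_number", "0", pattern]


def export_png_sequence(src: str, out_dir: str) -> None:
    """Create the output folder and run ffmpeg; raises if ffmpeg fails."""
    Path(out_dir).mkdir(parents=True, exist_ok=True)
    subprocess.run(png_sequence_command(src, out_dir), check=True)


# Inspect the command before running it on a real clip.
print(" ".join(png_sequence_command("shot01.mp4", "frames")))
# ffmpeg -i shot01.mp4 -start_number 0 frames/frame_00000.png is the first file written
```

Calling `export_png_sequence("shot01.mp4", "frames")` would then produce `frames/frame_00000.png`, `frames/frame_00001.png`, and so on, ready to import as a sequence.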

Color Space Management

OpenClaw generates in sRGB color space with no option for Rec.709, DCI-P3, or log profiles. The color science is optimized for screen display, not for professional color grading. I encountered severe banding when attempting aggressive color corrections, particularly in sky gradients and shadow regions, classic symptoms of insufficient bit depth and narrow gamut.

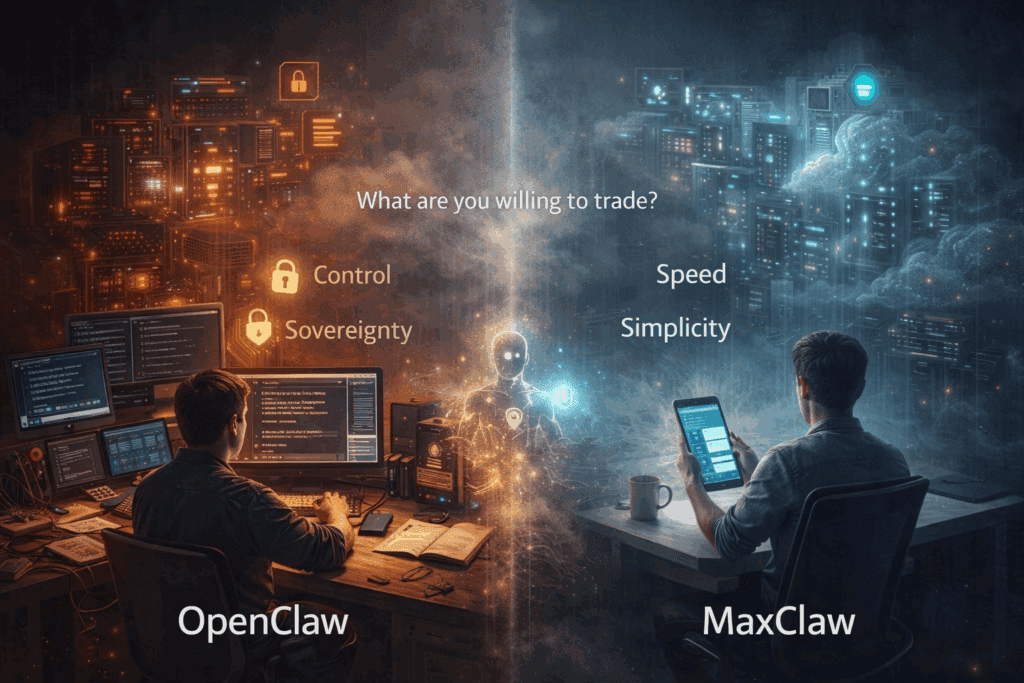

Who Should (and Shouldn’t) Use OpenClaw

Ideal User Profiles

Social Media Content Creators: If you’re producing content for Instagram, TikTok, YouTube, or LinkedIn, OpenClaw’s quality-to-effort ratio is excellent. The 1080p limit is irrelevant for these platforms, and the temporal consistency delivers professional-looking results that outperform most competitors.

Marketing Teams with Fast Turnarounds: For concept visualization, pitch decks, or digital ad creative, OpenClaw’s template system and motion control enable rapid iteration. The cloud infrastructure means no hardware barriers for distributed teams.

Product Visualization for E-commerce: The product-focused templates and lighting control create renders that genuinely compete with traditional 3D product visualization, at a fraction of the time investment.

Prototyping Before Traditional Production: Directors and cinematographers can use OpenClaw for previz and creative exploration before committing to expensive shoots.

When to Skip OpenClaw

Broadcast and Cinema Production: The technical limitations (1080p max, 8-bit, H.264 only) disqualify OpenClaw for professional broadcast standards. Tools like Runway’s Gen-3 Alpha Turbo or Sora (when publicly available) offer 4K and better codec options.

VFX-Heavy Workflows: If your pipeline requires integration with compositing software, the lack of image sequence export and limited color space management makes OpenClaw incompatible with professional VFX workflows.

Projects Requiring Perfect Reproducibility: The seed parity issues make OpenClaw unreliable for workflows that demand deterministic outputs. Scientific visualization, archival work, or client-approval processes with strict revision control should look elsewhere.

Real-Time or Low-Latency Applications: The cloud-only rendering with queue dependencies makes OpenClaw unsuitable for live production, interactive installations, or any application requiring guaranteed generation timing.

Users with Local GPU Resources: If you already own high-end GPU hardware (RTX 4080/4090, A6000), locally run solutions through ComfyUI with AnimateDiff or Stable Video Diffusion offer more control, no queue times, and better cost economics at scale.

Final Verdict and Recommendations

The Bottom Line

OpenClaw occupies a specific niche: high-quality generative video for web-native content creators who prioritize ease of use over technical flexibility. It’s genuinely excellent at what it does, but what it does is deliberately constrained.

Rating Breakdown:

- Temporal Consistency: 9/10

- Motion Control: 8/10

- Ease of Use: 9/10

- Technical Flexibility: 4/10

- Reproducibility: 5/10

- Export Options: 4/10

- Value for Money: 7/10

Overall: 6.5/10 – Strong tool for specific use cases, but significant limitations prevent universal recommendation.
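The overall figure is consistent with a plain unweighted average of the seven category scores, rounded to the nearest half point. The sketch below checks that arithmetic; equal weighting is an assumption, and you may want to reweight the categories to match your own priorities.

```python
scores = {
    "Temporal Consistency": 9,
    "Motion Control": 8,
    "Ease of Use": 9,
    "Technical Flexibility": 4,
    "Reproducibility": 5,
    "Export Options": 4,
    "Value for Money": 7,
}

mean = sum(scores.values()) / len(scores)  # unweighted mean (assumed weighting)
overall = round(mean * 2) / 2              # round to the nearest 0.5

print(f"{mean:.2f} -> {overall}")  # 6.57 -> 6.5
```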

Pricing Considerations

At current pricing ($49/month for 200 generations, $99/month for 500 generations), OpenClaw sits in competitive territory with Runway and Pika. The per-generation cost becomes favorable only if you’re consistently producing usable results on the first or second attempt, which the platform’s quality enables more often than not.

For comparison: Runway’s Standard plan ($35/month) offers more flexible export options but less consistent temporal coherence. Kling’s Pro tier ($60/month) provides better reproducibility but a steeper learning curve.
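To see how the attempt count drives the real cost of OpenClaw’s two plans, here is a small sketch of the per-generation arithmetic. It assumes you use the full monthly allowance; the attempt counts are illustrative inputs, not measured values.

```python
def cost_per_generation(monthly_price: float, generations: int) -> float:
    """Price of a single generation if the whole allowance is used."""
    return monthly_price / generations


def cost_per_usable_clip(monthly_price: float, generations: int, attempts: int) -> float:
    """Effective cost of one keeper when it takes `attempts` generations to get it."""
    return cost_per_generation(monthly_price, generations) * attempts


plans = {"$49 plan": (49, 200), "$99 plan": (99, 500)}

for name, (price, gens) in plans.items():
    for attempts in (1, 2, 4):
        cost = cost_per_usable_clip(price, gens, attempts)
        print(f"{name}: {attempts} attempt(s) per keeper = ${cost:.3f}")
```

On the $49 plan a generation works out to about $0.245, and on the $99 plan about $0.198, so needing four attempts per keeper pushes the effective cost to roughly $0.98 and $0.79 respectively.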

Recommended Workflow Integration

If OpenClaw fits your use case, here’s how to maximize its value:

1. Use it for generation, not finishing: Treat OpenClaw outputs as high-quality intermediate renders that require upscaling and color finishing.

2. Batch similar shots together: Queue management favors concentrated work sessions over distributed generation.

3. Document successful parameters immediately: Given the seed parity issues, screenshot or export the metadata for any successful generation as soon as it finishes.

4. Complement with post-production tools: Budget for Topaz Video AI (upscaling), DaVinci Resolve (color), and After Effects (compositing) to bring outputs to final delivery specs.
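Step 3 is easy to automate on your side. The sketch below writes the generation parameters to a JSON sidecar file next to the downloaded clip. The key names (`seed`, `cfg_scale`, `scheduler`, `motion_strength`) and the parameter values are hypothetical placeholders for whatever you copy out of the export metadata. Nothing here calls an OpenClaw API.

```python
import json
from datetime import datetime, timezone
from pathlib import Path


def write_sidecar(clip_path: str, params: dict) -> Path:
    """Save generation parameters as <clip name>.json beside the clip."""
    clip = Path(clip_path)
    sidecar = clip.with_suffix(".json")
    record = {
        "clip": clip.name,
        "saved_at": datetime.now(timezone.utc).isoformat(),
        "params": params,
    }
    sidecar.write_text(json.dumps(record, indent=2, sort_keys=True), encoding="utf-8")
    return sidecar


def read_sidecar(clip_path: str) -> dict:
    """Load the parameters previously saved for a clip."""
    sidecar = Path(clip_path).with_suffix(".json")
    return json.loads(sidecar.read_text(encoding="utf-8"))["params"]


# Example with placeholder values:
# write_sidecar("renders/shot01.mp4", {
#     "seed": 123456, "cfg_scale": 7.0,
#     "scheduler": "DPM++", "motion_strength": 1.3,
# })
```

Keeping the sidecar under version control alongside project files gives you a record of what produced each approved shot, even if a later backend update means the seed no longer reproduces it.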

What I Want to See in Future Updates

- 4K export option (even at premium pricing tier)

- Image sequence export (PNG or EXR)

- Model versioning with legacy access

- Local rendering option for users with GPU infrastructure

- 10-bit color depth with Rec.709 color space option

- Parallel batch processing

- 24fps frame rate option

Conclusion

Is OpenClaw worth the hype? Yes, but only if you understand exactly what you’re getting.

It’s a phenomenally capable tool for a specific segment of video creators: those producing web-native content who value consistency and ease of use over technical flexibility. The temporal coherence and motion control genuinely represent meaningful advances in accessible AI video generation.

But it’s not a professional broadcast tool, it’s not suitable for VFX integration, and it’s not the right choice if you need deterministic reproducibility or local control.

After 30 days, OpenClaw remains in my active toolkit, but it’s one tool among several, deployed strategically for projects that align with its strengths. That’s not damning with faint praise; it’s the reality of a maturing technology landscape where specialization increasingly trumps universality.

My recommendation: Take advantage of the 7-day trial (50 free generations). Test it against your actual production needs, not theoretical capabilities. OpenClaw’s quality speaks for itself when applied to appropriate use cases, but those use cases are more specific than the marketing suggests.

Frequently Asked Questions

Q: Does OpenClaw work offline, or does it require an internet connection?

A: OpenClaw is entirely cloud-based with no offline capability. All rendering occurs on OpenClaw’s servers, so you need a stable internet connection for both generation and export. There is currently no option for local compute, even with high-end GPU hardware.

Q: Can I export OpenClaw videos in 4K resolution?

A: No, OpenClaw’s maximum export resolution is currently 1920×1080 (Full HD). There is no 4K export option available. For 4K delivery, you’ll need to upscale outputs using third-party tools like Topaz Video AI, though this introduces quality compromises.

Q: Why do I get different results when using the same seed value?

A: OpenClaw experiences seed parity inconsistencies due to periodic backend model updates that modify latent space interpretation. Approximately 30% of regenerations with identical parameters produce meaningfully different outputs. The platform doesn’t offer model versioning or legacy model access to ensure deterministic reproducibility.

Q: What file formats does OpenClaw support for export?

A: OpenClaw exports only H.264 video files at 30fps, 8-bit color depth. It does not support ProRes, image sequences (PNG/EXR), alternative frame rates (24fps or 60fps), or higher bit depths (10-bit). This limits integration with professional VFX and color grading workflows.

Q: How long does it take to generate a video in OpenClaw?

A: Generation time consists of queue time (4-12 minutes during peak hours) plus rendering time (3-4 minutes for standard 480-frame sequences). Total time per generation therefore ranges from 7 to 16 minutes during peak hours, depending on server load. Batch jobs process sequentially rather than in parallel, which can extend multi-generation projects significantly.

Q: Is OpenClaw suitable for professional broadcast production?

A: No, OpenClaw’s technical limitations (1080p maximum resolution, H.264 only, 8-bit color depth, sRGB color space) disqualify it from professional broadcast standards. It’s optimized for web-native content rather than broadcast or cinema workflows that require 4K, higher bit depths, and professional codecs.