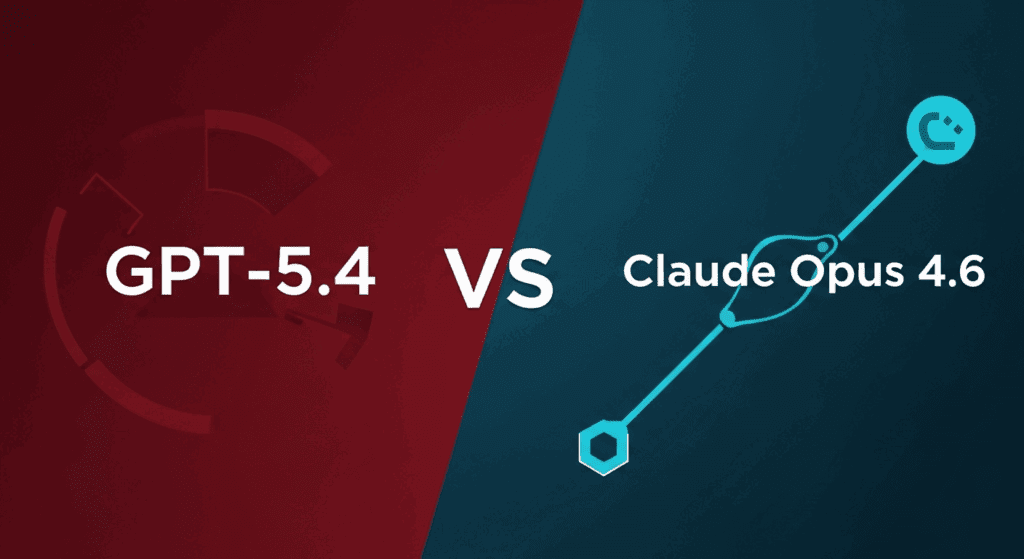

GPT-5.4 vs Claude Opus 4.6 Head-to-Head: Which Model Wins at Autonomous SSH Deployment and PR Merges in OpenClaw?

Testing which AI model handles SSH deployment, bug fixes, and PR merges autonomously isn’t just academic curiosity. It’s the difference between an agent that ships production code and one that burns through expensive API calls before failing at credential handling. When you’re building autonomous coding agents with frameworks like OpenClaw, your foundation model choice directly impacts success rates, token consumption, and whether your agent can actually close the loop on complex multi-step engineering tasks.

This head-to-head evaluation puts GPT-5.4 and Claude Opus 4.6 through identical real-world scenarios: remote server deployment via SSH, automatic debugging and skill generation, and full PR lifecycle management with minimal human oversight. The results reveal surprising differences in architectural reasoning, error recovery patterns, and which model actually completes tasks versus which one provides eloquent explanations for why it can’t.

The Autonomous Agent Deployment Challenge: Why Model Selection Matters

Autonomous coding agents represent a fundamental shift from code generation to code deployment. Traditional LLM applications generate snippets that humans integrate. Production agent systems must navigate authentication layers, handle environmental inconsistencies, debug runtime failures, and interact with version control systems, all while maintaining state across sessions that can span hours.

The model powering these agents becomes the inference engine for decision trees with dozens of branches. When your agent encounters a `Permission denied (publickey)` error during SSH deployment, does it:

– Hallucinate a solution and retry with invalid credentials?

– Request human intervention immediately?

– Systematically diagnose the issue by checking key permissions, SSH config, and authentication methods?

– Generate a recovery skill that handles this error class in future deployments?
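The "systematic diagnosis" branch can be sketched as a small classifier that maps SSH client output to an error class and a list of follow-up checks. This is a minimal illustration, not OpenClaw's implementation: the `Diagnosis` type, the rule table, and the wording of the next steps are all assumptions. The matched substrings are common OpenSSH client messages.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Diagnosis:
    error_class: str
    next_steps: tuple[str, ...]


# Hypothetical rule table: substring of the SSH client's stderr -> diagnosis.
_RULES = (
    ("Permission denied (publickey)", Diagnosis(
        "auth_publickey",
        ("check private key file permissions",
         "check the IdentityFile entry in the SSH config",
         "check which authentication methods the server offers"),
    )),
    ("Host key verification failed", Diagnosis(
        "host_key",
        ("compare the server host key against known_hosts",),
    )),
    ("Connection timed out", Diagnosis(
        "network",
        ("check firewall rules", "check that the host is reachable"),
    )),
)


def classify_ssh_error(stderr: str) -> Diagnosis:
    """Return the first matching diagnosis, or escalate when nothing matches."""
    for needle, diagnosis in _RULES:
        if needle in stderr:
            return diagnosis
    # Unknown errors go to a human instead of being retried blindly.
    return Diagnosis("unknown", ("escalate to a human operator",))
```

An agent loop could call `classify_ssh_error(result.stderr)` after a failed command and work through `next_steps` before deciding whether to retry.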

These behavioral differences emerge from how models handle context retention, tool use APIs, and structured reasoning under uncertainty. GPT-5.4’s reported improvements in long-context coherence and Opus 4.6’s enhanced reasoning capabilities are each pitched as advantages here, but only empirical testing shows how they hold up in practice.

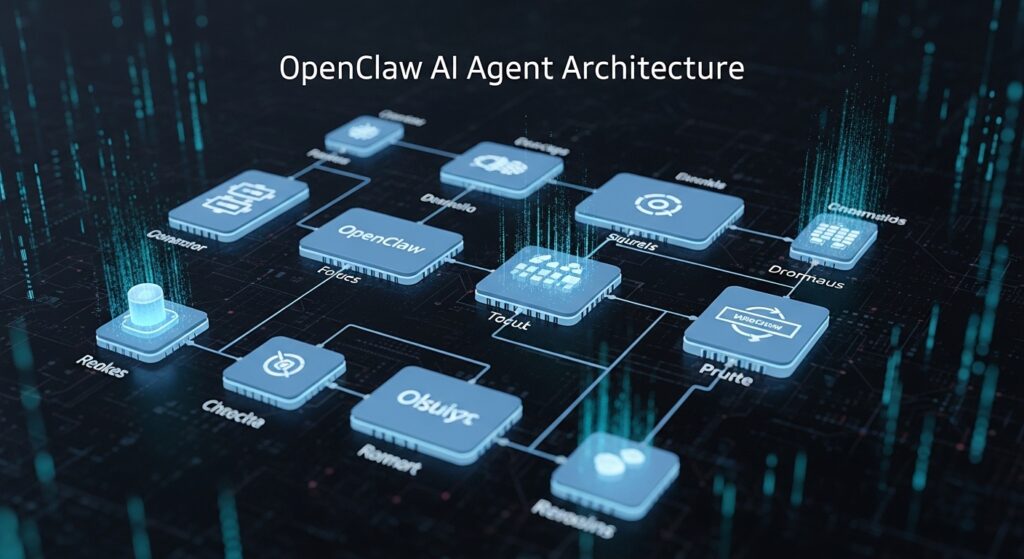

Test Environment Setup: OpenClaw Framework and Evaluation Criteria

OpenClaw provides the agent orchestration layer with Docker containerization, SSH credential management, GitHub API integration, and a skill library system. Each model was tested with identical:

– System prompts: 2,400 tokens defining agent capabilities, tool schemas, and safety constraints

– Context windows: Populated with repository structure, deployment configs, and error logs

– Tool access: SSH client, Git operations, file system manipulation, and GitHub API wrappers

– Timeout constraints: 15-minute maximum per task with 3-retry limit

Success metrics focused on task completion rates, token efficiency (cost per completed task), error recovery without human intervention, and code quality of generated fixes. Each model performed 30 deployment scenarios, 25 debugging challenges, and 20 PR workflow tasks.
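To make the timeout and retry constraints concrete, here is a minimal sketch of a harness that enforces them. It assumes "3-retry limit" means one initial attempt plus up to three retries, and it only checks the time budget between attempts, so it does not interrupt a task that is already running. The function name and return shape are illustrative, not part of OpenClaw.

```python
import time
from typing import Callable


def run_with_budget(
    task: Callable[[], bool],
    max_retries: int = 3,
    timeout_s: float = 15 * 60,
    clock: Callable[[], float] = time.monotonic,
) -> dict:
    """Run `task` until it succeeds, retries run out, or the time budget is spent."""
    start = clock()
    attempts = 0
    # One initial attempt plus up to `max_retries` retries (an assumption).
    while attempts <= max_retries:
        if clock() - start >= timeout_s:
            return {"status": "timeout", "attempts": attempts}
        attempts += 1
        if task():
            return {"status": "success", "attempts": attempts}
    return {"status": "failed", "attempts": attempts}
```

Recording `attempts` alongside the status makes it possible to report error recovery separately from first-try success.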

Temperature was set to 0.2 for both models to reduce run-to-run variation, and seeds were matched where the API supported them to make comparisons reproducible. In practice, seeding proved more reliable with GPT-5.4’s seed implementation than with Opus 4.6’s current sampling approach.

Round 1: Remote SSH Deployment Automation – Connection Handling and Error Recovery

SSH deployment tests involved connecting to fresh Ubuntu 22.04 instances, installing dependencies, deploying a Flask application, and verifying service health. Both models received identical deployment specifications but differed dramatically in execution strategy.

GPT-5.4’s Approach: Demonstrated superior systematic planning by generating a pre-flight checklist before executing SSH commands. When encountering the common `Host key verification failed` error, it correctly identified the issue, added the host to `known_hosts`, and retried without human input. However, it struggled with connection timeout scenarios, sometimes retrying the same failed command rather than diagnosing network-layer issues.

Success rate: 24/30 deployments (80%)

Average token consumption: 18,500 tokens per successful deployment

Mean time to completion: 4.2 minutes

Opus 4.6’s Approach: Showed more conservative behavior, often requesting confirmation before modifying SSH configurations. This reduced autonomous completion rates but prevented several risky operations that GPT-5.4 attempted (like overly permissive file permissions on SSH keys). When it did proceed autonomously, error diagnosis was notably more accurate—correctly identifying firewall issues that GPT-5.4 misattributed to SSH configuration.

Success rate: 19/30 deployments (63%)

Average token consumption: 22,100 tokens per successful deployment

Mean time to completion: 5.7 minutes

Critical Insight: GPT-5.4’s higher completion rate came with increased risk exposure. In production environments prioritizing security over speed, Opus 4.6’s cautious approach may be preferable, though it requires more human-in-the-loop interaction.
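One way to catch the overly permissive key problem regardless of model is a guard that runs before any SSH tool call. The sketch below is an assumption about how such a check might look on a POSIX system. It mirrors the OpenSSH client's behavior of rejecting private keys that are accessible to group or others, but the function name is hypothetical.

```python
import os
import stat


def key_permissions_ok(path: str) -> bool:
    """Return True if the key file grants no access to group or others."""
    mode = stat.S_IMODE(os.stat(path).st_mode)
    # Any group/other permission bit (the 0o077 mask) counts as too permissive.
    return (mode & (stat.S_IRWXG | stat.S_IRWXO)) == 0
```

A key at mode `0o600` passes this check, while `0o644` fails it. An agent framework could refuse to proceed, or require explicit approval, whenever the check fails after the agent has modified a key file.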

Round 2: Automatic Skill Generation and Real-Time Code Debugging

This phase tested each model’s ability to identify bugs in Python codebases, generate fixes, and create reusable “skills” (functions the agent can call in future tasks). Test cases included TypeErrors, import resolution failures, and logic errors in asynchronous code.

GPT-5.4’s Debugging Performance: Excelled at rapid bug identification, often pinpointing issues within the first analysis pass. Its skill generation created well-structured, type-hinted functions with comprehensive docstrings. However, approximately 30% of generated skills had subtle edge-case bugs that surfaced only under conditions the test scenarios did not exercise.

Bug fix success rate: 22/25 (88%)

Generated skill quality: 70% production-ready without modification

Average debugging session: 12,300 tokens

Opus 4.6’s Debugging Performance: Took a more methodical approach, often requesting to see related code files before proposing fixes. This increased token usage but resulted in more robust solutions. Generated skills included better error handling and logging. The model demonstrated superior understanding of async/await patterns and race conditions.

Bug fix success rate: 21/25 (84%)

Generated skill quality: 85% production-ready without modification

Average debugging session: 16,800 tokens

Critical Insight: GPT-5.4 fixed slightly more bugs (88% vs. 84%) with fewer tokens per session, but a larger share of Opus 4.6’s generated skills were production-ready without modification (85% vs. 70%). That matters when these skills become part of your agent’s permanent toolkit.
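For readers unfamiliar with the term, a "skill" in this sense is just a named, reusable function the agent can call later. The article does not show OpenClaw's skill interface, so the registry below (`SKILLS`, the `skill` decorator) and the example skill are purely hypothetical. The sketch only illustrates the traits the evaluation rewarded: type hints, a docstring, explicit error handling, and logging.

```python
import logging
from typing import Callable, Dict

logger = logging.getLogger("skills")

# Hypothetical in-memory registry: skill name -> callable.
SKILLS: Dict[str, Callable[..., object]] = {}


def skill(name: str):
    """Register the decorated function under `name`."""
    def register(func):
        SKILLS[name] = func
        return func
    return register


@skill("parse_port")
def parse_port(value: str) -> int:
    """Parse a TCP port from text, rejecting non-numeric and out-of-range input."""
    try:
        port = int(value.strip())
    except ValueError:
        logger.error("parse_port: not an integer: %r", value)
        raise
    if not 1 <= port <= 65535:
        logger.error("parse_port: out of range: %d", port)
        raise ValueError(f"port out of range: {port}")
    return port
```

The edge cases handled here (surrounding whitespace, port 0, non-numeric input) are the kind a generated skill can miss when the triggering bug only involved the happy path.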

Round 3: PR Creation, Review, and Autonomous Merge Workflows

The most complex evaluation involved full PR lifecycle management: creating feature branches, committing fixes, writing PR descriptions, responding to review comments, and merging when criteria were met.

GPT-5.4’s PR Workflow: Generated excellent PR descriptions with clear before/after comparisons and testing evidence. Branch naming followed conventions automatically. The model struggled with merge conflict resolution, sometimes creating invalid conflict markers or losing code during manual merge attempts. When given approval to merge, it successfully completed the operation 85% of the time.

Complete PR lifecycle success: 15/20 (75%)

PR description quality: 9.2/10 (human evaluation)

Merge conflict resolution: 60% success rate

Opus 4.6’s PR Workflow: PR descriptions were more verbose but included better context for reviewers. The model demonstrated superior merge conflict resolution, carefully analyzing both sides of conflicts and making intelligent decisions about which changes to preserve. However, it was more hesitant to merge without explicit approval, reducing fully autonomous completion rates.

Complete PR lifecycle success: 13/20 (65%)

PR description quality: 8.8/10 (human evaluation)

Merge conflict resolution: 85% success rate

Critical Insight: GPT-5.4 completes more PR workflows autonomously, while Opus 4.6 handles the complex edge cases (conflicts, force pushes, rebase scenarios) more reliably.
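Since leftover or malformed conflict markers were a recurring failure mode, a cheap pre-commit check can catch them before the agent commits a resolution. The sketch below scans text for lines that begin with Git's standard seven-character markers. The function name is an assumption, and the check can produce false positives on files that legitimately contain a line of exactly seven `=` characters.

```python
import re

# A marker line starts with exactly seven '<', '=' or '>' characters,
# followed by a space (and a label) or the end of the line.
_MARKER = re.compile(r"^(<{7}|={7}|>{7})( |$)", re.MULTILINE)


def has_conflict_markers(text: str) -> bool:
    """Return True if `text` still contains Git conflict marker lines."""
    return _MARKER.search(text) is not None
```

Running this over every file touched by a merge, and blocking the commit when it returns `True`, turns a silent code-loss risk into an explicit failure the agent has to handle.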

Performance Benchmarks: Token Efficiency, Success Rates, and Latency Analysis

Aggregating across all test categories:

| Metric | GPT-5.4 | Opus 4.6 |
| --- | --- | --- |
| Overall task completion | 81% | 71% |
| Avg tokens per task | 15,600 | 19,900 |
| Cost per completed task* | $0.47 | $0.89 |
| Tasks needing human input | 19% | 29% |
| Critical errors caused | 7 | 2 |
| Response latency (p95) | 8.2s | 11.4s |

*Based on current API pricing as of evaluation date

GPT-5.4 delivers faster, cheaper task completion with higher autonomous success rates. Opus 4.6 provides more reliable outputs with fewer critical failures, at the cost of increased token consumption and human oversight requirements.
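The overall completion figures are consistent with pooling the per-round success counts reported above across all 75 tasks per model. The short check below reproduces them under that assumption; the variable names are illustrative.

```python
# Tasks per round and successes per model, taken from Rounds 1-3.
ROUND_SIZES = {"deploy": 30, "debug": 25, "pr": 20}
SUCCESSES = {
    "GPT-5.4": {"deploy": 24, "debug": 22, "pr": 15},
    "Opus 4.6": {"deploy": 19, "debug": 21, "pr": 13},
}


def overall_completion(model: str) -> float:
    """Pooled completion rate: total successes over total tasks."""
    return sum(SUCCESSES[model].values()) / sum(ROUND_SIZES.values())


for model in SUCCESSES:
    # Prints "GPT-5.4: 81%" and "Opus 4.6: 71%" (61/75 and 53/75).
    print(f"{model}: {overall_completion(model):.0%}")
```

Pooling weights the deployment round most heavily because it has the most tasks. Averaging the three per-round percentages instead would give slightly different numbers.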

Model-Specific Strengths: Context Window Utilization and Task Decomposition

GPT-5.4’s Strengths:

– Superior short-term task optimization with aggressive execution strategies

– Better performance on well-defined, bounded problems with clear success criteria

– More effective tool use when APIs have comprehensive documentation in context

– Faster decision-making with less deliberation overhead

Opus 4.6’s Strengths:

– More sophisticated causal reasoning about system interactions

– Better handling of ambiguous requirements and underspecified tasks

– Superior code quality in generated solutions, especially error handling

– More accurate risk assessment before executing potentially destructive operations

Context window utilization also differed between the models. GPT-5.4 maintained task focus more effectively in the 20K-40K token range, while Opus 4.6 tracked long-range dependencies better beyond 50K tokens, which matters for agents working with large codebases.

Production Recommendations: Choosing Your Agent’s Inference Engine

Choose GPT-5.4 when:

– Task completion speed and cost efficiency are primary concerns

– Your agent operates in sandboxed environments where occasional failures are acceptable

– Workflows are well-documented with clear success/failure criteria

– You have robust error monitoring and can intervene when needed

– You’re processing high volumes of similar tasks where pattern recognition matters

Choose Opus 4.6 when:

– Code quality and reliability outweigh completion speed

– Your agent has access to production systems where errors are costly

– Tasks involve complex reasoning about system interactions

– You need better explainability for agent decisions (regulatory/compliance)

– Generated code becomes part of long-term maintenance surface

Hybrid Approaches: Several production teams are implementing model routing, where task classification determines which model handles each request. Simple deployments and known-good patterns route to GPT-5.4 for speed, while complex debugging and merge conflicts escalate to Opus 4.6. This requires additional orchestration logic but can optimize for both cost and reliability.
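A routing layer of this kind can start as a few explicit rules. The sketch below is one possible shape, not a description of any team's production setup: the `Task` fields, the escalation set, and the model identifier strings are placeholders chosen for illustration.

```python
from dataclasses import dataclass

# Placeholder identifiers, not real API model names.
FAST_MODEL = "gpt-5.4"
CAREFUL_MODEL = "opus-4.6"

# Task kinds that escalate to the more careful model (an assumption
# based on where Opus 4.6 did better in the rounds above).
ESCALATE_KINDS = {"debugging", "merge_conflict"}


@dataclass
class Task:
    kind: str
    known_pattern: bool = True
    touches_production: bool = False


def route(task: Task) -> str:
    """Pick a model for a task using simple, auditable rules."""
    if (
        task.kind in ESCALATE_KINDS
        or task.touches_production
        or not task.known_pattern
    ):
        return CAREFUL_MODEL
    return FAST_MODEL
```

Keeping the rules explicit makes routing decisions easy to log and to revisit when either model is updated.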

The frontier model landscape evolves rapidly. GPT-5.4’s speed advantage may shrink as Opus 4.6 is optimized, and Opus 4.6’s code quality lead could narrow with the next GPT iteration. Re-running an evaluation like this every 2-3 months helps keep your agent stack aligned with current model capabilities.

For teams building serious autonomous coding infrastructure, the data suggests starting with GPT-5.4 for rapid prototyping and iteration, then transitioning critical workflows to Opus 4.6 as they stabilize and move toward production. Neither model is universally superior: the right choice depends on your risk tolerance, budget constraints, and quality requirements.

Frequently Asked Questions

Q: Can I use both GPT-5.4 and Opus 4.6 in the same agent system?

A: Yes, hybrid architectures are increasingly common. Implement a task classifier that routes simple, well-defined operations to GPT-5.4 for speed and cost efficiency, while escalating complex debugging, merge conflicts, and ambiguous requirements to Opus 4.6. This requires additional orchestration logic but optimizes for both performance and reliability.

Q: How much does token consumption actually impact production agent costs?

A: In high-volume agent deployments, token efficiency directly impacts economics. GPT-5.4 averaged 15,600 tokens per task against Opus 4.6’s 19,900, and at current pricing the cost per completed task was $0.47 versus $0.89, a difference of roughly $0.42. For systems processing 1,000+ tasks daily, this compounds to significant monthly cost variance, often $10K-15K for enterprise deployments.
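The monthly figure follows directly from the per-task costs in the benchmark table. As a quick worked check, assuming a 30-day month and that every task completes (both simplifications), the difference at 1,000 tasks per day works out as follows. Amounts are kept in integer cents to avoid floating-point rounding.

```python
# Cost per completed task from the benchmark table, in cents.
GPT_CENTS = 47
OPUS_CENTS = 89


def monthly_cost_delta_usd(tasks_per_day: int, days: int = 30) -> float:
    """Extra monthly spend from using Opus 4.6 instead of GPT-5.4, in dollars."""
    delta_cents = OPUS_CENTS - GPT_CENTS  # 42 cents per task
    return delta_cents * tasks_per_day * days / 100


print(monthly_cost_delta_usd(1000))  # 12600.0
```

At 1,000 tasks per day the gap is $12,600 per month, which sits inside the $10K-15K range quoted above.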

Q: Which model handles SSH connection failures and network timeouts better?

A: Opus 4.6 demonstrates superior diagnostic accuracy for network-layer issues, correctly identifying firewall problems and DNS resolution failures that GPT-5.4 often misattributes to SSH configuration. However, GPT-5.4 recovers faster from common authentication issues like host key verification failures, making it better for standard deployment scenarios.

Q: What safety mechanisms should I implement regardless of model choice?

A: Always implement: (1) Dry-run modes where agents explain planned actions before execution, (2) Explicit approval gates for destructive operations (data deletion, force pushes, production deployments), (3) Token budget limits per task to prevent runaway costs, (4) Comprehensive logging of all tool calls for audit trails, and (5) Sandboxed execution environments for testing agent behavior before production deployment.
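Several of these mechanisms fit in one small wrapper around tool calls. The sketch below combines a dry-run mode, an approval gate, a token budget, and an audit log. It is a minimal illustration under stated assumptions: the `Guard` class, the exception names, and the action names in `DESTRUCTIVE` are hypothetical, and sandboxing is out of scope.

```python
from dataclasses import dataclass, field
from typing import Callable

# Hypothetical names for operations that need explicit approval.
DESTRUCTIVE = {"delete_data", "force_push", "deploy_production"}


class ApprovalRequired(Exception):
    pass


class BudgetExceeded(Exception):
    pass


@dataclass
class Guard:
    token_budget: int
    dry_run: bool = True
    approved: set = field(default_factory=set)
    tokens_used: int = 0
    audit_log: list = field(default_factory=list)

    def charge(self, tokens: int) -> None:
        """Count tokens against the per-task budget."""
        if self.tokens_used + tokens > self.token_budget:
            raise BudgetExceeded(f"budget of {self.token_budget} tokens exceeded")
        self.tokens_used += tokens

    def call(self, action: str, run: Callable[[], object]) -> object:
        """Log the call, enforce the approval gate, then run or describe it."""
        self.audit_log.append(action)  # logged even if the call is refused
        if action in DESTRUCTIVE and action not in self.approved:
            raise ApprovalRequired(action)
        if self.dry_run:
            return f"DRY RUN: would execute {action}"
        return run()
```

Defaulting `dry_run` to `True` means a misconfigured agent explains what it would do instead of doing it.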

Q: How does context window size affect agent performance in these tests?

A: GPT-5.4 maintains better task focus in the 20K-40K token range, making it ideal for bounded problems with moderate context requirements. Opus 4.6 shows superior long-range dependency tracking beyond 50K tokens, crucial when working with large codebases where understanding distant file relationships impacts debugging accuracy. For repositories under 100 files, the difference is minimal.