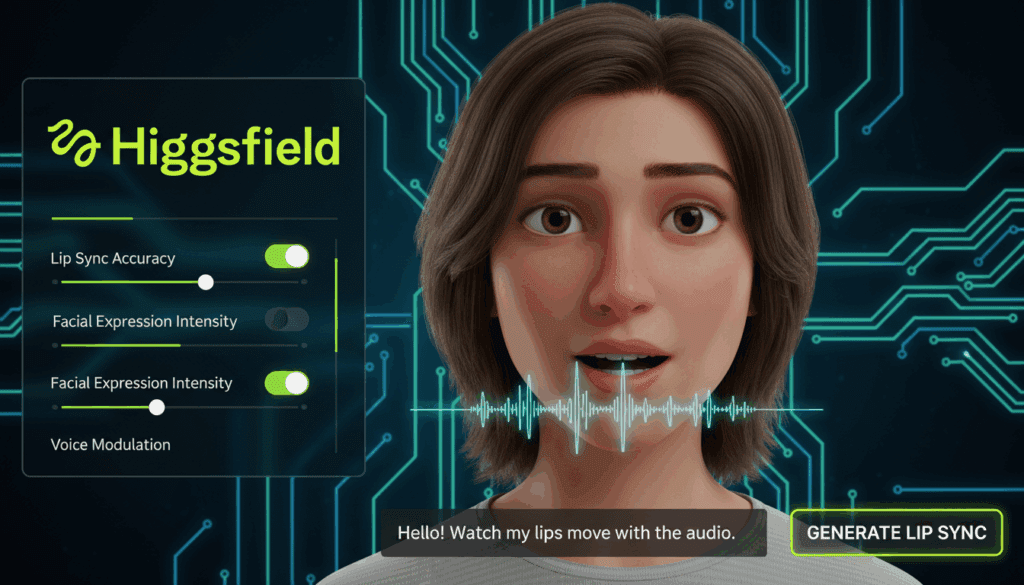

How Creators Get Natural AI Lip Sync in 2025 Without Losing Their Mind

AI lip sync should be simple. Put in a song, upload a face, get a clean performance.

That is not what you get with most tools in 2025. Creators complain about slow rendering, broken timing, flicker, mushy faces and models that fail once the head turns.

On YouTube, tutorials focus on Dzine AI and a few other tools that fix these problems with better timing and cleaner mouth shapes. On Reddit, creators report that older models such as Wan 2.1 or InfiniteTalk fall apart when the subject moves, and that masking tricks only make the video inconsistent.

This guide shows how creators get natural lip sync today, where Dzine AI fits, and how to avoid the common traps that break timing.

Why do most AI lip sync tools still look off in 2025?

AI lip sync breaks because most systems guess mouth shapes from audio without tracking jaw rotation or head movement. Once the subject turns, the mouth stops matching the beat.

Creators report three main issues:

- Timing drifts after a few seconds

- Jaw movement collapses during side angles

- Small details in vowels and consonants look flat

Reddit discussions show this clearly. Users trying Wan 2.2 note strong full-body motion but weak lip sync. InfiniteTalk is still built on the older Wan 2.1 base, which gives less control. Masking the face does not help because it disconnects the lip layer from the rest of the motion.

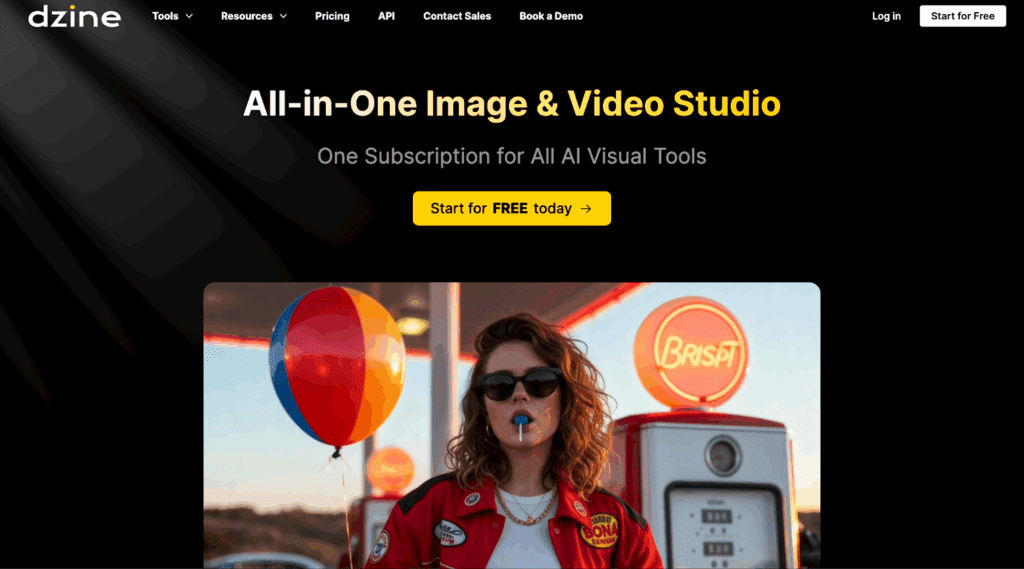

Why do creators use Dzine AI for clean lip sync?

Creators pick Dzine AI because it keeps timing more stable while leaving head motion intact. YouTube tutorials show creators loading audio, selecting a face and getting a synced result without heavy cleanup.

Key reasons:

- Better frame-to-frame mouth tracking

- Strong timing alignment with fast songs

- Cleaner shapes for vowels, plosives and sibilants

- Less flicker when subjects move

- Simple workflow that avoids patching multiple tools together

Most creators want a single export with no manual fixing. Dzine AI gives that outcome more often than the older models.

How do you set up clean lip sync before using any AI tool?

AI lip sync fails when the source video has weak facial landmarks, the points a model tracks on the eyes, nose, lips and jaw. You need the right setup from the start.

Use clear frontal angles

Keep the whole face inside the frame. Avoid deep shadows or busy backgrounds.

Match the audio level

Upload audio with stable volume. Sudden spikes reduce accuracy.
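If you want to check volume stability before uploading, a short script can help. The Python sketch below is not part of Dzine AI; it assumes you have already decoded your vocal track into a list of float samples between -1.0 and 1.0 (for example with an audio library of your choice). It flags short windows that are much louder than the typical level, so you can tame them in an audio editor, then peak-normalizes the track. The function names, the 4410-sample window (0.1 s at 44.1 kHz) and the 4x spike ratio are illustrative assumptions, not recommended settings.

```python
import math
from statistics import median


def window_rms(samples, window):
    """RMS level of consecutive, non-overlapping windows (a trailing partial window is ignored)."""
    levels = []
    for start in range(0, len(samples) - window + 1, window):
        chunk = samples[start:start + window]
        levels.append(math.sqrt(sum(s * s for s in chunk) / window))
    return levels


def find_spikes(samples, window=4410, ratio=4.0):
    """Indices of windows whose RMS exceeds `ratio` times the median window RMS.

    window=4410 is 0.1 s at 44.1 kHz; both defaults are hypothetical starting points.
    """
    levels = window_rms(samples, window)
    if not levels:
        return []
    typical = median(levels)
    if typical == 0:
        return []
    return [i for i, level in enumerate(levels) if level > ratio * typical]


def peak_normalize(samples, target_peak=0.9):
    """Scale all samples by one gain so the loudest sample reaches `target_peak`."""
    peak = max((abs(s) for s in samples), default=0.0)
    if peak == 0:
        return list(samples)
    gain = target_peak / peak
    return [s * gain for s in samples]
```

Peak normalization only sets the overall level; it does not smooth out spikes, which is why the spike check runs separately.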

Keep head movement natural

Small motion looks real. Big swings break tracking.

Use short takes

Work in short clips. Long sequences lose timing.

Export high resolution

Use 1080p or higher. Low-resolution faces confuse the model.

How do creators get natural lip sync with Dzine AI?

Step 1: Load audio and choose the style

Use a clean vocal file. Select a character style that matches the emotion of the song.

Step 2: Upload the face

Use a well-lit face. Dzine AI picks landmarks faster this way.

Step 3: Set motion preferences

Keep head motion light. Let the system control the small details.

Step 4: Preview timing

Check the mouth shape on a short five-second preview. Adjust the audio start point if needed.
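Adjusting the start point comes down to simple arithmetic: an offset you see in frames converts to milliseconds, and milliseconds convert to audio samples. The sketch below assumes decoded float samples and a known video frame rate; the helper names are hypothetical and are not a Dzine AI feature. It also cuts the five-second window you would use for a preview.

```python
def frames_to_ms(frames, fps):
    """Convert a visible offset in video frames to milliseconds."""
    return frames * 1000.0 / fps


def ms_to_samples(ms, sample_rate):
    """Convert milliseconds to a whole number of audio samples."""
    return round(ms * sample_rate / 1000.0)


def shift_audio(samples, offset_ms, sample_rate):
    """Move the audio start point.

    A positive offset pads silence before the audio; a negative offset trims
    that much from the start.
    """
    n = ms_to_samples(abs(offset_ms), sample_rate)
    if offset_ms >= 0:
        return [0.0] * n + list(samples)
    return list(samples[n:])


def preview_window(samples, sample_rate, start_s=0.0, length_s=5.0):
    """Return the slice of samples covering a short preview (five seconds by default)."""
    start = round(start_s * sample_rate)
    end = start + round(length_s * sample_rate)
    return samples[start:end]
```

For example, a two-frame offset at 25 fps is 80 ms, which is 3,840 samples at 48 kHz.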

Step 5: Export the full clip

Render in the highest quality your device supports. Slow devices take more time, but the sync stays solid.

How does Dzine AI compare with other tools for lip sync?

| Tool | Best use case | Main strengths | Key limits |
| --- | --- | --- | --- |
| Dzine AI | Natural lip sync for music videos | Clean timing, low flicker, simple flow | Heavy scenes take longer to render |
| Wan 2.2 | Complex full-body movement | Strong motion tracking | Weak lip sync |
| InfiniteTalk | Simple face-only lip sync | Fast previews | Dated architecture |
| FaceSwap tools | Quick face match | Fast setup | Poor timing |
| Local V2V models | Private or offline work | Full control | Strong GPUs required |

Why do users struggle with Wan and InfiniteTalk?

Creators report three main issues:

Timing

Wan 2.2 produces great full-body motion but weak lip sync. InfiniteTalk stays locked to older settings that do not match facial timing from new models.

Inconsistency

Masking only the face creates mismatch when the rest of the head moves. This breaks realism.

GPU pressure

Long clips often fail because they run out of VRAM. Even users with high-end GPUs report memory errors.

These problems explain why creators shift toward tools like Dzine AI that produce cleaner sync with fewer adjustments.

What workflow should creators use in 2025 for clean lip sync?

Use this simple setup:

Step 1: Prepare the face

Shoot a clean portrait clip with stable lighting.

Step 2: Prepare the audio

Trim the audio. Remove noise. Keep volume stable.
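Trimming can be scripted as well. This minimal sketch, again assuming decoded float samples between -1.0 and 1.0, drops leading and trailing samples that stay below a loudness threshold, and can keep a few samples of padding so soft consonants at the edges are not clipped. The function name and the 0.02 threshold are assumed starting points, and the sketch does not remove noise.

```python
def trim_silence(samples, threshold=0.02, pad=0):
    """Drop leading and trailing samples quieter than `threshold`.

    `pad` keeps that many extra samples on each side of the loud region.
    Returns an empty list if nothing reaches the threshold.
    """
    loud = [i for i, s in enumerate(samples) if abs(s) >= threshold]
    if not loud:
        return []
    start = max(loud[0] - pad, 0)
    end = min(loud[-1] + pad + 1, len(samples))
    return list(samples[start:end])
```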

Step 3: Test a short preview

Run a five-second sample in Dzine AI. Adjust timing until it looks right.

Step 4: Render short segments

Export in two or three sections. This avoids drift.
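To plan those sections, you can split the total duration into near-equal parts. The sketch below is a hypothetical helper, not a Dzine AI setting: give it the clip length and the longest segment you want to render, and it returns start and end times in seconds. The optional overlap adds a little shared audio at each seam, on the assumption that it makes the cuts easier to line up in your editor; with overlap, a segment can run slightly past the limit.

```python
import math


def plan_segments(total_s, max_segment_s, overlap_s=0.0):
    """Split a clip into near-equal (start, end) segments of at most max_segment_s seconds.

    overlap_s extends each segment on both sides, clamped to the clip bounds.
    """
    if total_s <= 0 or max_segment_s <= 0:
        raise ValueError("durations must be positive")
    count = math.ceil(total_s / max_segment_s)
    step = total_s / count
    segments = []
    for i in range(count):
        start = max(i * step - overlap_s, 0.0)
        end = min((i + 1) * step + overlap_s, total_s)
        segments.append((round(start, 3), round(end, 3)))
    return segments
```

For a 90-second song and a 40-second limit, it returns three 30-second segments: (0.0, 30.0), (30.0, 60.0) and (60.0, 90.0).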

Step 5: Edit inside your main editor

Add final effects inside CapCut, Premiere Pro or your main editing tool.

So is Dzine AI the best option for natural lip sync in 2025?

Dzine AI gives the most stable lip sync for music videos and short performance clips. It handles timing well and reduces the small errors that make other models look strange.

Other tools produce great results for motion or full scenes, but they fail on the fine details of the mouth. Dzine AI stays ahead because it keeps these details clean without extra work.

If you want natural timing, simple controls and fewer fixes, Dzine AI is the option that saves the most time.

Conclusion

Dzine AI gives creators clean lip sync without complex steps. It keeps timing tight and reduces the flicker you get in older tools. If you want stable results for short clips, this tool gives you the best balance between control and quality.

Frequently Asked Questions

Does Dzine AI work with long videos?

Short clips work better. Break long content into segments.

Does Dzine AI need a strong GPU?

It works on normal systems, but high resolution runs faster on stronger GPUs.

Can you fix timing after export?

Small edits work in CapCut or Premiere, but clean timing starts with a good preview inside Dzine AI.

Can Dzine AI handle side angles?

Slight angles work. Sharp turns break tracking.

Do older models still work for lip sync?

Some do, but most struggle with timing and mouth shapes.