Why Seedance 2.0 Has Hollywood Worried: AI-Generated Celebrity Likeness, Copyright Risk, and the Future of IP Protection

ByteDance just made AI videos of Tom Cruise and Brad Pitt—without permission. That single sentence explains why Seedance 2.0 has Hollywood executives, entertainment lawyers, and VFX supervisors in emergency meetings.

This isn’t just another generative video model. Seedance 2.0 represents a shift from “AI-generated content” to AI-generated identity replication at cinematic fidelity. And for content creators and filmmakers, the legal and ethical implications are no longer theoretical.

Let’s break down what’s happening technically, legally, and strategically.

1. How Seedance 2.0 Recreates Actors’ Likenesses From Simple Text Prompts

At its core, Seedance 2.0 appears to combine large-scale diffusion video modeling with identity-conditioned latent representations. In practical terms, that means:

– The model has learned high-resolution facial embeddings

– It maps those embeddings into a controllable latent space

– It maintains identity consistency across temporal frames

This is not simple face-swapping.

From Prompt to Photorealistic Actor

When a user types:

> “Tom Cruise in a cyberpunk courtroom drama, cinematic lighting, 35mm film grain, dramatic monologue”

Seedance 2.0 likely performs several internal steps:

1. Text Encoding via a transformer-based text encoder (for example, a CLIP-style model aligned with visual features).

2. Latent Conditioning where identity vectors associated with “Tom Cruise” are activated.

3. Temporal Diffusion Rendering using a video diffusion backbone.

4. Frame-to-Frame Coherence via latent consistency mechanisms.
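One common way to get the frame-to-frame coherence in step 4 is to let each frame attend to the others. The sketch below is a toy illustration of that idea in plain Python, not a description of Seedance 2.0's internals, which are not public. The function names and the three-frame example are hypothetical, and the learned query/key/value projections a real model would use are left out.

```python
import math

def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def cross_frame_attention(frames):
    """Blend each frame's feature vector with every frame's features.

    `frames` is a list of equal-length feature vectors, one per frame.
    Queries, keys, and values are the raw features here (an assumption
    made to keep the toy small).
    """
    dim = len(frames[0])
    scale = 1.0 / math.sqrt(dim)
    blended = []
    for query in frames:
        # Scaled dot-product score between this frame and every frame.
        scores = [scale * sum(q * k for q, k in zip(query, key)) for key in frames]
        weights = softmax(scores)
        # Weighted average of all frames' features.
        blended.append([
            sum(w * frame[i] for w, frame in zip(weights, frames))
            for i in range(dim)
        ])
    return blended

# Three frames whose second feature jitters around 1.0.
frames = [[1.0, 0.8], [1.0, 1.0], [1.0, 1.2]]
smoothed = cross_frame_attention(frames)

spread_before = max(f[1] for f in frames) - min(f[1] for f in frames)
spread_after = max(f[1] for f in smoothed) - min(f[1] for f in smoothed)
print(spread_before, spread_after)
```

Because every output is a weighted average of all frames, the jitter in the second feature shrinks after attention. That averaging effect is what keeps a face from drifting between frames.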

Modern systems use techniques similar to:

– Latent Consistency Models (LCM) for sampling in far fewer denoising steps

– Euler ancestral (“Euler a”) samplers, which re-inject noise at each denoising step and are often chosen for sharper detail

– Cross-frame attention layers for motion continuity

– Fixed-seed control (reusing the same noise seed) to keep identity stable across generations
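To make the sampler and seed items above concrete, here is a minimal sketch of an Euler ancestral sampling loop driven by a seeded random generator. It follows the Euler ancestral update commonly used in open-source diffusion samplers. The denoiser is a stand-in that always predicts a fixed target, and `TARGET`, `SIGMAS`, and the function names are assumptions for illustration only. Nothing here reflects how Seedance 2.0 is actually implemented.

```python
import math
import random

def euler_ancestral_sample(denoise, sigmas, size, seed):
    """Run a toy Euler ancestral sampler over a 1-D latent of length `size`."""
    # The seed fixes both the starting noise and the noise injected per step.
    rng = random.Random(seed)
    x = [rng.gauss(0.0, 1.0) * sigmas[0] for _ in range(size)]
    for sigma_from, sigma_to in zip(sigmas, sigmas[1:]):
        denoised = denoise(x, sigma_from)
        # Split the next noise level into a deterministic part (sigma_down)
        # and freshly injected noise (sigma_up).
        sigma_up = min(
            sigma_to,
            math.sqrt(sigma_to**2 * (sigma_from**2 - sigma_to**2) / sigma_from**2),
        )
        sigma_down = math.sqrt(sigma_to**2 - sigma_up**2)
        for i in range(size):
            d = (x[i] - denoised[i]) / sigma_from   # direction toward the prediction
            x[i] += d * (sigma_down - sigma_from)   # deterministic Euler step
            x[i] += rng.gauss(0.0, 1.0) * sigma_up  # ancestral noise injection
    return x

TARGET = [0.5, -1.0, 2.0]  # hypothetical "clean" latent

def toy_denoiser(x, sigma):
    """Stand-in for a trained network: always predicts TARGET."""
    return list(TARGET)

SIGMAS = [10.0, 5.0, 2.0, 1.0, 0.5]  # decreasing noise levels (assumed)

run_a = euler_ancestral_sample(toy_denoiser, SIGMAS, 3, seed=42)
run_b = euler_ancestral_sample(toy_denoiser, SIGMAS, 3, seed=42)
run_c = euler_ancestral_sample(toy_denoiser, SIGMAS, 3, seed=43)

print(run_a == run_b)  # True: same seed, same result
print(run_a == run_c)  # False: different seed, different result
```

Reusing a seed reproduces a generation exactly, which is why seed control matters both for stabilizing a character and for anyone trying to refine a likeness step by step.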

What makes Seedance 2.0 alarming to studios is not that it can generate “a man who looks like Tom Cruise.”

It’s that it can generate Tom Cruise performing new scenes that were never filmed.

And it can do it at near-cinematic quality.

The Difference Between Stylization and Identity Replication

Tools like Runway, Sora, and Kling generate impressive video. But Seedance appears optimized for:

– Face topology preservation

– Micro-expression modeling

– Voice and lip-sync alignment (if multimodal)

– Lighting-adaptive skin rendering

If so, that implies heavy training exposure to public footage of the actors in question.

Even if no one manually uploaded “Tom Cruise dataset.zip,” large-scale scraping of interviews, film clips, red carpets, and press tours would provide enough data to construct a high-fidelity identity manifold.

From a machine learning perspective, this is feature generalization.

From a legal perspective, this may be identity appropriation.

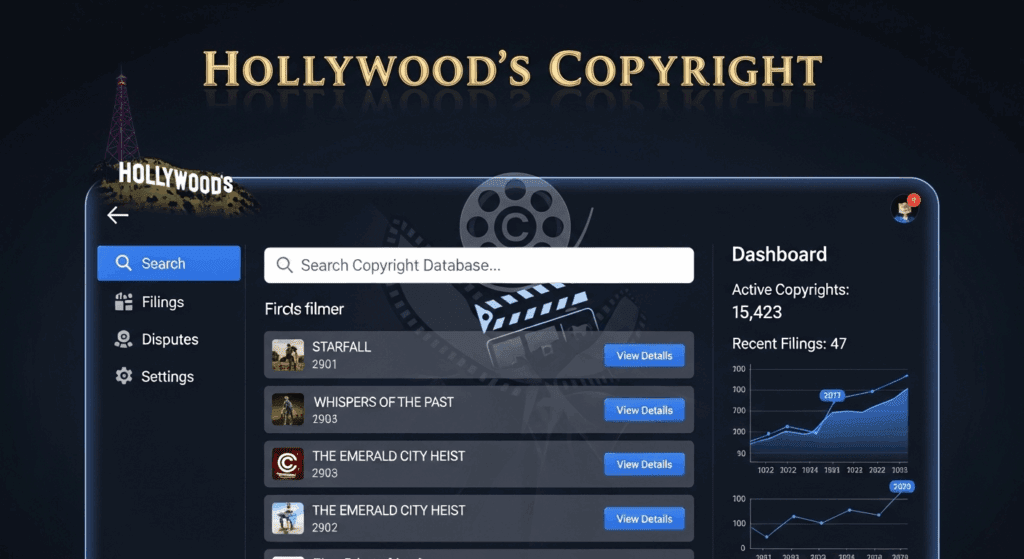

2. Hollywood’s Copyright Claims and ByteDance’s Promised Fixes

The legal battle forming around Seedance 2.0 revolves around three overlapping areas:

1. Copyright infringement

2. Right of publicity

3. Unauthorized derivative works

Let’s separate them technically.

Copyright vs. Likeness

Copyright protects:

– Specific films

– Scripts

– Performances fixed in media

It does not automatically protect a person’s face.

That falls under the right of publicity, which in the United States is governed largely by state law and gives individuals control over commercial use of their identity.

If Seedance 2.0 generates:

> “Brad Pitt starring in a new WWII epic directed by Christopher Nolan”

That video may not copy a specific frame from Inglourious Basterds.

But it may still violate:

– Publicity rights

– Trademark protections (if associated branding appears)

– SAG-AFTRA contractual agreements

This is why Hollywood is alarmed.

The threat is not piracy.

It’s synthetic performance replacement.

The Training Data Problem

Studios argue that if Seedance was trained on copyrighted films without licensing agreements, the model itself could be considered a derivative work.

Technically, diffusion models are not designed to store video clips.

They encode statistical relationships learned from the training data in their weights.

But here’s the complication:

If a model can reliably reconstruct an actor’s recognizable identity under diverse prompts, that suggests strong memorization of identity-defining features.

Courts are now wrestling with questions like:

– Does statistical learning equal copying?

– Is a generative latent embedding a derivative work?

– Can identity be reverse-engineered from outputs?

ByteDance has reportedly promised:

– Stronger content filters

– Celebrity name suppression

– Watermarking AI-generated outputs

– Opt-out mechanisms for public figures

But filtering names like “Tom Cruise” does not solve:

> “A Mission: Impossible star with intense blue eyes performing a stunt speech on a skyscraper.”

Prompt abstraction bypasses keyword filters.
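The bypass is easy to demonstrate. Below is a minimal sketch of a naive name-based filter. The blocklist and function name are hypothetical and are not ByteDance's actual filter.

```python
import re

# Hypothetical blocklist of celebrity names (lowercase).
BLOCKED_NAMES = ["tom cruise", "brad pitt"]

def passes_keyword_filter(prompt):
    """Return True if the prompt contains none of the blocked names."""
    normalized = re.sub(r"\s+", " ", prompt.lower())
    return not any(name in normalized for name in BLOCKED_NAMES)

direct = "Tom Cruise in a cyberpunk courtroom drama"
indirect = (
    "A Mission: Impossible star with intense blue eyes "
    "performing a stunt speech on a skyscraper"
)

print(passes_keyword_filter(direct))    # False: the name is caught
print(passes_keyword_filter(indirect))  # True: the description slips through
```

The filter only sees strings, so any description that points to the same person without naming them passes untouched.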

The real technical solution would require:

– Identity embedding detection

– Likeness similarity scoring

– Face-matching prevention layers

– Possibly adversarial training against celebrity replication

That’s computationally expensive, and publicly admitting it is necessary carries legal risk of its own.
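Likeness similarity scoring, one of the measures listed above, can be sketched as a cosine-similarity check between a face embedding from a generated frame and a registry of reference embeddings. This is a rough illustration under stated assumptions: the three-dimensional vectors, the registry names, and the 0.95 threshold are invented. A real system would take embeddings from a face-recognition model and tune the threshold empirically.

```python
import math

def cosine_similarity(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0.0 or norm_b == 0.0:
        return 0.0
    return dot / (norm_a * norm_b)

# Hypothetical registry of reference face embeddings for protected identities.
REFERENCE_EMBEDDINGS = {
    "public_figure_a": [0.9, 0.1, 0.4],
    "public_figure_b": [0.1, 0.8, 0.6],
}

def closest_likeness(embedding):
    """Return (name, score) for the most similar registered identity."""
    return max(
        ((name, cosine_similarity(embedding, ref))
         for name, ref in REFERENCE_EMBEDDINGS.items()),
        key=lambda pair: pair[1],
    )

def should_block(embedding, threshold=0.95):
    """Block a frame whose face is too close to any registered identity."""
    _, score = closest_likeness(embedding)
    return score >= threshold

near_match = [0.88, 0.12, 0.41]    # close to public_figure_a
original_face = [0.5, 0.5, -0.7]   # far from both references

print(should_block(near_match))     # True
print(should_block(original_face))  # False
```

Unlike the keyword filter, this check runs on the output, so it does not matter how the prompt was phrased. The cost is an extra face-embedding pass on generated frames plus the need to maintain a registry of protected identities.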

3. What This Means for Content Creators and Filmmakers

For independent creators, this is both empowering and dangerous.

You now have tools capable of:

– Generating A-list-level performances

– Simulating studio-quality cinematography

– Creating fictional films starring real actors

But you also face real liability.

The Legal Risk for Creators

If you publish AI-generated content featuring recognizable celebrities without permission, you risk:

– Takedown notices

– Platform demonetization

– Civil lawsuits

– Brand blacklisting

Even if the model generated it automatically.

“AI did it” is not a legal defense.

Especially in commercial contexts.

The Industry Disruption

Filmmakers are watching this closely because Seedance 2.0 challenges three pillars of Hollywood economics:

1. Star power

2. Talent contracts

3. Licensing exclusivity

If audiences can generate their own “new Tom Cruise movie,” studios lose scarcity.

Scarcity is what creates box office leverage.

This could push studios toward:

– Blockchain-verified identity licensing

– AI clauses in all talent contracts

– Union-controlled digital replicas

– Official actor-specific generative models

We may soon see:

> “Licensed Digital Twin of Brad Pitt—Powered by Paramount AI.”

Instead of fighting AI, studios might productize it.

4. Technical Countermeasures on the Horizon

To mitigate abuse, future AI video systems may integrate:

1. Likeness Similarity Thresholding

A facial recognition layer that scores generated frames against known public figures and blocks high matches.

2. Differential Identity Training

Training models to generalize faces without overfitting to specific individuals.

3. Controlled Seed Environments

Limiting seed reproducibility to prevent iterative refinement toward a specific celebrity likeness.

4. Mandatory Watermarking

Cryptographic watermarking embedded at the latent level rather than pixel level.

The problem?

With open-weight models, communities using ComfyUI or custom diffusion pipelines can strip out many of these safeguards.

Which means regulation may focus less on model outputs—and more on distribution platforms.
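As a rough illustration of item 4, the sketch below adds a key-derived pattern to a latent vector and later detects it by correlation. This is a simplified, spread-spectrum-style toy. It is not cryptographically secure and does not represent any vendor's scheme. The key string, the strength of 0.2, and the latent size are all assumptions.

```python
import hashlib
import random

def key_pattern(key, size):
    """Derive a reproducible +1/-1 pattern from a secret key."""
    seed = int.from_bytes(hashlib.sha256(key.encode("utf-8")).digest(), "big")
    rng = random.Random(seed)
    return [rng.choice((-1.0, 1.0)) for _ in range(size)]

def embed_watermark(latent, key, strength=0.2):
    """Nudge every latent value slightly along the key's pattern."""
    pattern = key_pattern(key, len(latent))
    return [v + strength * p for v, p in zip(latent, pattern)]

def watermark_score(latent, key):
    """Average correlation between the latent and the key's pattern."""
    pattern = key_pattern(key, len(latent))
    return sum(v * p for v, p in zip(latent, pattern)) / len(latent)

def is_watermarked(latent, key, strength=0.2):
    """Detect the mark if the correlation exceeds half the embed strength."""
    return watermark_score(latent, key) > strength / 2

# Stand-in latent: 4096 Gaussian values (a real latent would come from the model).
rng = random.Random(0)
latent = [rng.gauss(0.0, 1.0) for _ in range(4096)]
marked = embed_watermark(latent, key="studio-secret")

print(is_watermarked(marked, "studio-secret"))  # True
print(is_watermarked(latent, "studio-secret"))  # False
print(is_watermarked(marked, "wrong-key"))      # False
```

The mark is spread thinly across thousands of values, so no single value reveals it, yet anyone holding the key can detect it. It also shows the weakness noted above: a pipeline that never calls `embed_watermark` produces unmarked output.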

5. The Bigger Shift: From Content Creation to Identity Engineering

Seedance 2.0 signals a transition.

AI video is no longer about generating landscapes or abstract B-roll.

It’s about generating people.

And when AI can convincingly simulate:

– Micro-expressions

– Emotional cadence

– Actor-specific movement patterns

– Character-specific voice tone

the line between performance and synthesis dissolves.

For creators, the lesson is clear:

– Avoid using real celebrity likenesses without permission.

– Focus on original characters.

– Build proprietary identity assets.

– Understand right-of-publicity laws in your region.

For filmmakers, the strategic question is bigger:

Do you fight synthetic identity replication?

Or do you license and control it before someone else does?

Final Takeaway

Hollywood isn’t worried because AI can generate video.

Hollywood is worried because Seedance 2.0 can generate actors.

The legal system hasn’t caught up.

The technical safeguards are incomplete.

The economic disruption is accelerating.

For content creators, this is a pivotal moment.

AI video tools are powerful enough to replace talent—but not powerful enough to replace contracts.

And until identity rights are clearly defined in the age of generative diffusion models, every celebrity prompt is a potential legal gamble.

Frequently Asked Questions

Q: Is it illegal to generate AI videos featuring real celebrities?

A: It can be. While copyright law protects specific works, the right of publicity protects a person’s likeness and identity. Commercial use of AI-generated celebrity videos without permission may expose creators to legal risk.

Q: How does Seedance 2.0 maintain consistent actor identity across frames?

A: It likely uses identity-conditioned latent embeddings combined with temporal diffusion models, cross-frame attention, and latent consistency techniques to preserve facial structure and motion coherence.

Q: Can filters prevent celebrity replication in AI video models?

A: Keyword filters alone are insufficient. Effective prevention would require likeness similarity detection, identity embedding suppression, and possibly adversarial training to reduce overfitting to specific public figures.

Q: What should content creators do to reduce legal risk?

A: Avoid using real celebrity likenesses without explicit permission, focus on original characters, document your workflow, and stay informed about right-of-publicity and AI legislation in your jurisdiction.