AI Video News: The Biggest Stories, Best Models & Tools Dominating 2026

From HappyHorse breaking every leaderboard to how AI now describes, searches, and generates video — your complete briefing on what’s happening in AI video right now.


The AI video news cycle in 2026 has been moving faster than most people can track. A model nobody had heard of topped every global benchmark in 48 hours. OpenAI quietly shut down Sora. ByteDance's Seedance hit a copyright wall. Google's Veo kept improving. And platforms that let AI describe, search, and find video content are changing how creators and businesses work with their video libraries. If you've been trying to keep up with what's actually happening (which models are winning, how AI video search really works, and what the best AI-generated video examples look like right now), this is your complete briefing. We'll also show you how VidAU's AI video platform helps creators and brands turn all of this into publishable video content without the complexity.

Breaking AI Video News: April 2026 — The Stories That Matter

April 2026 has been one of the most significant months in recent AI video news, with major model releases, market exits, and competitive reshuffling. Here are the four stories every creator and marketer needs to understand.

HappyHorse-1.0 Tops Every Global Leaderboard

The model appeared anonymously on April 7, climbed to #1 on the Artificial Analysis Video Arena leaderboard in both text-to-video (Elo 1,347) and image-to-video (Elo 1,406), and was claimed by Alibaba three days later. Its 74-point Elo margin over second-place Seedance 2.0 is the largest gap in the leaderboard's history.
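To put a 74-point gap in perspective, the standard Elo model converts a rating difference into an expected head-to-head preference rate. The sketch below assumes the Arena uses the conventional logistic Elo formula with a 400-point scale; the exact scoring method isn't stated here, so treat the result as an approximation.

```python
def elo_expected_score(rating_a: float, rating_b: float) -> float:
    """Expected probability that model A is preferred over model B in one blind vote,
    assuming the conventional logistic Elo formula with a 400-point scale."""
    return 1.0 / (1.0 + 10 ** ((rating_b - rating_a) / 400.0))


# A 74-point lead, as reported for HappyHorse-1.0 over Seedance 2.0.
leader_win_rate = elo_expected_score(74, 0)
print(f"Expected preference rate for the leader: {leader_win_rate:.1%}")  # 60.5%
```

Under that assumption, the leader would be expected to win roughly three of every five blind matchups: a clear edge, though far from a clean sweep.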

OpenAI Shuts Down Sora

OpenAI discontinued Sora on March 24, 2026. The platform reportedly earned $2.1 million in lifetime revenue against inference costs of $15 million per day. Sora 2 had launched to Plus and Pro subscribers in January 2026, but OpenAI never resolved its unit economics. The shutdown has accelerated consolidation around Kling, Veo, Runway, and Seedance.

Seedance Pauses Global Rollout

ByteDance’s Seedance 2.0 — which briefly held the #1 leaderboard position — paused its global rollout after disputes with Hollywood studios over training data and copyright. The pause has created a window for HappyHorse and Google Veo to capture users who had been building workflows on Seedance.

AI Video Adoption Hits Inflection Point

According to the 2026 Stanford AI Index, AI is being adopted faster than the personal computer or the internet were. In AI video specifically, 78% of marketing teams now use AI-generated video in at least one campaign per quarter, up from 30% in early 2024, and enterprise spending on AI video platforms grew 127% year over year in 2025.

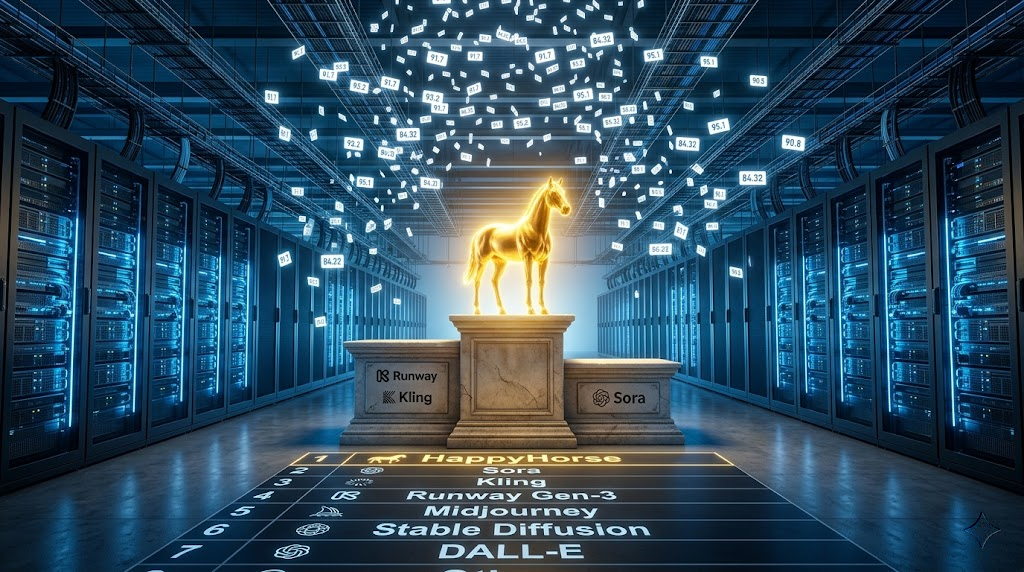

Best AI This Month: The Models Worth Knowing in April 2026

This month's conversation about the best AI video models is dominated by six models that have carved out genuinely distinct positions. Understanding what each one does well, and where it falls short, is essential for anyone making production decisions in 2026.

| Model | Leaderboard Rank | Key Strength | Best For | Status |
|---|---|---|---|---|
| HappyHorse-1.0 | #1 T2V & I2V | Motion consistency, joint audio-video | Cinematic quality, character animation | API coming soon |
| Seedance 2.0 | #2 T2V | Native 2K, unified audio-video | High-res output, audio-first content | Paused globally |
| Google Veo 3.1 | Top 5 | Audio-native pipeline, cinematic sound | Scene-level audio design, brand video | Live (paid tiers) |
| Kling 3.0 | Top 5 | Physics engine, 3-min clips, $0.50/clip | Product demos, long-form, high volume | Live (API available) |
| Runway Gen-4.5 | Top 10 | Motion brushes, per-pixel control | Creative control, bespoke animation | Live (subscription) |
| Wan 2.6 | Open-source | Apache 2.0 license, free, fast | Self-hosted, high-volume social clips | Live (free) |

The practical takeaway from this leaderboard: HappyHorse-1.0 leads on raw quality in blind human tests but isn’t yet publicly accessible via API. For creators and brands who need to produce content today, Kling 3.0 and Google Veo 3.1 represent the best combination of availability, quality, and cost. Wan 2.6’s open-source Apache 2.0 license makes it the obvious choice for developers who want to self-host or integrate AI video generation into their own products without per-clip fees.

HappyHorse’s rise is significant beyond the leaderboard score. It entered the arena completely anonymously, climbed to #1 through nothing but genuine blind user preference, then was revealed as Alibaba’s work. This “stealth benchmark” strategy — drop the model quietly, let it win on merit, then claim it — is a new pattern in AI video news that signals the field has matured enough for quality to speak for itself without PR campaigns. Expect other labs to follow this playbook.

How AI Describes Video — And Why It’s Changing Everything

One of the most underreported stories in creative AI news is how dramatically AI has improved at analyzing and describing video, not just generating it. AI video description refers to the ability of multimodal AI models to watch a video and produce accurate, detailed written descriptions of what's happening: who is in the scene, what actions take place, what mood is established, what text appears on screen, and what the overall narrative arc is. This capability is reshaping how creators, brands, and enterprises manage their video libraries.

How AI Video Description Actually Works

Modern AI video description systems operate as multimodal models that process video frames, audio tracks, on-screen text, speech transcriptions, and temporal relationships all simultaneously — rather than analyzing each element separately and hoping the outputs align. The result is descriptions that capture not just what’s visible in individual frames but how the content flows: when the scene changes, how the tone shifts, what the key moments are, and what a viewer would take away from the full piece.

Tools like Descript let users upload a video and receive automated captions, scene summaries, and topic descriptions in seconds, work that previously required a human editor to watch the entire clip and log it manually. For enterprise teams managing hundreds or thousands of video assets, this automation turns hours of manual cataloging into minutes of processing.

How to Describe a Video Without Viewing It

A question that comes up frequently in enterprise video management and content-repurposing workflows is how to describe a video without viewing it. In 2026, the practical answer combines three AI-powered approaches:

- Automated transcript analysis: AI speech-to-text tools generate a full transcript of all spoken content in the video. An LLM then summarizes the transcript into a description of the video’s topic, key claims, and structure — all without anyone needing to watch the footage. This works well for talking-head content, explainers, interviews, and any video where speech carries most of the meaning.

- Visual keyframe extraction: AI tools sample frames at regular intervals — typically one frame every few seconds — and use vision-language models to describe what’s visible in each. The resulting descriptions are assembled into a scene-by-scene summary that tells you what happens visually throughout the clip.

- Metadata and context inference: For videos where the file metadata, upload context, or surrounding page content is rich, AI can construct reasonable descriptions from contextual signals alone — title, tags, publication date, associated page copy — without analyzing the video itself at all. This is less accurate but useful for very large archives where full analysis isn’t feasible.
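The keyframe approach can be sketched in a few lines of Python. This is a minimal illustration, not any particular product's pipeline: `describe_frame` is a hypothetical stand-in for a call to a vision-language model, the 3-second interval is an assumed default, and consecutive frames with identical descriptions are merged into one scene.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class Scene:
    start_s: float
    end_s: float
    description: str


def sample_timestamps(duration_s: float, interval_s: float = 3.0) -> List[float]:
    """Timestamps (in seconds) for one sampled frame every `interval_s` seconds."""
    if duration_s <= 0 or interval_s <= 0:
        raise ValueError("duration_s and interval_s must be positive")
    stamps: List[float] = []
    i = 0
    while i * interval_s < duration_s:
        stamps.append(i * interval_s)
        i += 1
    return stamps


def build_scene_summary(
    duration_s: float,
    describe_frame: Callable[[float], str],  # hypothetical vision-language model call
    interval_s: float = 3.0,
) -> List[Scene]:
    """Describe sampled frames and merge consecutive identical descriptions into scenes."""
    scenes: List[Scene] = []
    for t in sample_timestamps(duration_s, interval_s):
        text = describe_frame(t)
        end = min(t + interval_s, duration_s)
        if scenes and scenes[-1].description == text:
            scenes[-1].end_s = end  # same content as the previous frame: extend the scene
        else:
            scenes.append(Scene(t, end, text))
    return scenes


# Usage with a fake describer standing in for a real model.
def fake_describer(t: float) -> str:
    return "product on kitchen counter" if t < 6 else "person unboxing product"


for scene in build_scene_summary(10, fake_describer):
    print(f"{scene.start_s:.0f}-{scene.end_s:.0f}s: {scene.description}")
# 0-6s: product on kitchen counter
# 6-10s: person unboxing product
```

Real model output varies slightly from frame to frame, so a production system would compare descriptions by semantic similarity rather than exact string match before merging them into scenes.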

One of the highest-ROI applications of AI video description for marketers is finding repurposable clips in existing video libraries. Upload your archive to an AI video description tool, let it auto-generate descriptions and scene-level summaries, then search the results for specific moments — a product shot, a customer testimonial line, a specific location — that you can repurpose into new ads or social content without reshooting. VidAU’s VidRemix feature is purpose-built for exactly this kind of content repurposing workflow.

AI Video Search and AI Video Finder: How to Find Any Video With AI

The AI video search category has matured significantly in 2026. Traditional video search relied entirely on keyword matching against manually entered titles, tags, and descriptions — meaning if a video wasn’t correctly labeled at upload, it was effectively invisible to search. AI video finder tools break this limitation by analyzing the actual content of videos and making them searchable by what’s in them, not just what they’re labeled as.

Modern AI video search platforms use multimodal embedding models that convert each short segment of a video into a dense vector (an embedding) capturing its visual content, audio, speech, on-screen text, and their temporal relationships. The search query is embedded into the same vector space, and the system returns the segments whose vectors are closest to it. So when a user searches for “red dress walking down stairs” or “product unboxing in kitchen,” the system finds videos where those things actually happen in the footage, regardless of whether those words appear in the title or tags.
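The retrieval step can be illustrated with cosine similarity over a tiny in-memory index. The three-dimensional vectors and segment IDs below are made up for illustration; a real system would obtain them, and the query vector, from a multimodal embedding model with far more dimensions, and would typically use an approximate nearest-neighbor index rather than scoring every segment.

```python
import math
from typing import Dict, List, Sequence, Tuple


def cosine(a: Sequence[float], b: Sequence[float]) -> float:
    """Cosine similarity between two vectors (0.0 if either has zero length)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def search(index: Dict[str, List[float]], query_vec: Sequence[float], top_k: int = 2) -> List[Tuple[str, float]]:
    """Rank every indexed segment by similarity to the query and keep the best `top_k`."""
    scored = [(seg_id, cosine(vec, query_vec)) for seg_id, vec in index.items()]
    scored.sort(key=lambda pair: pair[1], reverse=True)
    return scored[:top_k]


# Hypothetical segment embeddings, keyed by "video@timestamp".
index = {
    "ad_01@00:04": [0.9, 0.1, 0.0],  # imagined as: unboxing in a kitchen
    "ad_02@00:12": [0.1, 0.9, 0.2],  # imagined as: person walking down stairs
    "ad_03@00:30": [0.8, 0.2, 0.1],  # imagined as: product on a counter
}
query = [1.0, 0.0, 0.0]  # stand-in for the embedding of "product unboxing in kitchen"

for seg_id, score in search(index, query):
    print(f"{seg_id}: {score:.3f}")
```

Because matching happens in vector space, the two kitchen-related segments rank highest even though no title or tag text is consulted.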

For content creators, this means your entire video library becomes searchable at the scene level. For advertisers, it means being able to search competitors’ ad libraries for specific creative patterns — a particular hook style, a specific product angle, a certain type of testimonial format — to inform your own creative strategy. For brands managing UGC archives, it means being able to find every clip in which your logo appears, your product is held correctly, or your brand colors are on screen, across thousands of hours of content.

AI That Watches YouTube Videos — What It Can and Can’t Do

A growing category of tools focuses specifically on AI that watches YouTube videos and extracts structured information from them. These tools range from simple summarizers — which generate a written overview of a YouTube video’s content from its transcript — to sophisticated research assistants that can watch an entire playlist, cross-reference claims across multiple videos, identify contradictions, and produce structured reports from the synthesized content.

The most capable tools in this category as of April 2026 combine speech-to-text transcription, visual frame analysis, and LLM reasoning to answer questions about video content at a granular level. You can ask “what claims does this video make about product X?” or “what are the three strongest objections raised in this debate?” and receive answers that cite specific timestamps, which makes them easy to check against the source. For competitive research, this is transformative: you can analyze a competitor's entire YouTube channel in the time it would previously have taken to watch one video.
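Answers that cite timestamps depend on the transcript keeping the start time of each spoken segment. The sketch below shows only that retrieval-and-citation step, with a simple case-insensitive keyword match standing in for the LLM reasoning; the `Segment` structure and the sample transcript are hypothetical.

```python
from typing import List, NamedTuple


class Segment(NamedTuple):
    start_s: float  # segment start time in seconds
    text: str       # transcribed speech for this segment


def format_timestamp(seconds: float) -> str:
    """Format seconds as M:SS, or H:MM:SS for videos longer than an hour."""
    minutes, secs = divmod(int(seconds), 60)
    hours, minutes = divmod(minutes, 60)
    return f"{hours}:{minutes:02d}:{secs:02d}" if hours else f"{minutes}:{secs:02d}"


def cite_mentions(segments: List[Segment], term: str) -> List[str]:
    """Return every segment mentioning `term`, prefixed with its timestamp."""
    needle = term.lower()
    return [
        f"[{format_timestamp(seg.start_s)}] {seg.text}"
        for seg in segments
        if needle in seg.text.lower()
    ]


transcript = [
    Segment(12, "Product X charges fully in ten minutes."),
    Segment(95, "Battery life is about average for this class."),
    Segment(3725, "Honestly, Product X is overpriced."),
]
for line in cite_mentions(transcript, "product x"):
    print(line)
# [0:12] Product X charges fully in ten minutes.
# [1:02:05] Honestly, Product X is overpriced.
```

Keeping the timestamp attached to every retrieved passage is what lets a reader jump straight to the cited moment and verify it, which matters given the accuracy limits described below.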

There are important limitations worth understanding. AI video analysis tools remain less accurate on content that relies heavily on visual context not captured in the transcript — physical demonstrations, screen recordings, or visual gags where the meaning is entirely in what you see rather than what’s said. They also sometimes misattribute claims across multiple speakers in multi-person discussions. For research purposes, AI video analysis should be treated as a starting point for investigation rather than a definitive source.

AI Generated Video Examples: What the Best Looks Like in 2026

Understanding what high-quality AI generated video actually looks like in 2026 — as opposed to what it looked like in 2024 — is important context for anyone making creative or production decisions. The gap between the two eras is more significant than most people outside the field appreciate.

What Distinguishes 2026’s Best AI Generated Video Samples

The most striking characteristic of top-tier AI generated video samples in 2026 is physical realism. In 2024, AI video consistently failed on complex physics — water behaved incorrectly, cloth didn’t fold naturally, fire looked rendered rather than real, and human hands were a persistent problem. By April 2026, the leading models handle all of these with enough fidelity that viewers struggle to identify the tell-tale signs. HappyHorse-1.0’s benchmark scores reflect this specifically — human testers in blind comparisons consistently preferred its water surfaces, fabric behavior, and smoke simulations over every competing model.

The second major leap is audio-visual synchronization. In 2024 and most of 2025, AI video either had no audio or had audio generated separately and dubbed on top — which created an uncanny valley effect where dialogue never quite felt naturally present in the scene. Models like HappyHorse-1.0, Veo 3.1, and Seedance 2.0 now generate audio and video simultaneously in a single inference pass, meaning ambient sound, dialogue reverb, and Foley effects are physically calibrated to the scene rather than post-processed onto it.

The third is character consistency across shots. Creating a character that looks the same from different angles, in different lighting, and across multiple cuts — without the face morphing or the clothing changing — was essentially impossible in 2024. In 2026, multi-shot narrative prompting in HappyHorse-1.0 and Runway Gen-4.5 allows creators to maintain character identity across an entire short film’s worth of scenes.

As AI-generated video becomes indistinguishable from real footage in many contexts, platform disclosure policies are tightening. Meta, TikTok, and YouTube all now require creators to disclose realistic AI-generated or AI-altered video content, and several platforms have implemented automated AI content detection. For brand and advertising use cases, always review platform-specific policies before publishing AI-generated video, and when in doubt, disclose. It protects your account and builds audience trust.

How VidAU Puts the Best AI Video News to Work for Creators and Brands

Following AI video news today is one thing. Actually using the best models to create content that drives real business results is another. The challenge most creators and brands face isn’t awareness of what’s possible — it’s access. The top AI video models are fragmented across different platforms, have different interfaces, different pricing structures, different input requirements, and different strengths. Building a production workflow that uses the right model for each type of content requires navigating all of that complexity manually.

VidAU solves this by consolidating the world’s top AI video models — including Seedance 1.0, Wan 2.5, Kling 2.5, Minimax Hailuo 2.3, and Nano Banana — into a single platform with a unified interface designed specifically for product video ads, UGC-style content, and brand video production. You get the power of the best AI models without needing to manage separate accounts, credits, and workflows for each one.

URL to Video Ads

Paste any product page URL and VidAU’s AI automatically pulls the product images, title, and description — then generates a polished short-form video ad ready for TikTok, Instagram Reels, or YouTube. The fastest path from product listing to live ad. Try URL to Video.

Product Sample to Video

Upload your own product photos and VidAU converts them into dynamic short-form video ads with motion, transitions, captions, and AI voiceover. No filming required — just great product photography. See Product Sample to Video.

UGC Avatars

Create authentic-looking creator-style spokesperson videos featuring AI avatars that present your product, deliver your message, and drive a call to action — at scale, in any language, without hiring talent. Explore UGC Avatars.

VidRemix

Repurpose your best-performing video content across every platform format instantly. Convert landscape into vertical, square into widescreen, long-form into short clips — without re-editing from scratch. Try VidRemix.

Video Enhancer

Upscale AI-generated or smartphone-recorded video to professional quality before publishing. Removes artifacts, sharpens motion, and boosts resolution — so every video looks polished regardless of how it was created. Use Video Enhancer.

Text to Video

Describe the video you want in plain text and VidAU generates it — scenes, transitions, voiceover, captions, and all. Start from a concept rather than existing footage or product images. Explore Text to Video.

VidAU’s AI Models: What’s Under the Hood

VidAU’s platform is powered by a curated selection of the most capable AI video models available, including Seedance 1.0, Wan 2.5, Kling 2.5, Minimax Hailuo 2.3, and Nano Banana. Rather than requiring you to understand the technical tradeoffs between each model, VidAU’s interface guides you toward the right model for your specific use case — product ads, UGC style, cinematic quality, or high-volume batch production — and handles the generation workflow automatically. The result is professional video output without the model expertise or infrastructure management that direct API access requires.

Creative AI News: What the Trends Mean for Video Marketers

Beyond the model rankings, the creative AI news that matters most for video marketers and content creators in 2026 is about workflow transformation, not just tool capabilities. The most significant shift isn't that AI can generate better video; it's that the entire creative production loop has accelerated to the point where the bottleneck is no longer production but creative decision-making.

Marketing teams that previously spent two weeks on a single video campaign — brief, script, shoot, edit, review, publish — are now generating 10 to 20 complete video variations in a single afternoon, A/B testing them against real audiences within 48 hours, and scaling the winner the same week. The 2026 AI Index from Stanford found that people are adopting AI faster than they adopted the personal computer or the internet. In video marketing specifically, this acceleration means that teams who haven’t integrated AI video generation into their workflows by mid-2026 are operating at a structural competitive disadvantage — not because their content is lower quality, but because their output volume and iteration speed can’t match teams who have.

The other significant creative AI news is the convergence of AI video generation with AI video analysis. The ability to generate video content and simultaneously analyze competitor video content — using AI video search to identify what’s working in your industry, then using AI generation to produce content that incorporates those learnings — creates a feedback loop that manual creative teams can’t replicate at the same speed or cost.

Frequently Asked Questions About AI Video in 2026

What is the best AI video model right now in April 2026?

Based on blind user voting on the Artificial Analysis Video Arena, HappyHorse-1.0 (Alibaba) currently leads both text-to-video and image-to-video leaderboards with Elo scores of 1,347 and 1,406 respectively — the largest margins ever recorded. However, HappyHorse’s API is not yet publicly available. For production use today, Kling 3.0 and Google Veo 3.1 offer the best combination of quality, availability, and pricing. VidAU’s platform gives you access to multiple top models without managing separate accounts for each.

How does AI describe video content automatically?

AI video description uses multimodal models that simultaneously process visual frames, audio tracks, on-screen text, and speech transcriptions from a video. The model produces written descriptions of what’s happening in each scene, the overall narrative arc, key visual elements, and any spoken content — without a human needing to watch the video. Tools like Descript offer this capability directly. The technology is particularly useful for cataloging large video archives, generating metadata for SEO, and creating accessibility descriptions for video content.

How can I describe a video without viewing it?

There are three main AI-powered approaches. First, automated transcript analysis — AI speech-to-text generates a full transcript, which an LLM then summarizes into a video description. This works well for any content where speech carries the primary meaning. Second, visual keyframe extraction — AI samples frames throughout the video and uses vision-language models to describe each one, assembling a scene-by-scene summary. Third, contextual metadata inference — for videos with rich surrounding context (title, tags, page copy), AI can infer a description from that context alone. Most enterprise video platforms now offer some combination of these approaches natively.

What happened to Sora in 2026?

OpenAI discontinued Sora on March 24, 2026. The platform had launched Sora 2 to Plus and Pro subscribers in January 2026, but the unit economics were unsustainable — reportedly $15 million per day in inference costs against $2.1 million in total lifetime revenue. OpenAI refocused resources on its core LLM and multimodal products. The shutdown accelerated consolidation in the AI video market around Kling, Google Veo, Runway, and HappyHorse as the primary production-grade alternatives.

How does AI video search work?

AI video search uses multimodal embedding models that convert every moment in a video into a dense vector representation — capturing visual content, audio, speech, and on-screen text simultaneously. When you search for something (“product unboxing,” “red dress on stairs,” “smiling customer”), the system finds videos where those elements actually appear in the footage — regardless of whether those words appear in the title or tags. This makes entire video libraries searchable at the scene level by content, not just by manually applied labels.

What can AI do when it watches YouTube videos?

AI tools that analyze YouTube content can generate full transcripts, summarize video content at any level of detail, answer specific questions about claims made in the video, identify key timestamps for specific topics, cross-reference information across multiple videos, and extract structured data (product mentions, speaker claims, key arguments) from video content. The most capable tools combine speech-to-text, visual frame analysis, and LLM reasoning. They work best on content where speech carries primary meaning and are less accurate for content that relies heavily on visual demonstrations without verbal explanation.

How is AI-generated video quality different in 2026 vs 2024?

The difference is substantial across three key dimensions. Physical realism: in 2024, AI video consistently failed on water, cloth, fire, and hands — in 2026, top models handle all of these with convincing fidelity. Audio-visual synchronization: 2024 models either had no audio or dubbed it on separately; in 2026, HappyHorse, Veo, and Seedance generate audio and video simultaneously in one inference pass, creating natural in-scene sound. Character consistency: maintaining a character’s appearance across multiple shots was essentially impossible in 2024; in 2026, multi-shot narrative prompting allows coherent character identity across full scenes.

How can VidAU help me use AI video for my business?

VidAU consolidates the world’s top AI video models — including Seedance, Wan, Kling, Hailuo, and Nano Banana — into a single platform designed for product video ads, UGC-style content, and brand video production. Key tools include URL to Video Ads (paste a product URL, get a video ad), Product Sample to Video (upload photos, get a video), UGC Avatars (AI spokesperson videos at scale), VidRemix (repurpose content across platforms), and Text to Video (generate from a prompt). You can start free at vidau.ai.

What AI Video News Tells Us About Where This Is All Going

The AI video news of April 2026 tells a clear story: generative AI video has crossed from experimental to production-grade in under two years, and the pace of change is still accelerating. Models that lead the benchmarks in April may be in fifth place by July. Platforms that seemed dominant, such as Sora, can exit the market within months when the economics don't work. And previously unknown models can appear from nowhere, win blind comparisons on merit, and reshape the competitive landscape before anyone has written a press release.

For creators and brands, the practical conclusion is simple: the competitive advantage no longer belongs to whoever has access to the best model. It belongs to whoever can move fastest — generate more variations, test more creative angles, iterate more quickly, and publish more consistently. The models are the raw material. The workflow is the competitive moat. And tools like VidAU — which put multiple top models in one place with production-ready workflows for video ads, UGC content, and brand video — are what let teams operate at the speed this market now requires.

Stay current with the latest developments at the VidAU blog, explore the full AI model lineup at VidAU AI Video, and check VidAU’s pricing plans to find the tier that matches your production volume.