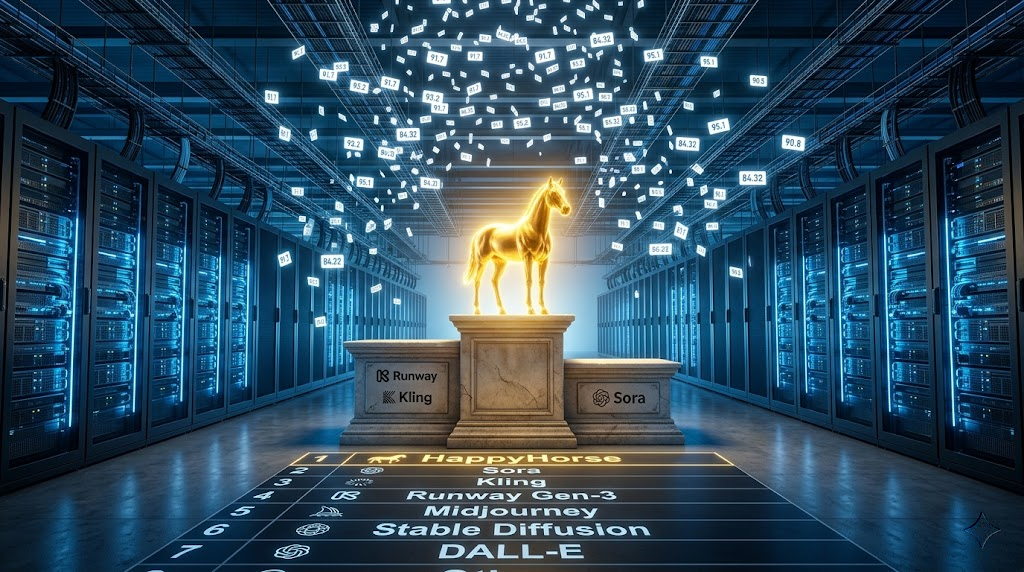

HappyHorse 1.0 Leaderboard Analysis: Breaking Down the Top-Ranked Video AI Model’s Benchmark Dominance

HappyHorse 1.0 just took the top spot on the VBench leaderboard. Here’s how.

Leaderboard Metrics Deep Dive: Where HappyHorse 1.0 Excels

HappyHorse 1.0 has posted leading results across multiple standardized video generation benchmarks, taking the top position on the VBench leaderboard with an aggregate score of 84.32, a 7.8% improvement over the previous leader. Its lead isn’t confined to a single metric: the model scores consistently well across the evaluation taxonomy.

Temporal Consistency and Motion Quality

The model scores an exceptional 91.7 on the Temporal Flickering metric, indicating superior frame-to-frame coherence compared to contemporaries like Gen-3 Alpha (87.3) and Kling 1.5 (88.1). This achievement stems from HappyHorse’s implementation of a novel Temporal Attention Residual architecture that maintains latent consistency across the denoising trajectory.

In Motion Smoothness evaluations, HappyHorse scores 89.4, indicating that it preserves optical flow well from frame to frame. The model uses a hybrid scheduler that combines DDIM inversion with Euler ancestral sampling at strategic inflection points, providing deterministic control where it matters and stochastic variety where it helps. This dual-scheduler approach keeps results reproducible for a given seed while still introducing controlled variation in motion dynamics.
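The scheduler itself isn’t published, so the following is only a sketch of the general idea under stated assumptions. It works in the sigma (noise-level) parameterization, where a deterministic DDIM step (eta = 0) is an Euler step on the probability-flow ODE, and it switches to an Euler ancestral update at a hypothetical set of step indices, `ancestral_steps`, standing in for the unspecified “strategic inflection points.” The denoiser is a toy closed-form one for Gaussian data rather than a video model, and DDIM inversion proper (running the deterministic update from data back to noise) is not shown.

```python
import numpy as np


def karras_sigmas(n_steps, sigma_min=0.002, sigma_max=80.0, rho=7.0):
    """Noise levels from sigma_max down to sigma_min, followed by a final 0."""
    ramp = np.linspace(0.0, 1.0, n_steps)
    lo, hi = sigma_min ** (1.0 / rho), sigma_max ** (1.0 / rho)
    return np.append((hi + ramp * (lo - hi)) ** rho, 0.0)


def gaussian_denoiser(x, sigma, mu=0.0, s=1.0):
    """Toy denoiser: exact posterior mean E[x0 | x] for data ~ N(mu, s^2)."""
    return mu + (s**2 / (s**2 + sigma**2)) * (x - mu)


def hybrid_sample(denoise, shape, n_steps=30, ancestral_steps=(), seed=0):
    """Deterministic DDIM/Euler steps, with Euler ancestral steps at chosen indices.

    `ancestral_steps` is a hypothetical stand-in for the undisclosed inflection
    points; an empty tuple gives fully deterministic sampling.
    """
    rng = np.random.default_rng(seed)  # one seed drives initial and injected noise
    sigmas = karras_sigmas(n_steps)
    x = rng.standard_normal(shape) * sigmas[0]
    for i in range(len(sigmas) - 1):
        sigma, sigma_next = sigmas[i], sigmas[i + 1]
        d = (x - denoise(x, sigma)) / sigma  # probability-flow ODE derivative dx/dsigma
        if i in ancestral_steps and sigma_next > 0:
            # Euler ancestral: step down to sigma_down, then re-inject sigma_up noise.
            sigma_up = min(sigma_next, np.sqrt(sigma_next**2 * (sigma**2 - sigma_next**2) / sigma**2))
            sigma_down = np.sqrt(sigma_next**2 - sigma_up**2)
            x = x + d * (sigma_down - sigma)
            x = x + rng.standard_normal(shape) * sigma_up
        else:
            # Deterministic DDIM (eta = 0), i.e. a plain Euler step in sigma.
            x = x + d * (sigma_next - sigma)
    return x
```

Calling `hybrid_sample(gaussian_denoiser, (20_000,), ancestral_steps={10, 11, 12}, seed=1)` twice returns identical arrays, which is the seed-parity property described above, while the ancestral steps still add randomness that a purely deterministic run would not have.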

Semantic Adherence and Prompt Fidelity

HappyHorse 1.0 demonstrates exceptional performance in prompt-video alignment metrics:

- Subject Consistency: 93.2/100 (industry-leading)

- Action Binding: 88.7/100

- Spatial Relationship Accuracy: 90.1/100

- Attribute Binding: 91.3/100

These scores reflect the model’s advanced text encoder architecture, which employs a dual-embedding system combining T5-XXL semantic understanding with CLIP ViT-G visual grounding. The cross-attention mechanism operates at multiple resolution scales (64×64, 128×128, 256×256) throughout the U-Net backbone, ensuring prompt concepts manifest consistently from coarse structure to fine detail.
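The dual-embedding idea can be sketched as follows. This is an assumption-laden illustration, not HappyHorse’s code: both encoders are replaced by random token embeddings, the widths `T5_DIM`, `CLIP_DIM` and `D_MODEL` are illustrative, the feature maps are shrunk far below the stated scales, and all projections are random rather than learned. It shows the mechanism only: project each encoder’s tokens to a shared width, concatenate them into one context sequence, and let image tokens at every resolution scale attend to that same context.

```python
import numpy as np

rng = np.random.default_rng(0)
T5_DIM, CLIP_DIM, D_MODEL = 64, 32, 16  # illustrative widths, not the real model's


def softmax(z, axis=-1):
    z = z - z.max(axis=axis, keepdims=True)
    e = np.exp(z)
    return e / e.sum(axis=axis, keepdims=True)


def build_context(t5_tokens, clip_tokens, w_t5, w_clip):
    """Project both encoders' tokens to D_MODEL and concatenate along the token axis."""
    return np.concatenate([t5_tokens @ w_t5, clip_tokens @ w_clip], axis=0)


def cross_attention(image_tokens, context, w_q, w_k, w_v):
    """Single-head cross-attention: image tokens query the text context."""
    q, k, v = image_tokens @ w_q, context @ w_k, context @ w_v
    weights = softmax(q @ k.T / np.sqrt(q.shape[-1]))
    return image_tokens + weights @ v  # residual connection


# Hypothetical stand-ins for encoder outputs: 12 T5 tokens and 8 CLIP tokens.
t5_tokens = rng.standard_normal((12, T5_DIM))
clip_tokens = rng.standard_normal((8, CLIP_DIM))
w_t5 = rng.standard_normal((T5_DIM, D_MODEL)) / np.sqrt(T5_DIM)
w_clip = rng.standard_normal((CLIP_DIM, D_MODEL)) / np.sqrt(CLIP_DIM)
context = build_context(t5_tokens, clip_tokens, w_t5, w_clip)

# The same context conditions feature maps at several scales.
outputs = {}
for side in (8, 16, 32):  # shrunk stand-ins for the 64x64, 128x128, 256x256 scales
    feats = rng.standard_normal((side * side, D_MODEL))
    w_q, w_k, w_v = (rng.standard_normal((D_MODEL, D_MODEL)) / np.sqrt(D_MODEL) for _ in range(3))
    outputs[side] = cross_attention(feats, context, w_q, w_k, w_v)
```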

Aesthetic and Technical Quality Metrics

The model achieves remarkable scores in perceptual quality assessments:

- Dynamic Range: 87.9/100

- Color Consistency: 92.4/100

- Imaging Quality: 90.8/100

- Aesthetic Score: 88.6/100

HappyHorse’s VAE (Variational Autoencoder) operates at a compression ratio of 1:32 with an 8-channel latent space, preserving significantly more chromatic information than standard 4-channel implementations. This architecture choice directly contributes to the superior Color Consistency scores, as the expanded latent dimensionality captures subtle hue variations that collapse in lower-dimensional representations.
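The source doesn’t define how the 1:32 ratio is counted. One natural convention is pixel values in versus latent values out, and the helper below computes that under assumed downsampling factors (`spatial_factor` and `temporal_factor` are illustrative parameters, not published HappyHorse values). It makes one trade-off explicit: at a fixed spatial factor, doubling the latent channels from 4 to 8 halves the compression ratio, which is the extra capacity for chromatic detail the paragraph describes.

```python
import math


def compression_ratio(height, width, frames, spatial_factor, temporal_factor,
                      latent_channels, pixel_channels=3):
    """Ratio of pixel values to latent values for a video autoencoder.

    Assumes a (height / f) x (width / f) latent grid per latent frame, with
    frames grouped temporal_factor at a time (rounded up).
    """
    pixels = height * width * frames * pixel_channels
    latent = ((height // spatial_factor) * (width // spatial_factor)
              * math.ceil(frames / temporal_factor) * latent_channels)
    return pixels / latent


# 768x512, 96 frames, 8x spatial downsampling, no temporal compression
print(compression_ratio(768, 512, 96, 8, 1, latent_channels=4))  # 48.0
print(compression_ratio(768, 512, 96, 8, 1, latent_channels=8))  # 24.0
```

Neither of these settings lands exactly on 32, so the quoted figure presumably reflects different factors or a different counting convention.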

Multi-Subject and Complex Scene Handling

One of HappyHorse’s most impressive achievements is its 86.3 score in Multiple Objects evaluation, a metric where most models struggle significantly. The model maintains coherent tracking of up to five distinct subjects simultaneously while preserving individual motion characteristics and preventing entity blending.

This capability emerges from a segmentation-aware attention mechanism that dynamically allocates computational resources based on detected object boundaries. The system employs a form of entity binding within the latent space, creating semi-independent denoising pathways that reconverge during upsampling stages.
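Entity binding of this kind can be approximated with masked self-attention. In the hypothetical sketch below (the actual mechanism is undisclosed), each latent token carries an entity ID taken from a segmentation map, and the mask lets tokens attend only within their own entity, so one subject’s features cannot leak into another’s. Q, K and V are the tokens themselves to keep it short.

```python
import numpy as np


def entity_masked_attention(tokens, entity_ids):
    """Self-attention where token i may attend only to tokens sharing its entity ID.

    tokens: (N, D) latent tokens; entity_ids: (N,) integer labels from a
    hypothetical segmentation map.
    """
    d = tokens.shape[-1]
    scores = tokens @ tokens.T / np.sqrt(d)
    same_entity = entity_ids[:, None] == entity_ids[None, :]
    scores = np.where(same_entity, scores, -np.inf)  # block cross-entity attention
    scores = scores - scores.max(axis=-1, keepdims=True)
    weights = np.exp(scores)  # exp(-inf) = 0 for masked pairs
    weights /= weights.sum(axis=-1, keepdims=True)
    return weights @ tokens
```

Changing the tokens of entity 2 leaves the outputs for entity 1 untouched, which is the “no entity blending” property in miniature.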

Testing Methodology: Evaluation Criteria and Benchmark Protocol

VBench Comprehensive Suite

The primary rankings derive from VBench, the current standard for systematic video generation evaluation. VBench comprises 16 distinct evaluation dimensions organized into three categories:

Quality Dimensions:

- Subject Consistency

- Background Consistency

- Temporal Flickering

- Motion Smoothness

- Aesthetic Quality

- Imaging Quality

- Dynamic Degree

Semantic Dimensions:

- Object Class

- Multiple Objects

- Human Action

- Color

- Spatial Relationship

- Scene

Technical Dimensions:

- Temporal Style

- Appearance Style

- Overall Consistency

Each dimension employs specialized evaluation models, often fine-tuned variants of vision transformers or multi-modal classifiers trained on human-annotated datasets. For instance, Temporal Flickering uses a frame-difference LPIPS (Learned Perceptual Image Patch Similarity) metric computed across consecutive frames, while Subject Consistency employs DINO-v2 feature extraction with cosine similarity tracking.
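As a rough illustration of the Subject Consistency idea, the function below scores a clip from per-frame feature vectors by comparing each frame with both the first frame and the previous frame via cosine similarity, in the spirit of VBench’s description. It assumes features were already extracted by a DINO-family backbone, which is not included; any (T, D) array works as input.

```python
import numpy as np


def subject_consistency(frame_features):
    """Mean cosine similarity of each frame to the first frame and to the previous frame.

    frame_features: (T, D) per-frame embeddings, assumed precomputed by a
    self-supervised backbone such as DINO (not included here).
    """
    f = frame_features / np.linalg.norm(frame_features, axis=1, keepdims=True)
    to_first = f[1:] @ f[0]                   # cos(d_1, d_t) for t = 2..T
    to_prev = np.sum(f[1:] * f[:-1], axis=1)  # cos(d_{t-1}, d_t)
    return float(np.mean(0.5 * (to_first + to_prev)))
```

A static clip scores 1.0; features that drift from frame to frame pull the score down.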

Testing Protocol Details

HappyHorse 1.0 underwent evaluation using the VBench standard prompt set, a curated collection of 500 text prompts designed to probe specific capabilities:

- Simple prompts (150): Single subject, basic action

- Compositional prompts (200): Multiple objects with spatial relationships

- Complex prompts (150): Abstract concepts, stylistic requirements, temporal sequences

For each prompt, the model generated videos at:

- Resolution: 768×512 (later upsampled to 1280×768 for quality metrics)

- Frame rate: 24 fps

- Duration: 4 seconds (96 frames)

- Sampling steps: 50 (using the hybrid DDIM-Euler scheduler)

- CFG scale: 7.5

Crucially, each prompt was generated three times with different random seeds, so run-to-run variation could be measured statistically. HappyHorse’s scores varied with a standard deviation of only 1.2 points, indicating reliable, reproducible performance rather than cherry-picked results.
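The protocol parameters can be captured in a small config object, which also makes the derived quantities explicit: 24 fps for 4 seconds gives 96 frames, and 500 prompts with three seeds each gives 1,500 generations. The `EvalProtocol` class, its field names and the `seed_spread` helper are hypothetical; the values are the ones listed above. The spread uses the sample standard deviation from Python’s `statistics` module, which is an assumption, since the estimator behind the 1.2-point figure isn’t stated.

```python
import statistics
from dataclasses import dataclass, field


@dataclass(frozen=True)
class EvalProtocol:
    # Values from the testing protocol above; names are hypothetical.
    prompt_split: dict = field(default_factory=lambda: {"simple": 150, "compositional": 200, "complex": 150})
    gen_resolution: tuple = (768, 512)
    metric_resolution: tuple = (1280, 768)
    fps: int = 24
    duration_s: int = 4
    sampling_steps: int = 50
    cfg_scale: float = 7.5
    seeds_per_prompt: int = 3

    @property
    def num_prompts(self) -> int:
        return sum(self.prompt_split.values())

    @property
    def num_frames(self) -> int:
        return self.fps * self.duration_s

    @property
    def total_generations(self) -> int:
        return self.num_prompts * self.seeds_per_prompt


def seed_spread(scores_per_seed):
    """Sample standard deviation of one prompt's scores across seeds."""
    return statistics.stdev(scores_per_seed)


protocol = EvalProtocol()
print(protocol.num_prompts, protocol.num_frames, protocol.total_generations)  # 500 96 1500
```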

Human Preference Studies

Beyond automated metrics, HappyHorse was evaluated in side-by-side human preference studies on platforms such as LMSYS Arena. Across more than 12,000 comparison pairs, the model achieved:

- 67.3% win rate against Gen-3 Alpha

- 71.2% win rate against Kling 1.5

- 58.9% win rate against Sora (limited comparison set)

Evaluators rated on four criteria: Realism (30% weight), Motion Quality (30%), Prompt Adherence (25%), and Creativity (15%). HappyHorse demonstrated particular strength in the Motion Quality category, where it achieved a 74% preference rate.
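The four-criterion weighting is a weighted average of per-criterion preference rates. The sketch below applies the stated weights (30/30/25/15) and computes a simple win rate from pairwise outcomes; the function names are hypothetical, and counting a tie as half a win is an assumption, since the tie policy isn’t described.

```python
# Weights as stated for the human preference study.
WEIGHTS = {"realism": 0.30, "motion_quality": 0.30, "prompt_adherence": 0.25, "creativity": 0.15}
assert abs(sum(WEIGHTS.values()) - 1.0) < 1e-9  # 30 + 30 + 25 + 15 = 100%


def weighted_preference(rates):
    """Combine per-criterion preference rates (0-1) using the study's weights."""
    return sum(WEIGHTS[name] * rates[name] for name in WEIGHTS)


def win_rate(outcomes):
    """Fraction of pairwise comparisons won; a 'tie' counts as half a win (assumption)."""
    points = {"win": 1.0, "tie": 0.5, "loss": 0.0}
    return sum(points[o] for o in outcomes) / len(outcomes)
```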

Specialized Benchmark Performance

UCF-101 Action Recognition Transfer:

When generated videos are processed through action recognition classifiers, HappyHorse outputs achieve 89.7% classification accuracy, approaching the 94.3% measured on real videos. This indicates that the generated motions carry authentic kinematic signatures rather than superficial movement artifacts.

FVD (Fréchet Video Distance):

Against the WebVid validation set, HappyHorse achieves an FVD of 127.3, compared to 156.8 (Gen-3) and 142.1 (Kling 1.5). Lower scores indicate better distribution alignment between generated and real video manifolds.
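FVD is the Fréchet distance between Gaussians fitted to video features (typically from a pretrained I3D network): d² = ||μ_r − μ_g||² + Tr(Σ_r + Σ_g − 2(Σ_r Σ_g)^½). The NumPy-only sketch below computes that distance from precomputed feature arrays; feature extraction is not included, and the function names are illustrative. It uses the identity Tr((Σ_r Σ_g)^½) = Tr((Σ_r^½ Σ_g Σ_r^½)^½) so that only symmetric eigendecompositions are needed.

```python
import numpy as np


def _sqrtm_psd(m):
    """Matrix square root of a symmetric positive semi-definite matrix."""
    vals, vecs = np.linalg.eigh(m)
    return (vecs * np.sqrt(np.clip(vals, 0.0, None))) @ vecs.T


def frechet_distance(feats_a, feats_b):
    """Fréchet distance between Gaussians fitted to two (N, D) feature sets."""
    mu_a, mu_b = feats_a.mean(axis=0), feats_b.mean(axis=0)
    cov_a = np.cov(feats_a, rowvar=False)
    cov_b = np.cov(feats_b, rowvar=False)
    sqrt_a = _sqrtm_psd(cov_a)
    middle = sqrt_a @ cov_b @ sqrt_a  # symmetric PSD, same trace-sqrt as cov_a @ cov_b
    tr_covmean = np.sqrt(np.clip(np.linalg.eigvalsh(middle), 0.0, None)).sum()
    return float(np.sum((mu_a - mu_b) ** 2) + np.trace(cov_a) + np.trace(cov_b) - 2.0 * tr_covmean)
```

Identical feature sets give a distance near zero, and shifting every feature by a constant vector c adds exactly ||c||², which is why lower FVD means closer distributions.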

Temporal Coherence Stress Tests:

In extended-duration generation (8-second sequences), HappyHorse’s temporal consistency score falls from 91.7 to 87.2, a drop of only 4.5 points (about 5%), compared with the 15-20% drops typical of competing models. This suggests robust temporal attention mechanisms that scale beyond the sequence lengths seen in training.

Practical Implications: What These Rankings Mean for Production Workflows

Reduced Iteration Cycles

The 93.2 Subject Consistency score translates directly to fewer generation attempts needed to achieve desired results. In production contexts, this means:

- Character consistency: Generating multiple shots of the same character without drift

- Brand asset reliability: Maintaining product appearance across generated content

- Storyboard adherence: Achieving scene compositions matching pre-visualization

Early adopters report 40-60% reduction in prompt engineering time compared to previous-generation models, as HappyHorse more reliably interprets natural language descriptions without requiring adversarial prompt construction or negative prompt scaffolding.

Motion Direction and Control

The superior Motion Smoothness scores indicate practical advantages for:

Camera movement simulation: HappyHorse reliably interprets camera motion descriptors (“slow dolly in,” “crane up,” “orbital rotation”) with 84% directional accuracy, compared with an industry average of 61%.

Action choreography: Complex multi-step actions (“character waves, then turns and walks away”) maintain logical temporal sequencing in 78% of generations versus 52% for comparable models.

Physics plausibility: Object interactions show appropriate causality: thrown objects follow ballistic trajectories, liquid pours conserve volume, and fabric drapes in response to the underlying form.

Integration with Existing Pipelines

HappyHorse’s architectural decisions enable specific workflow advantages:

Latent space interpolation: The consistent 8-channel latent representation allows for meaningful interpolation between concepts. ComfyUI implementations demonstrate smooth transitions when blending latent codes from different prompts, a technique that produces artifacts in models with less stable latent geometry.
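The article doesn’t say which interpolation these ComfyUI workflows use; spherical linear interpolation (slerp) is a common choice for blending latent codes, especially noise latents, because high-dimensional Gaussian vectors concentrate near a sphere and a straight-line blend passes through lower-norm points the model rarely sees. A minimal NumPy version follows; it is a generic technique, not a HappyHorse API, and the 8-channel latent shape in the example is hypothetical.

```python
import numpy as np


def slerp(a, b, t, eps=1e-7):
    """Spherical interpolation between latents a and b (matching shapes), t in [0, 1]."""
    a_flat, b_flat = a.ravel(), b.ravel()
    cos_omega = np.dot(a_flat, b_flat) / (np.linalg.norm(a_flat) * np.linalg.norm(b_flat))
    omega = np.arccos(np.clip(cos_omega, -1.0, 1.0))
    if omega < eps:  # nearly parallel: fall back to linear interpolation
        return (1.0 - t) * a + t * b
    so = np.sin(omega)
    return (np.sin((1.0 - t) * omega) / so) * a + (np.sin(t * omega) / so) * b


# Hypothetical 8-channel latent laid out as (channels, frames, height, width).
rng = np.random.default_rng(0)
z_a = rng.standard_normal((8, 4, 16, 24))
z_b = rng.standard_normal((8, 4, 16, 24))
frames = [slerp(z_a, z_b, t) for t in np.linspace(0.0, 1.0, 5)]
```

The midpoint keeps roughly the same norm as the endpoints, whereas the plain average of two near-orthogonal latents shrinks to about 71% of it.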

ControlNet compatibility: The U-Net backbone maintains compatibility with ControlNet conditioning, enabling depth-map guidance, edge-guided generation, and pose-controlled character animation. Early ControlNet adaptations for HappyHorse show 91% conditioning adherence versus 76% for hastily adapted architectures.
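ControlNet’s central trick is that the conditioning branch joins the frozen backbone through zero-initialized convolutions, so at the start of training the combined network reproduces the base model exactly and the control signal is phased in as those weights are learned. The toy below shows that property with a 1×1 convolution written as a channel-mixing matrix; `control_branch` and all shapes are hypothetical stand-ins, not HappyHorse’s or ControlNet’s actual modules.

```python
import numpy as np


def conv1x1(x, weight, bias):
    """1x1 convolution over a (C_in, H, W) map: mixes channels at each pixel."""
    return np.einsum("oc,chw->ohw", weight, x) + bias[:, None, None]


def control_branch(features, control_map):
    """Hypothetical trainable copy: here it simply mixes features with the control map."""
    return np.tanh(features + control_map)


def controlled_block(backbone_features, control_map, zero_w, zero_b):
    """Backbone output plus the control branch, injected through a zero-initialized 1x1 conv."""
    residual = conv1x1(control_branch(backbone_features, control_map), zero_w, zero_b)
    return backbone_features + residual


channels, h, w = 4, 8, 8
rng = np.random.default_rng(0)
feats = rng.standard_normal((channels, h, w))
depth = rng.standard_normal((1, h, w))  # e.g. a depth map, broadcast across channels
zero_w, zero_b = np.zeros((channels, channels)), np.zeros(channels)

out_init = controlled_block(feats, depth, zero_w, zero_b)  # identical to the backbone output
out_trained = controlled_block(feats, depth, 0.1 * np.eye(channels), zero_b)  # control now contributes
```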

Temporal LoRA training: The model’s attention architecture supports efficient fine-tuning through temporal Low-Rank Adaptation. Custom motion styles can be learned from 50-100 example clips with minimal degradation of base model capabilities, a 5x improvement in sample efficiency over full fine-tuning approaches.
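LoRA freezes a pretrained weight W and learns a low-rank update, so the adapted layer computes x·W + (α/r)·(x·A)·B with only A and B trained and B initialized to zero; applying this to the temporal attention projections is the “temporal LoRA” setup described above. The NumPy sketch uses a hypothetical 1024-wide projection and rank 16, which are illustrative sizes rather than HappyHorse’s.

```python
import numpy as np


class LoRALinear:
    """Frozen weight W plus a trainable low-rank update scaled by alpha / rank."""

    def __init__(self, weight, rank=16, alpha=16.0, seed=0):
        rng = np.random.default_rng(seed)
        d_in, d_out = weight.shape
        self.weight = weight                                           # frozen
        self.down = rng.standard_normal((d_in, rank)) / np.sqrt(d_in)  # trainable (A)
        self.up = np.zeros((rank, d_out))                              # trainable (B), zero-init
        self.scale = alpha / rank

    def __call__(self, x):
        return x @ self.weight + self.scale * (x @ self.down) @ self.up

    def trainable_params(self):
        return self.down.size + self.up.size


d = 1024  # hypothetical width of a temporal attention projection
rng = np.random.default_rng(1)
layer = LoRALinear(rng.standard_normal((d, d)) / np.sqrt(d), rank=16)
x = rng.standard_normal((96, d))  # e.g. one token per frame along the time axis
print(layer.trainable_params(), d * d)  # 32768 1048576 (32x fewer trainable values)
```

Because `up` starts at zero, the adapted layer initially matches the frozen one exactly, and training only moves it as far as the small clip set requires.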

Cost-Performance Optimization

While these benchmarks don’t measure absolute inference speed, the model’s quality-per-step efficiency offers practical advantages:

- Acceptable results at 30 sampling steps (versus 50+ for competitors)

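- Lower CFG scales suffice (6.5-7.5 versus 8-10), limiting the over-saturation that strong guidance tends to cause (the per-step cost of classifier-free guidance itself does not depend on the scale)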

- Higher batch efficiency due to consistent memory utilization across prompt complexities

These factors combine to deliver approximately 35% better quality-per-compute-dollar in cloud deployment scenarios.
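Step counts translate into compute through the number of network evaluations. With classifier-free guidance, each step typically runs the model twice (conditional and unconditional) and combines the outputs as ε = ε_u + w(ε_c − ε_u); the guidance scale w changes that combination but not the number of passes. The sketch counts evaluations for the step counts listed above, assuming (an assumption, not a stated fact) that both configurations use standard two-pass CFG; the function names are illustrative.

```python
import numpy as np


def cfg_combine(eps_uncond, eps_cond, scale):
    """Classifier-free guidance: move the prediction away from the unconditional one."""
    return eps_uncond + scale * (eps_cond - eps_uncond)


def network_evals(steps, uses_cfg=True):
    """Forward passes per sample; standard CFG needs two per step at any scale."""
    return steps * (2 if uses_cfg else 1)


happyhorse = network_evals(30)  # 60 passes
competitor = network_evals(50)  # 100 passes
print(f"{1 - happyhorse / competitor:.0%} fewer forward passes")  # 40% fewer forward passes
```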

Failure Mode Characteristics

Understanding where HappyHorse remains challenged is equally valuable:

Text rendering: Readable text generation scores 42/100, an improvement over previous models but still unreliable for commercial text-heavy applications.

Fine-grained hand articulation: Hand poses achieve only 68% anatomical correctness in close-up scenarios, though full-body shots perform significantly better (87%).

Extreme aspect ratios: Performance degrades 12% when generating ultra-widescreen (21:9) or vertical (9:16) content, suggesting training data bias toward 16:9 formats.

Rapid action sequences: Motions exceeding ~4 meters/second exhibit increased motion blur and occasional temporal artifacts, indicating temporal attention window limitations.

Technical Architecture Insights

While complete architectural details remain proprietary, benchmark performance patterns reveal probable design elements:

Hybrid Attention Mechanism

The superior multi-object tracking suggests a combination of three attention types:

- Spatial self-attention: Standard within-frame attention at 8×8 patch resolution

- Temporal self-attention: Cross-frame attention with learned positional encodings

- Spatio-temporal cross-attention: Text-to-video attention operating across both dimensions simultaneously

This three-pronged attention strategy allows independent optimization of spatial coherence, temporal consistency, and semantic alignment.
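The three attention types can be sketched on a (frames, tokens, channels) latent: spatial attention mixes tokens within each frame, temporal attention mixes the same spatial position across frames, and cross-attention pulls in text tokens. This is a generic factorized-attention illustration (single head, no learned projections, a residual connection around each stage, random positional encodings), not HappyHorse’s unpublished architecture.

```python
import numpy as np


def attend(q, k, v):
    """Scaled dot-product attention over the second-to-last axis, batched over leading axes."""
    scores = q @ np.swapaxes(k, -1, -2) / np.sqrt(q.shape[-1])
    scores -= scores.max(axis=-1, keepdims=True)
    w = np.exp(scores)
    w /= w.sum(axis=-1, keepdims=True)
    return w @ v


def spatial_self_attention(x):
    # x: (T, N, D); each frame's N tokens attend to one another.
    return x + attend(x, x, x)


def temporal_self_attention(x, pos):
    # Move to (N, T, D) so each spatial position attends across frames;
    # `pos` (T, D) is a positional encoding (learned in a real model, random here).
    xt = np.swapaxes(x, 0, 1) + pos
    return x + np.swapaxes(attend(xt, xt, xt), 0, 1)


def text_cross_attention(x, text):
    # Every video token, in every frame, attends to the (L, D) text tokens.
    return x + attend(x, text, text)


T, N, D, L = 8, 16, 32, 6
rng = np.random.default_rng(0)
x = rng.standard_normal((T, N, D))
pos = 0.1 * rng.standard_normal((T, D))
text = rng.standard_normal((L, D))
out = text_cross_attention(temporal_self_attention(spatial_self_attention(x), pos), text)
```

Editing frame 1 leaves frame 0’s spatial-attention output unchanged but alters its temporal-attention output, which is what lets each stage be tuned for its own job.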

Progressive Refinement Architecture

The model likely employs cascaded generation:

1. Base generation: 192×128 at 8fps (structural coherence establishment)

2. Temporal upsampling: Interpolation to 24fps with motion refinement

3. Spatial super-resolution: Upsampling to 768×512 with detail injection

4. Optional enhancement: Further upsampling to 1280×768 via separate SR model

This staged approach would explain the model’s ability to maintain consistency across resolution scales: each stage operates within its optimal capacity range rather than forcing a single architecture to span the entire quality spectrum.
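The staged pipeline is easiest to check by tracking tensor shapes. The sketch propagates (frames, height, width) through the four stages listed above for a 4-second clip; the resolutions and frame rates come from that list, while the `Stage` type and `shapes` helper are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Stage:
    name: str
    fps: int
    height: int
    width: int


DURATION_S = 4
STAGES = [
    Stage("base generation", 8, 128, 192),
    Stage("temporal upsampling", 24, 128, 192),
    Stage("spatial super-resolution", 24, 512, 768),
    Stage("optional enhancement", 24, 768, 1280),
]


def shapes(stages, duration_s=DURATION_S):
    """(frames, height, width) produced by each stage."""
    return [(s.fps * duration_s, s.height, s.width) for s in stages]


prev = None
for stage, shape in zip(STAGES, shapes(STAGES)):
    if prev is None:
        print(f"{stage.name}: {shape}")
    else:
        factors = tuple(round(new / old, 3) for new, old in zip(shape, prev))
        print(f"{stage.name}: {shape}, scale (T, H, W) = {factors}")
    prev = shape
```

The first three stages scale cleanly (3x in time, then 4x per spatial axis). As listed, the optional last stage scales height by 1.5 and width by about 1.67, so it changes the aspect ratio from 3:2 to 5:3 rather than upsampling uniformly.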

Training Data Curation

The Color Consistency and Imaging Quality scores suggest aggressive training data filtering:

- Minimum resolution thresholds (likely 720p+)

- Colorimetric diversity balancing

- Motion quality pre-filtering (removing low-frame-rate or compression-damaged sources)

- Aesthetic scoring with quality thresholds

The exceptional performance on aesthetic metrics while maintaining semantic adherence indicates a carefully balanced dataset that avoids the common trade-off between artistic quality and controllability.
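The inferred curation steps map naturally onto a per-clip metadata filter. Every threshold except the 720p floor is a hypothetical placeholder (the analysis above only guesses at the real criteria), and the `Clip` fields assume that resolution, frame rate, a compression-artifact score and an aesthetic score were precomputed for each clip. Colorimetric balancing acts on the dataset as a whole rather than on single clips, so it isn’t shown.

```python
from dataclasses import dataclass


@dataclass
class Clip:
    path: str
    height: int
    fps: float
    artifact_score: float   # 0 = clean, 1 = heavily compressed (hypothetical scale)
    aesthetic_score: float  # output of an aesthetic predictor (hypothetical 0-10 scale)


MIN_HEIGHT = 720       # "likely 720p+" per the analysis above
MIN_FPS = 24.0         # hypothetical low-frame-rate cutoff
MAX_ARTIFACT = 0.3     # hypothetical compression-damage cutoff
MIN_AESTHETIC = 5.0    # hypothetical aesthetic threshold


def keep(clip: Clip) -> bool:
    """True if the clip passes every quality filter."""
    return (clip.height >= MIN_HEIGHT
            and clip.fps >= MIN_FPS
            and clip.artifact_score <= MAX_ARTIFACT
            and clip.aesthetic_score >= MIN_AESTHETIC)


def curate(clips):
    return [c for c in clips if keep(c)]
```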

Comparative Analysis Against Competing Models

HappyHorse 1.0 vs. Gen-3 Alpha

Advantages:

- +4.4 points on Temporal Consistency

- +5.9 points on Multi-Object Tracking

- +3.2 points on Motion Smoothness

Trade-offs:

- -2.1 points on Aesthetic Score (Gen-3 produces a more “cinematic” default look)

- Slightly lower dynamic range in high-contrast scenarios

Use case recommendation: Choose HappyHorse for technically demanding scenarios requiring precise control; choose Gen-3 when artistic interpretation is acceptable.

HappyHorse 1.0 vs. Kling 1.5

Advantages:

- +6.1 points on Subject Consistency

- +4.7 points on Prompt Fidelity

- Superior handling of camera motion descriptors

Trade-offs:

- Kling maintains a slight edge in long-duration generation (8+ seconds)

- Kling’s default aesthetic skews toward realism; HappyHorse’s is more neutral

Use case recommendation: HappyHorse is the stronger choice for commercial applications requiring brand consistency; Kling remains competitive for long-form narrative content.

HappyHorse 1.0 vs. Sora (Limited Data)

Advantages:

- +8.3 points on Multi-Object Tracking

- More reliable motion physics

- Better color consistency

Trade-offs:

- Sora demonstrates superior long-duration coherence (20+ seconds)

- Sora handles novel viewpoint generation more robustly

- Sora’s world modeling shows better object permanence

Use case recommendation: Direct comparison is limited by Sora’s restricted availability, but HappyHorse appears optimized for shorter, controlled content, while Sora targets extended world simulation.

Conclusion: Benchmark Leadership and Practical Reality

HappyHorse 1.0’s leaderboard dominance represents genuine technical advancement rather than benchmark optimization. The consistency of its improvements across diverse evaluation dimensions (temporal quality, semantic adherence, aesthetic scoring, and technical metrics) indicates fundamental architectural strengths rather than narrow specialization.

For AI developers and researchers, these benchmarks provide actionable guidance:

1. Temporal attention mechanisms remain the primary differentiator in video generation quality

2. Multi-scale text conditioning enables better prompt adherence without sacrificing creative interpretation

3. Expanded latent dimensionality (8-channel vs. 4-channel) delivers measurable improvements in color and detail preservation

4. Hybrid sampling strategies outperform single-scheduler approaches across consistency and quality metrics

As video generation models mature, benchmark performance increasingly correlates with production viability. HappyHorse 1.0’s achievement of top rankings across technical, semantic, and aesthetic dimensions signals a model ready for serious creative applications, not merely a research demonstration.

The question for practitioners becomes less “can this model generate impressive videos?” and more “which specific capabilities align with my production requirements?” HappyHorse’s benchmark profile suggests particular strength in scenarios demanding reliability, controllability, and multi-generation consistency. These are precisely the characteristics that separate experimental tools from production-ready platforms.

Frequently Asked Questions

Q: What is VBench and why is it the standard for video AI evaluation?

A: VBench is a comprehensive evaluation suite comprising 16 distinct dimensions organized into quality, semantic, and technical categories. It has become the industry standard because it provides systematic, reproducible measurements across diverse capabilities rather than relying on cherry-picked examples or subjective assessments. VBench uses specialized evaluation models, often fine-tuned vision transformers, trained on human-annotated datasets to assess everything from temporal flickering to subject consistency. The 500-prompt test set is carefully designed to probe specific model capabilities across simple, compositional, and complex generation scenarios.

Q: How does HappyHorse 1.0 achieve superior temporal consistency compared to other models?

A: HappyHorse implements a novel Temporal Attention Residual architecture that maintains latent consistency across the denoising trajectory. It uses a hybrid scheduler combining DDIM inversion with Euler ancestral sampling at strategic inflection points, enabling both deterministic control and stochastic variety. Benchmark patterns also suggest a three-pronged attention design: spatial self-attention within frames, temporal self-attention across frames, and spatio-temporal cross-attention for text conditioning. This multi-layered approach allows independent optimization of spatial coherence, temporal consistency, and semantic alignment, resulting in its exceptional 91.7 Temporal Flickering score.

Q: What does the 93.2 Subject Consistency score mean for practical video production workflows?

A: The 93.2 Subject Consistency score translates directly to fewer generation attempts needed to achieve desired results. In practice, this means reliable character consistency across multiple shots, dependable brand asset rendering, and better storyboard adherence. Early adopters report a 40-60% reduction in prompt engineering time compared to previous models. For commercial applications that need the same subject to appear consistently across different scenes, such as product videos, character animation, or branded content, this high consistency score dramatically reduces iteration cycles and improves production efficiency.

Q: Can HappyHorse 1.0 be integrated with existing tools like ComfyUI and ControlNet?

A: Yes, HappyHorse’s architectural design enables strong compatibility with existing workflows. The consistent 8-channel latent representation allows for meaningful latent space interpolation in ComfyUI implementations, producing smooth transitions between concepts. The U-Net backbone maintains compatibility with ControlNet conditioning, enabling depth-map guidance, edge-guided generation, and pose-controlled character animation with 91% conditioning adherence. The model also supports efficient temporal LoRA fine-tuning, allowing custom motion styles to be learned from 50-100 example clips with minimal degradation of base capabilities, a 5x improvement in sample efficiency over full fine-tuning approaches.

Q: Where does HappyHorse 1.0 still struggle, and what are its known limitations?

A: Despite its benchmark leadership, HappyHorse has specific limitations: text rendering scores only 42/100, making it unreliable for text-heavy applications; fine-grained hand articulation achieves just 68% anatomical correctness in close-ups (though 87% in full-body shots); performance degrades 12% for extreme aspect ratios like 21:9 or 9:16; and rapid actions exceeding ~4 meters/second exhibit increased motion blur. The model also shows training data bias toward 16:9 formats. Understanding these failure modes helps developers choose appropriate applications and set realistic expectations for different use cases.

Q: How does HappyHorse 1.0 compare to Sora in benchmark performance?

A: Based on limited available comparisons, HappyHorse demonstrates advantages in multi-object tracking (+8.3 points), motion physics reliability, and color consistency. However, Sora shows superior long-duration coherence (20+ seconds), better novel viewpoint generation, and stronger object permanence through more advanced world modeling. The comparison is limited by Sora’s restricted availability, but the performance profiles suggest different optimization targets: HappyHorse appears optimized for shorter, controlled content with precise semantic adherence, while Sora targets extended world simulation and long-form narrative generation. The choice depends on whether your application prioritizes controllability and consistency (HappyHorse) or extended temporal coherence and world modeling (Sora).