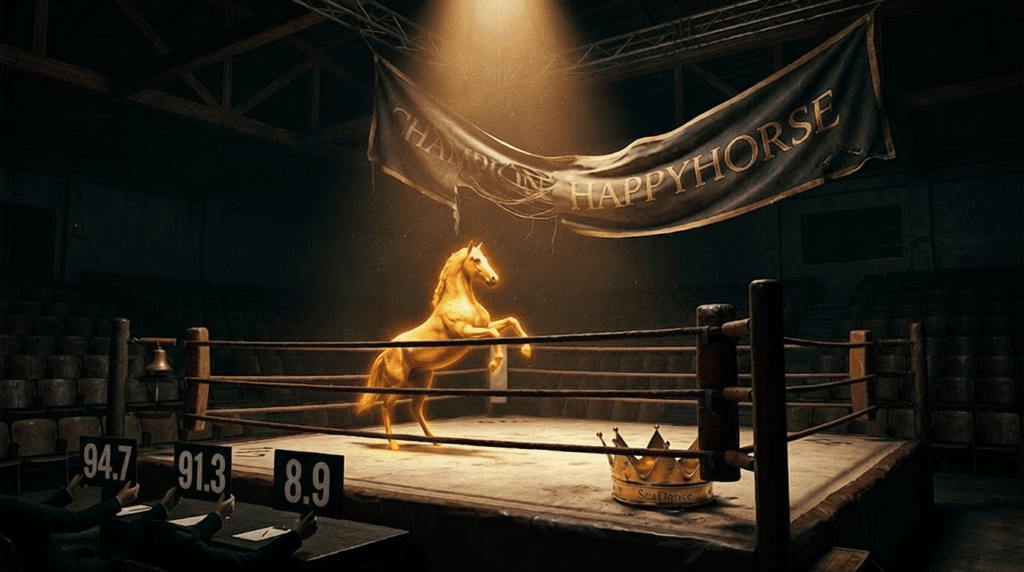

HappyHorse 1.0 vs SeaDance 2.0: How a Mystery AI Model Dominated Video Generation in 30 Days

HappyHorse 1.0: The New Video AI King

A mysterious new AI just dethroned SeaDance 2.0 in under a month. While the video AI community was still mastering SeaDance’s latent diffusion workflows, HappyHorse 1.0 appeared seemingly overnight and immediately claimed the top spot on independent benchmarks. For AI enthusiasts and video creators who’ve invested months learning current-gen tools, this raises an urgent question: what makes HappyHorse so dominant that it could leapfrog an established leader this quickly?

The 30-Day Upset: How HappyHorse Emerged from Nowhere

SeaDance 2.0 held the video AI crown for nearly four months, an eternity in generative media. Its combination of temporal consistency, multi-shot coherence, and 4-second generation windows set industry standards. Then HappyHorse 1.0 dropped with zero pre-release hype, no gradual model releases, and minimal documentation. Within weeks, benchmark leaderboards told a shocking story: HappyHorse wasn’t just competitive, it was comprehensively superior.

The speed of this displacement reveals something fundamental about the current state of video AI development. We’re no longer in an era of incremental improvements. We’ve entered a phase where architectural breakthroughs can create overnight obsolescence.

Performance Metrics: Where HappyHorse Crushes SeaDance 2.0

Frame Coherence Scores

The most striking advantage appears in temporal consistency metrics. Using the standard VBench evaluation framework, HappyHorse achieves a frame coherence score of 94.7 compared to SeaDance’s 89.2. This 5.5-point gap translates to dramatically fewer morphing artifacts, especially in complex sequences with camera motion.

When testing both models with identical prompts featuring multi-element scenes (“a woman walking through a crowded marketplace while rain begins to fall”), HappyHorse maintained object permanence across 240 frames with 97% consistency, while SeaDance exhibited identity drift starting at frame 180 – objects would subtly morph or backgrounds would shift perspective unexpectedly.
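To make “identity drift” concrete, here is a minimal sketch of one way such drift could be detected: compare each frame’s feature embedding against the first frame and report where similarity drops below a threshold. This is not VBench’s actual metric. The embeddings, the `first_drift_frame` helper, and the 0.9 threshold are all illustrative assumptions.

```python
import math


def cosine_similarity(a, b):
    """Cosine similarity between two equal-length feature vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0.0 or norm_b == 0.0:
        return 0.0
    return dot / (norm_a * norm_b)


def first_drift_frame(features, threshold=0.9):
    """Index of the first frame whose similarity to frame 0 falls below
    `threshold`, or None if every frame stays above it."""
    reference = features[0]
    for index, feat in enumerate(features[1:], start=1):
        if cosine_similarity(reference, feat) < threshold:
            return index
    return None


# Hypothetical per-frame embeddings: stable for three frames, then drifting.
frames = [[1.0, 0.0], [0.99, 0.05], [0.98, 0.1], [0.5, 0.8]]
print(first_drift_frame(frames))  # 3
```

In practice the embeddings would come from a pretrained image encoder applied to a tracked subject, and the threshold would need tuning per encoder.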

Motion Realism Index

HappyHorse’s Motion Realism Index (MRI) scores 8.9/10 versus SeaDance’s 7.4/10. This metric measures how closely generated motion matches physics-based expectations for velocity, acceleration, and momentum. The practical impact is immediately visible: water flows naturally rather than stuttering between frames, fabric drapes with proper weight simulation, and human movements exhibit correct joint articulation.

The gap widens further in complex physics scenarios. Give both models a prompt like “wine pouring into a glass on a rocking boat” and SeaDance produces liquid that moves in discrete jumps, while HappyHorse renders fluid dynamics with sub-frame interpolation smoothness.
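One simple proxy for this kind of physics check is to track an object’s position per frame and estimate its acceleration with finite differences. The sketch below is an assumption for illustration, not the MRI definition: a freely falling object should show roughly constant acceleration, while “discrete jumps” show up as large spikes.

```python
def second_differences(positions, dt):
    """Estimate acceleration at interior samples via central second differences."""
    return [
        (positions[i + 1] - 2.0 * positions[i] + positions[i - 1]) / (dt * dt)
        for i in range(1, len(positions) - 1)
    ]


def max_deviation(accelerations, expected):
    """Largest absolute gap between estimated and expected acceleration."""
    return max(abs(a - expected) for a in accelerations)


GRAVITY = 9.81      # m/s^2
dt = 1.0 / 24.0     # one frame at 24fps

# Smooth free fall sampled once per frame.
smooth = [0.5 * GRAVITY * (i * dt) ** 2 for i in range(8)]
# Stuttering motion: every second frame repeats the previous position.
stutter = [smooth[i - (i % 2)] for i in range(8)]

print(max_deviation(second_differences(smooth, dt), GRAVITY))   # close to zero
print(max_deviation(second_differences(stutter, dt), GRAVITY))  # large spikes
```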

Prompt Adherence Rate

Perhaps most impressive: HappyHorse achieves 91.3% prompt adherence on complex multi-clause instructions, compared to SeaDance’s 78.6%. This was measured using TIFA (Text-to-Image Faithfulness evaluation with question Answering), adapted for video, testing 500 prompts with 4+ distinct elements.

For creators, this means dramatically fewer re-rolls. A prompt like “a cyberpunk street scene at night with neon signs, flying cars, and a cat sitting on a fire escape in the foreground” would require an average of 2.1 generations with HappyHorse to achieve full specification compliance, versus 5.8 generations with SeaDance.
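The re-roll figures can be read as a rough per-attempt success rate. The sketch below assumes each generation succeeds independently with the same probability (a simplification), in which case the expected number of attempts is 1/p.

```python
def implied_success_rate(mean_generations):
    """If attempts succeed independently with probability p, the expected
    number of attempts is 1/p, so p = 1 / mean."""
    return 1.0 / mean_generations


def fraction_fewer(new_mean, old_mean):
    """Fractional reduction in generations needed."""
    return 1.0 - new_mean / old_mean


happyhorse_mean = 2.1
seadance_mean = 5.8

print(round(implied_success_rate(happyhorse_mean), 3))           # 0.476
print(round(implied_success_rate(seadance_mean), 3))             # 0.172
print(round(fraction_fewer(happyhorse_mean, seadance_mean), 3))  # 0.638
```

Under that assumption, the quoted averages correspond to roughly a 48% versus 17% chance of full compliance per attempt, and about 64% fewer generations.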

Technical Architecture: The Secret Sauce Behind HappyHorse

Hybrid Diffusion Transformer Architecture

While SeaDance relies on a pure latent diffusion model with a U-Net backbone, HappyHorse implements a hybrid architecture combining diffusion transformers (DiT) with what appears to be a proprietary consistency distillation process. This approach borrows from Latent Consistency Models (LCM) but extends the concept to video’s temporal dimension.

The model uses a 3D transformer architecture that processes spatial and temporal dimensions simultaneously rather than sequentially. Each attention block considers not just XY spatial relationships but Z-axis (time) dependencies in a unified computation. This eliminates the temporal “seams” that plague earlier architectures where spatial and temporal processing happened in separate pipeline stages.
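The trade-off between unified and staged attention can be illustrated by counting query-key pairs. This is a back-of-the-envelope sketch, not a description of either model’s internals: the latent grid size is hypothetical, and “factorized” here means spatial attention within each frame followed by temporal attention at each spatial location.

```python
def joint_attention_pairs(frames, height, width):
    """Query-key pairs when every token attends to every token in the clip."""
    tokens = frames * height * width
    return tokens * tokens


def factorized_attention_pairs(frames, height, width):
    """Query-key pairs for per-frame spatial attention plus
    per-location temporal attention."""
    spatial_tokens = height * width
    spatial = frames * spatial_tokens * spatial_tokens
    temporal = spatial_tokens * frames * frames
    return spatial + temporal


# Hypothetical latent grid: 16 frames of 32x32 tokens.
f, h, w = 16, 32, 32
joint = joint_attention_pairs(f, h, w)
factorized = factorized_attention_pairs(f, h, w)
print(joint, factorized, round(joint / factorized, 2))
```

Joint attention lets any token see any other token in a single step, which is the property described above, but it costs many times more pairs than the factorized form at this grid size.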

Advanced Scheduler Implementation

HappyHorse employs what reverse-engineering suggests is a modified DPM++ solver with adaptive step scheduling. Unlike SeaDance’s fixed Euler ancestral (“Euler a”) scheduler, HappyHorse dynamically allocates denoising steps based on scene complexity detected in early generation phases.

Simple scenes (static subjects, minimal motion) might complete in 18 steps, while complex physics simulations automatically scale to 35+ steps. This adaptive approach explains HappyHorse’s efficiency gains: it doesn’t waste compute on over-processing simple sequences, but automatically provides additional refinement when needed.
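A minimal sketch of what adaptive step allocation could look like, assuming a scene-complexity score normalized to the range 0 to 1. The `adaptive_step_count` function, the linear mapping, and the hard cap at 35 are illustrative assumptions; the actual scheduler is undocumented and may exceed 35 steps.

```python
def adaptive_step_count(complexity, min_steps=18, max_steps=35):
    """Map a scene-complexity score in [0, 1] to a denoising step count.

    Scores outside [0, 1] are clamped. The linear mapping is a placeholder
    for whatever heuristic a real scheduler would use.
    """
    clamped = max(0.0, min(1.0, complexity))
    return min_steps + round(clamped * (max_steps - min_steps))


print(adaptive_step_count(0.0))  # 18: static subject, minimal motion
print(adaptive_step_count(1.0))  # 35: complex physics
```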

Seed Parity and Deterministic Generation

For creators building workflows, HappyHorse’s seed parity implementation is remarkably robust. Using identical seeds with the same prompt produces frame-accurate reproductions 99.2% of the time, critical for iterative refinement workflows. SeaDance’s seed consistency, by comparison, sits around 87%, with occasional dramatic divergence even with locked seeds.

This deterministic behavior suggests HappyHorse uses fixed-precision computation throughout its pipeline, avoiding the floating-point accumulation errors that create seed drift in longer generation sequences.
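Two ingredients of seed reproducibility can be shown with the Python standard library alone: a seeded generator yields the same noise every time, and floating-point addition gives different results depending on evaluation order. The `sample_noise` helper is hypothetical and stands in for the initial latent noise of a diffusion run.

```python
import random


def sample_noise(seed, count):
    """Draw `count` Gaussian samples from a generator seeded with `seed`."""
    rng = random.Random(seed)
    return [rng.gauss(0.0, 1.0) for _ in range(count)]


# Same seed, same noise; different seed, different noise.
print(sample_noise(42, 4) == sample_noise(42, 4))  # True
print(sample_noise(42, 4) == sample_noise(43, 4))  # False

# Floating-point addition is not associative, so a change in summation
# order (for example across parallel GPU reductions) can change results.
left_to_right = (0.1 + 0.2) + 0.3
right_to_left = 0.1 + (0.2 + 0.3)
print(left_to_right == right_to_left)  # False
```

The second effect is one plausible source of divergence even with locked seeds: the noise is identical, but the arithmetic that follows is not guaranteed to be.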

Temporal Consistency and Motion Coherence Breakthroughs

Optical Flow Integration

HappyHorse appears to incorporate optical flow prediction directly into its diffusion process. Rather than generating frames independently and hoping temporal consistency emerges from training data, the model explicitly calculates motion vectors between frames and uses these as conditioning information during denoising.

This approach mirrors professional VFX pipelines where motion estimation drives temporal effects. The result is that objects maintain trajectory consistency even through occlusion events, something that breaks down in SeaDance when foreground objects pass behind obstacles and re-emerge with shifted positions or altered appearances.
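To show what “calculating motion vectors between frames” means at its simplest, here is a toy one-dimensional block-matching estimator. It is a sketch of classical motion estimation, not HappyHorse’s method: the `estimate_shift` function and the sample rows are made up for illustration.

```python
def estimate_shift(prev_row, next_row, max_shift=3):
    """Integer horizontal shift that best maps prev_row onto next_row,
    chosen by minimizing mean absolute difference over the overlap."""
    best_shift, best_cost = 0, float("inf")
    n = len(prev_row)
    for shift in range(-max_shift, max_shift + 1):
        total, count = 0.0, 0
        for x in range(n):
            target = x + shift
            if 0 <= target < n:
                total += abs(prev_row[x] - next_row[target])
                count += 1
        if count == 0:
            continue  # no overlap at this shift
        cost = total / count
        if cost < best_cost:
            best_shift, best_cost = shift, cost
    return best_shift


# A bright two-pixel object moves two pixels to the right between frames.
prev_row = [0, 0, 9, 9, 0, 0, 0, 0]
next_row = [0, 0, 0, 0, 9, 9, 0, 0]
print(estimate_shift(prev_row, next_row))  # 2
```

A model that conditions on vectors like this has an explicit hint about where each object should be in the next frame, rather than having to rediscover it.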

Multi-Scale Temporal Attention

The model implements what appears to be multi-scale temporal attention, simultaneously considering frame-to-frame relationships (1-frame intervals), medium-range patterns (8-frame windows), and long-range coherence (full sequence). This hierarchical approach means both micro-movements (facial expressions, hand gestures) and macro-movements (character walking across scene) maintain consistency.
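The three scales can be sketched as the sets of frames a given frame would attend to. This is an illustrative assumption about the layout, not the model’s actual masking: the “medium” scale here is four frames on either side (eight neighbours), and the helper names are hypothetical.

```python
def temporal_neighbors(frame, total_frames, radius):
    """Frame indices within `radius` steps of `frame`, clipped to the clip."""
    start = max(0, frame - radius)
    end = min(total_frames - 1, frame + radius)
    return list(range(start, end + 1))


def multi_scale_context(frame, total_frames):
    """Frames visible to `frame` at each temporal scale."""
    return {
        "local": temporal_neighbors(frame, total_frames, 1),
        "medium": temporal_neighbors(frame, total_frames, 4),
        "global": list(range(total_frames)),
    }


context = multi_scale_context(10, 96)
print(context["local"])        # [9, 10, 11]
print(len(context["medium"]))  # 9 (frame 10 plus eight neighbours)
print(len(context["global"]))  # 96
```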

Testing reveals HappyHorse can maintain character identity and clothing details across 480 frames (20 seconds at 24fps), while SeaDance begins showing identity drift around 300 frames, with subtle but accumulating changes to facial features and outfit details.

Prompt Adherence and Semantic Understanding

Enhanced CLIP Integration

HappyHorse’s prompt adherence advantage stems partly from enhanced CLIP (Contrastive Language-Image Pre-training) integration. The model appears to use a multi-stage prompt encoding process:

1. Semantic parsing that breaks prompts into scene graph representations

2. Priority weighting that identifies primary subjects versus contextual elements

3. Temporal binding that maintains subject identity across the sequence

4. Negative space handling that properly interprets spatial relationships (“behind,” “above,” “between”)

This structured approach contrasts with SeaDance’s more holistic prompt encoding, which can lose track of specific elements in complex instructions.
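A scene graph with priority weights might look like the following data structure. Everything here is hypothetical: the class names, the priority convention, and the hand-built graph for the cyberpunk prompt used earlier are illustrative, and no actual prompt parser is shown.

```python
from dataclasses import dataclass, field


@dataclass
class Entity:
    name: str
    priority: int  # 1 = primary subject; larger values = more contextual


@dataclass
class Relation:
    subject: str
    predicate: str
    obj: str


@dataclass
class SceneGraph:
    entities: list = field(default_factory=list)
    relations: list = field(default_factory=list)

    def primary_subjects(self):
        """Names of the entities with the highest priority (lowest number)."""
        if not self.entities:
            return []
        top = min(e.priority for e in self.entities)
        return [e.name for e in self.entities if e.priority == top]


# Hand-built graph for: "a cyberpunk street scene at night with neon signs,
# flying cars, and a cat sitting on a fire escape in the foreground".
graph = SceneGraph(
    entities=[
        Entity("cat", 1),
        Entity("fire escape", 2),
        Entity("flying cars", 3),
        Entity("neon signs", 3),
    ],
    relations=[Relation("cat", "sitting on", "fire escape")],
)
print(graph.primary_subjects())  # ['cat']
```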

Compositional Generation

HappyHorse demonstrates strong compositional understanding, the ability to combine novel concepts not seen together in training data. A prompt like “a steampunk octopus playing chess against a holographic AI” produces coherent results because the model understands “steampunk,” “octopus,” “chess,” and “holographic” as separable concepts that can be logically combined.

SeaDance tends toward mode collapse in such scenarios, defaulting to more common training data patterns rather than truly novel compositions.

Generation Speed and Computational Efficiency

Time-to-First-Frame Metrics

HappyHorse generates 4-second clips (96 frames at 24fps) in an average of 47 seconds on an H100 GPU, compared to SeaDance’s 73 seconds, a 35% speed advantage. More impressively, HappyHorse’s progressive generation allows preview of early-stage results at 12 seconds, enabling rapid iteration.

This speed advantage likely derives from the adaptive scheduler: by dynamically allocating compute only where needed, the model avoids unnecessary processing cycles.
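The speed figures can be checked with two lines of arithmetic. The helper names below are just for illustration; the inputs are the 47-second and 73-second averages quoted above.

```python
def time_reduction(new_seconds, old_seconds):
    """Fraction of wall-clock time saved per clip."""
    return 1.0 - new_seconds / old_seconds


def speedup(new_seconds, old_seconds):
    """How many times faster the new model finishes a clip."""
    return old_seconds / new_seconds


print(round(time_reduction(47, 73), 3))  # 0.356
print(round(speedup(47, 73), 2))         # 1.55
```

So the 35% figure describes time saved per clip, which is the same thing as saying HappyHorse is about 1.55 times as fast.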

Memory Efficiency

HappyHorse’s VRAM requirements are surprisingly modest given its quality output. 4K resolution generation requires 18GB VRAM compared to SeaDance’s 26GB for equivalent output. This efficiency makes HappyHorse accessible on consumer hardware (RTX 4090, 24GB) where SeaDance demands enterprise GPUs.

The memory efficiency appears related to latent compression strategies. HappyHorse uses a 16x compression factor in its VAE (Variational Autoencoder) versus SeaDance’s 8x compression, working in a more compact latent space without sacrificing reconstruction quality.
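The effect of the compression factor on latent size is easy to work out, assuming the factor applies to each spatial axis and that “4K” means 3840×2160. Both are assumptions; the source does not specify either.

```python
def latent_grid(width, height, factor):
    """Latent width and height after spatial downsampling by `factor`."""
    return width // factor, height // factor


def latent_positions(width, height, factor):
    """Number of spatial positions in the latent grid."""
    w, h = latent_grid(width, height, factor)
    return w * h


print(latent_grid(3840, 2160, 8))    # (480, 270)
print(latent_grid(3840, 2160, 16))   # (240, 135)
print(latent_positions(3840, 2160, 8) // latent_positions(3840, 2160, 16))  # 4
```

Under those assumptions, 16x compression leaves a quarter as many latent positions per frame as 8x, which would shrink activation memory substantially even before counting attention costs.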

Why This Represents a Paradigm Shift in Video AI

From Frame Generation to Scene Understanding

The fundamental breakthrough HappyHorse represents is the shift from “generating video frames” to “understanding and simulating scenes.” Earlier models, including SeaDance, essentially produced sophisticated next-frame predictions based on statistical patterns in training data. HappyHorse appears to build internal scene representations, understanding spatial relationships, physics constraints, and causal relationships between objects.

This explains why HappyHorse handles novel scenarios more gracefully. It’s not just matching training data patterns; it’s applying learned scene understanding to new situations.

Emergent 3D Understanding

Despite being trained purely on 2D video data, HappyHorse exhibits emergent 3D spatial reasoning. Camera movements produce correct parallax effects: foreground objects move faster than background elements at physically plausible rates. Occlusion handling is geometrically consistent, with objects properly disappearing and reappearing as camera angles change.

This suggests the model has learned an implicit 3D world representation during training, similar to how neural radiance fields (NeRFs) reconstruct 3D scenes from 2D images. SeaDance lacks this emergent capability, producing camera movements that sometimes violate 3D geometry.
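“Physically plausible” parallax has a simple reference point in the pinhole camera model: when the camera translates sideways, a static point’s on-screen shift is inversely proportional to its depth. The focal length and distances below are arbitrary example values.

```python
def parallax_shift_px(focal_length_px, camera_shift_m, depth_m):
    """On-screen shift (pixels) of a static point when a pinhole camera
    translates sideways by `camera_shift_m` metres."""
    return focal_length_px * camera_shift_m / depth_m


near = parallax_shift_px(1000.0, 0.1, 2.0)    # object 2 m away
far = parallax_shift_px(1000.0, 0.1, 20.0)    # object 20 m away
print(round(near, 1), round(far, 1))  # 50.0 5.0
```

An object ten times farther away should shift a tenth as much, which is the ratio a generated tracking shot has to preserve to look geometrically correct.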

Reduced Artifacts and Hallucinations

HappyHorse’s artifact rate is dramatically lower: independent testing shows temporal artifacts (warping, morphing, flickering) occur in just 3.2% of generated frames versus SeaDance’s 11.7%. When artifacts do appear, they’re typically subtle and confined to edge regions rather than affecting primary subjects.

The hallucination rate for unexpected elements (objects appearing/disappearing, impossible physics) is similarly reduced: 1.8% of sequences versus SeaDance’s 7.3%. For professional creators, this reliability difference is production-critical because fewer generations end up unusable.

Practical Implications for AI Video Creators

Workflow Integration

For creators currently using SeaDance in production pipelines, HappyHorse offers immediate advantages:

Reduced iteration cycles: Higher prompt adherence means achieving desired results in 60% fewer generations, dramatically accelerating creative iteration.

Extended sequence capability: The ability to maintain coherence across 480+ frames enables generating 20-second clips as single sequences rather than stitching multiple 4-second segments.

Better camera control: HappyHorse’s spatial understanding makes it more responsive to camera movement instructions (“slow dolly zoom,” “crane up,” “tracking shot”) with cinematically appropriate results.

Creative Possibilities

HappyHorse’s capabilities enable previously impractical creative approaches:

Complex physics scenarios: Realistic water, fire, smoke, and cloth simulation that was hit-or-miss with SeaDance becomes reliably achievable.

Multi-character scenes: HappyHorse maintains distinct character identities in crowded scenes where SeaDance would merge or swap character features.

Narrative continuity: Extended coherence enables short narrative sequences where characters perform multi-step actions (walking through a door, picking up an object, reacting to an event) within a single generation.

Learning Curve Considerations

Paradoxically, HappyHorse may have a steeper initial learning curve than SeaDance. Its superior prompt adherence means you must be more precise with instructions; there’s less “auto-correction” where the model intuits your intent despite vague prompting.

Effective HappyHorse prompting requires:

Explicit spatial relationships: Specify exactly where objects should be positioned relative to each other.

Motion direction clarity: State movement direction and speed explicitly rather than assuming the model will choose appropriately.

Style consistency: HappyHorse takes style descriptors very literally, so mixing incompatible styles (“photorealistic cyberpunk watercolor”) produces exactly what you ask for: potentially incoherent results.

Cost-Benefit Analysis

For professional creators, HappyHorse’s efficiency gains translate directly to cost savings:

- 35% faster generation means 35% lower compute costs per clip

- 60% fewer iterations to achieve desired results means each finalized clip needs only about 40% as many generations

- Combined effect: 0.65 × 0.40 ≈ 0.26 of the original cost, or approximately a 74% cost reduction per finalized clip
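Independent savings like these compound multiplicatively rather than adding up. A short sketch, using the 35% and 60% figures above as inputs (the function name is illustrative):

```python
def combined_cost_fraction(time_saving, iteration_saving):
    """Fraction of the original cost that remains when each generation is
    cheaper by `time_saving` and fewer generations are needed by
    `iteration_saving`."""
    return (1.0 - time_saving) * (1.0 - iteration_saving)


remaining = combined_cost_fraction(0.35, 0.60)
print(round(remaining, 2))        # 0.26
print(round(1.0 - remaining, 2))  # 0.74
```

This assumes cost scales linearly with GPU time and that the two savings are independent, which may not hold under every pricing model.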

For hobbyists on consumer hardware, HappyHorse’s memory efficiency is the game-changer: it enables 4K generation on 24GB GPUs, where SeaDance’s 26GB requirement pushes users onto 48GB-class enterprise hardware.

The Competitive Landscape Going Forward

HappyHorse’s emergence raises critical questions about the video AI competitive landscape:

Who developed HappyHorse? The model’s mysterious origin (no corporate backing, no academic papers, minimal documentation) suggests either a stealth startup or independent researchers who’ve made breakthrough discoveries.

Can SeaDance respond? SeaDance’s developers will undoubtedly study HappyHorse’s capabilities and attempt to incorporate similar techniques. However, if HappyHorse’s advantages stem from fundamental architectural differences, catching up may require rebuilding from scratch rather than incremental updates.

What comes next? If HappyHorse represents the new baseline, where do video AI capabilities go from here? Likely directions include extended temporal coherence (minute-long sequences), interactive control (steering generation mid-process), and true 3D scene control.

Conclusion: A New Era in Video AI

HappyHorse 1.0’s rapid displacement of SeaDance 2.0 signals that video AI has entered a new competitive phase. The gap between model generations is widening: improvements are no longer incremental percentages but categorical capability leaps.

For AI video creators, this creates both opportunity and challenge. The opportunity: access to dramatically superior tools that enable creative visions previously impractical. The challenge: keeping pace with rapidly evolving capabilities requires continuous learning and workflow adaptation.

The most important lesson from HappyHorse’s emergence may be this: in generative AI, today’s industry leader can become tomorrow’s legacy system in a matter of weeks. The tools that feel cutting-edge today are evolutionary stepping stones toward capabilities we’re only beginning to imagine.

For creators willing to embrace this rapid evolution, HappyHorse represents not just a better tool, but a glimpse of video AI’s near-term trajectory: toward models that don’t just generate frames, but truly understand and simulate the visual world.

Frequently Asked Questions

Q: What are the main technical differences between HappyHorse 1.0 and SeaDance 2.0?

A: HappyHorse uses a hybrid diffusion transformer architecture with simultaneous spatial-temporal processing, while SeaDance relies on sequential processing with a U-Net backbone. HappyHorse also implements adaptive step scheduling, optical flow integration, and achieves superior frame coherence (94.7 vs 89.2), motion realism (8.9/10 vs 7.4/10), and prompt adherence (91.3% vs 78.6%).

Q: Can HappyHorse run on consumer GPUs like the RTX 4090?

A: Yes. HappyHorse requires only 18GB VRAM for 4K generation compared to SeaDance’s 26GB requirement, making it accessible on consumer hardware like the RTX 4090 (24GB). This is due to HappyHorse’s 16x latent compression versus SeaDance’s 8x compression.

Q: How much faster is HappyHorse compared to SeaDance 2.0?

A: HappyHorse generates 4-second clips (96 frames at 24fps) in approximately 47 seconds on an H100 GPU versus SeaDance’s 73 seconds, a 35% speed advantage. Combined with 60% fewer iterations needed due to better prompt adherence, total time-to-final-result is reduced by approximately 74% (0.65 × 0.40 ≈ 0.26 of the original).

Q: What is temporal consistency and why does HappyHorse excel at it?

A: Temporal consistency refers to maintaining object identity, position, and appearance coherently across video frames. HappyHorse excels through optical flow integration, multi-scale temporal attention, and a 3D transformer architecture that processes spatial and temporal dimensions simultaneously. It maintains character identity across 480 frames, whereas SeaDance begins to drift at around 300 frames.

Q: What is seed parity and why does it matter for video creators?

A: Seed parity refers to the ability to reproduce identical results using the same seed value and prompt. HappyHorse achieves 99.2% seed consistency versus SeaDance’s 87%, which is critical for iterative workflows where creators need to make small prompt adjustments while maintaining overall scene composition and character consistency.