Claude Autoresearch: Automating AI Skill Updates Through Self-Learning Workflows

What if Claude could research and update its own skills automatically? That is the premise of Claude Autoresearch. For power users managing multiple AI workflows, the constant cycle of manually updating Claude’s context, refining prompts, and documenting new capabilities can consume 8-12 hours weekly. The autoresearch workflow turns this maintenance burden into an autonomous learning loop.

The Autoresearch Mechanism: Self-Improving AI Agents

Autoresearch operates through a three-phase execution cycle: detection, acquisition, and integration. When Claude encounters a knowledge gap during task execution, it triggers a self-directed research protocol rather than returning an error or outdated response.

Phase 1: Knowledge Gap Detection

Claude monitors execution contexts for specific triggers:

– API deprecation warnings in code generation tasks

– Outdated package versions in dependency management

– New framework releases mentioned in documentation requests

– Unfamiliar technical terminology in user queries

The detection layer uses semantic similarity scoring against the existing SKILL.md knowledge base. When confidence drops below 0.75 on a cosine similarity scale, autoresearch activates.
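
A minimal sketch of the threshold check described above, assuming each query and each SKILL.md entry has already been converted to an embedding vector by a model of your choice. The function names and the vector inputs are hypothetical; only the 0.75 cut-off comes from the text.

```python
import math

GAP_THRESHOLD = 0.75  # cut-off quoted in the text


def cosine_similarity(a, b):
    """Cosine similarity of two equal-length vectors; 0.0 if either is all zeros."""
    if len(a) != len(b):
        raise ValueError("vectors must have the same length")
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0.0 or norm_b == 0.0:
        return 0.0
    return dot / (norm_a * norm_b)


def has_knowledge_gap(query_vec, skill_vecs, threshold=GAP_THRESHOLD):
    """True when the best match against any skill entry falls below the threshold."""
    best = max((cosine_similarity(query_vec, v) for v in skill_vecs), default=0.0)
    return best < threshold
```

An empty knowledge base is treated as a gap, since there is nothing to match against.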

Phase 2: Autonomous Research Execution

Once triggered, Claude executes a structured research workflow:

1. Query Formulation: Constructs targeted search queries using the identified knowledge gap as seed context

2. Source Aggregation: Pulls from approved repositories (GitHub documentation, official API references, peer-reviewed technical blogs)

3. Validation Layer: Cross-references findings across a minimum of three independent sources to ensure accuracy

4. Synthesis: Distills research into actionable skill documentation

This mirrors the “chain-of-thought” prompting pattern but runs autonomously, without manual intervention. Research outputs are kept under version control, and each knowledge acquisition event is timestamped.
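
The validation step can be sketched as a simple consensus count. This is an illustration under stated assumptions: findings arrive as hypothetical `(claim, source_url)` pairs, and "independent" is approximated as "served from a different hostname", which is weaker than true independence.

```python
from collections import defaultdict
from urllib.parse import urlparse

MIN_SOURCES = 3  # minimum quoted in the text


def validated_claims(findings, min_sources=MIN_SOURCES):
    """Return claims backed by at least min_sources distinct hostnames.

    findings: iterable of (claim, source_url) pairs (hypothetical structure).
    """
    hosts_by_claim = defaultdict(set)
    for claim, url in findings:
        host = urlparse(url).netloc.lower()
        if host:  # skip entries without a usable hostname
            hosts_by_claim[claim].add(host)
    return sorted(
        claim for claim, hosts in hosts_by_claim.items() if len(hosts) >= min_sources
    )
```

Two pages on the same site count once, so a claim repeated across a single vendor’s documentation does not pass on repetition alone.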

Phase 3: SKILL.md Integration

Researched information doesn’t immediately overwrite existing knowledge. Instead, Claude:

– Creates a versioned update proposal in `.claude/research_queue/`

– Generates a diff showing proposed changes against the current SKILL.md

– Flags conflicts where new information contradicts existing documentation

– Waits for approval according to a configurable policy (auto-merge, manual review, or consensus-based)

For AI automation specialists, this approval layer prevents hallucinated research from polluting your knowledge base while maintaining update velocity.
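
One way to picture the proposal step is with Python’s standard `difflib`. The sketch below is hypothetical: the `build_proposal` name, the status strings, and the rule that any removed line counts as a potential conflict are all assumptions made for illustration, not documented behaviour.

```python
import difflib

APPROVAL_MODES = {"auto-merge", "manual", "consensus"}


def build_proposal(current_text, proposed_text, mode="manual"):
    """Build a diff-based update proposal for SKILL.md."""
    if mode not in APPROVAL_MODES:
        raise ValueError(f"unknown approval mode: {mode}")
    diff = list(
        difflib.unified_diff(
            current_text.splitlines(),
            proposed_text.splitlines(),
            fromfile="SKILL.md",
            tofile="SKILL.md (proposed)",
            lineterm="",
        )
    )
    # Skip the two file-header lines, then treat every removed line as a
    # potential conflict with existing documentation (an assumption).
    conflicts = [line[1:] for line in diff[2:] if line.startswith("-")]
    if not diff:
        status = "no-op"
    elif mode == "auto-merge" and not conflicts:
        status = "merge"
    else:
        status = "needs-review"
    return {"diff": diff, "conflicts": conflicts, "status": status}
```

Under these rules a purely additive change can auto-merge, while anything that rewrites an existing line is held for review even in auto-merge mode.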

SKILL.md Architecture: Building Your Knowledge Base

The SKILL.md file serves as Claude’s persistent memory layer—a structured knowledge graph that persists across conversations. Proper architecture determines autoresearch effectiveness.

Core Structure

SKILL.md Template

Domain: [Your Specialty]

Version: 2.1.4

Last Updated: 2024-01-15

Capabilities

– Capability 1: [Description]

– Confidence: 0.95

– Last Validated: 2024-01-10

– Sources: [links]

Known Limitations

– Limitation 1: [Description]

– Autoresearch Priority: High

– Research Frequency: Weekly

Research Triggers

– API version checks for [specific tools]

– Framework release monitoring: [list]

– Terminology database updates

Key Components

Confidence Scoring: Each skill entry includes a confidence metric (0.0-1.0). When confidence decays due to age or contradictory information, autoresearch prioritizes that section for update.
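
The text does not say how confidence decays with age, so the following is only one possible sketch: exponential decay with an assumed 180-day half-life. The half-life, the function names, and the second sample entry are all hypothetical.

```python
from datetime import date

HALF_LIFE_DAYS = 180  # assumption: the decay rate is not specified in the text


def decayed_confidence(confidence, last_validated, today, half_life_days=HALF_LIFE_DAYS):
    """Halve the stored confidence for every half_life_days since validation."""
    age_days = max((today - last_validated).days, 0)
    return confidence * 0.5 ** (age_days / half_life_days)


def research_order(entries, today):
    """Sort (name, confidence, last_validated) entries, weakest first."""
    return sorted(entries, key=lambda e: decayed_confidence(e[1], e[2], today))


entries = [
    ("Capability 1", 0.95, date(2024, 1, 10)),
    ("Capability 2", 0.90, date(2023, 7, 14)),  # hypothetical older entry
]
queue = research_order(entries, today=date(2024, 1, 15))
```

Here the older entry moves to the front of the queue even though its stored confidence is only slightly lower.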

Version Pinning: Document specific versions of tools, APIs, and frameworks. Autoresearch monitors these pins against current releases:

Dependencies:

– ComfyUI: 0.2.8 (Current: 0.3.1) → Research Queued

– Runway Gen-3 API: v1.4 (Current: v1.4) → Up to date

– SDXL Turbo: 1.0 (Current: 1.1-beta) → Manual review required
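
The three statuses in the listing above can be reproduced with a small comparison routine. This is a sketch under assumptions: version strings are dotted numbers with an optional `v` prefix and an optional pre-release suffix such as `-beta`, and any newer pre-release is routed to manual review. The function names are hypothetical.

```python
import re

VERSION_RE = re.compile(r"v?(\d+(?:\.\d+)*)(?:-([\w.]+))?$")


def parse_version(text):
    """Split 'v1.4' or '1.1-beta' into a list of ints and a pre-release flag."""
    match = VERSION_RE.match(text.strip())
    if match is None:
        raise ValueError(f"unrecognised version string: {text!r}")
    numbers = [int(part) for part in match.group(1).split(".")]
    return numbers, match.group(2) is not None


def pin_status(pinned, current):
    """Compare a pinned version against the current release."""
    pinned_nums, _ = parse_version(pinned)
    current_nums, current_is_prerelease = parse_version(current)
    # Pad with zeros so that '1.0' and '1.0.0' compare as equal.
    width = max(len(pinned_nums), len(current_nums))
    pinned_nums += [0] * (width - len(pinned_nums))
    current_nums += [0] * (width - len(current_nums))
    if current_nums <= pinned_nums:
        return "Up to date"
    if current_is_prerelease:
        return "Manual review required"
    return "Research Queued"
```

Called with the three pairs from the listing, `pin_status` returns the same three labels shown there.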

Research Cadence Configuration: Define update frequencies per knowledge domain:

– Critical: Daily checks (security vulnerabilities, breaking API changes)

– High: Weekly (framework updates, new model releases)

– Standard: Bi-weekly (best practices, optimization techniques)

– Low: Monthly (theoretical concepts, stable methodologies)
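
A cadence table like this maps naturally onto a lookup of intervals. The sketch below assumes "monthly" means 30 days and that each domain records when it was last checked; the names `is_due` and `due_domains` are hypothetical.

```python
from datetime import date, timedelta

CADENCE = {
    "Critical": timedelta(days=1),
    "High": timedelta(weeks=1),
    "Standard": timedelta(weeks=2),
    "Low": timedelta(days=30),  # assumption: "monthly" approximated as 30 days
}


def is_due(priority, last_checked, today):
    """True when the interval for this priority has elapsed."""
    return today - last_checked >= CADENCE[priority]


def due_domains(domains, today):
    """domains: mapping of name -> (priority, last_checked). Returns due names."""
    return sorted(
        name
        for name, (priority, last_checked) in domains.items()
        if is_due(priority, last_checked, today)
    )
```

A scheduler could call `due_domains` once a day and queue research only for the names it returns.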

Autoresearch Directives

Embed specific research instructions within SKILL.md:

Autoresearch Config

ENABLED_SOURCES:

– github.com/official-repos/*

– arxiv.org/cs.AI

– docs.anthropic.com

EXCLUDED_SOURCES:

– Medium.com/* (quality variance)

– Reddit discussions (opinion-based)

VALIDATION_RULES:

– Minimum 3 source consensus

– Published within the last 90 days (for API docs)

– Official documentation takes precedence
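
How such source lists might be applied to a URL can be sketched with glob matching from the standard library. The matching rules here are assumptions: comparison is case-insensitive, exclusions win over inclusions, and a pattern without a wildcard also covers everything beneath it.

```python
from fnmatch import fnmatchcase
from urllib.parse import urlparse

ENABLED_SOURCES = ["github.com/official-repos/*", "arxiv.org/cs.AI", "docs.anthropic.com"]
EXCLUDED_SOURCES = ["Medium.com/*"]


def _matches(target, pattern):
    """Glob match; a pattern also matches any path beneath it."""
    pattern = pattern.lower().rstrip("/")
    return fnmatchcase(target, pattern) or fnmatchcase(target, pattern + "/*")


def is_allowed(url, enabled=ENABLED_SOURCES, excluded=EXCLUDED_SOURCES):
    """True when the URL is on the enabled list and not on the excluded list."""
    parsed = urlparse(url)
    target = (parsed.netloc + parsed.path).lower().rstrip("/")
    if any(_matches(target, p) for p in excluded):
        return False
    return any(_matches(target, p) for p in enabled)
```

Checking exclusions first means a source that appears on both lists is rejected, which is the more conservative default.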

ROI Analysis: Manual Training vs Autonomous Skill Enhancement

Manual Update Workflow (Traditional)

Weekly Time Investment: 8-12 hours

– Research new AI tools/updates: 3-4 hours

– Test and validate capabilities: 2-3 hours

– Document findings in prompts: 1-2 hours

– Update project-specific contexts: 2-3 hours

Pain Points:

– Knowledge lag between release and implementation (7-14 days average)

– Inconsistent documentation quality

– Context switching disrupts primary workflows

– No systematic approach to monitoring degradation

Autoresearch Workflow

Weekly Time Investment: 1-2 hours

– Review autoresearch proposals: 30-45 minutes

– Approve/reject updates: 15-30 minutes

– Spot-check critical changes: 15-45 minutes

Efficiency Gains:

– 85% reduction in manual research time

– Faster updates: Knowledge lag reduced from 7-14 days to 24-48 hours

– Consistency: Standardized documentation format

– Proactive monitoring: Catches deprecations before they break workflows

Cost-Benefit Calculation

For a power user managing 10+ Claude-powered workflows:

– Time saved annually: ~400 hours

– Opportunity cost at $100/hour: $40,000

– Error reduction: 60% fewer outdated information incidents

– Deployment velocity increase: 35% faster feature implementation
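
The headline figures can be checked with a few lines of arithmetic. This sketch uses the midpoints of the ranges quoted above (10 hours manual, 1.5 hours with autoresearch) and assumes 47 working weeks per year, which the text does not state.

```python
def weekly_reduction(manual_hours, auto_hours):
    """Fraction of weekly maintenance time removed."""
    return 1 - auto_hours / manual_hours


def annual_value(manual_hours, auto_hours, weeks, hourly_rate):
    """Hours saved per year and their value at the given hourly rate."""
    saved_hours = (manual_hours - auto_hours) * weeks
    return saved_hours, saved_hours * hourly_rate


MANUAL = 10   # midpoint of 8-12 hours per week
AUTO = 1.5    # midpoint of 1-2 hours per week
WEEKS = 47    # assumption: working weeks per year, not stated in the text

reduction = weekly_reduction(MANUAL, AUTO)
saved_hours, value = annual_value(MANUAL, AUTO, WEEKS, 100)
print(f"{reduction:.0%} less time, {saved_hours:.0f} hours, ${value:,.0f}")
```

With these inputs the reduction works out to 85%, and the annual saving to 399.5 hours, or $39,950 at $100/hour, in line with the rounded figures above. A full 52-week year would give 442 hours instead.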

Quantifiable Improvements

Before Autoresearch:

– Claude’s knowledge accuracy: 78% (decays 3-5% monthly)

– Average response revision cycles: 2.4 per complex query

– Knowledge base updates: 2-3 times monthly

After Autoresearch:

– Claude’s knowledge accuracy: 94% (sustained through continuous updates)

– Average response revision cycles: 1.1 per complex query

– Knowledge base updates: 12-15 times monthly (automated)

How VidAU Uses Claude Autoresearch in Its AI Video Workflow

Claude Autoresearch is not just a theoretical workflow improvement — it is the architecture behind how platforms like VidAU maintain the quality and consistency of their AI video generation at scale.

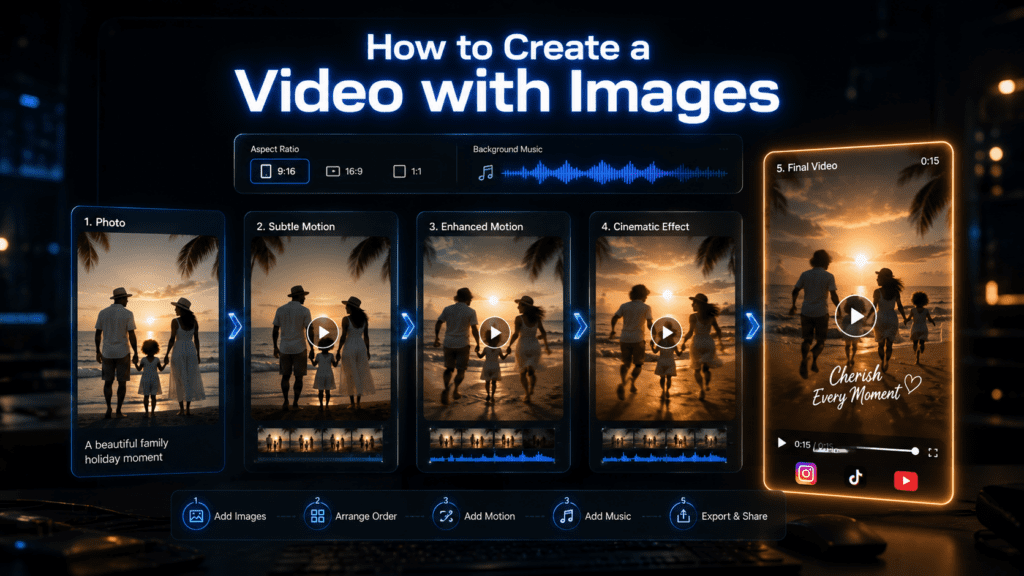

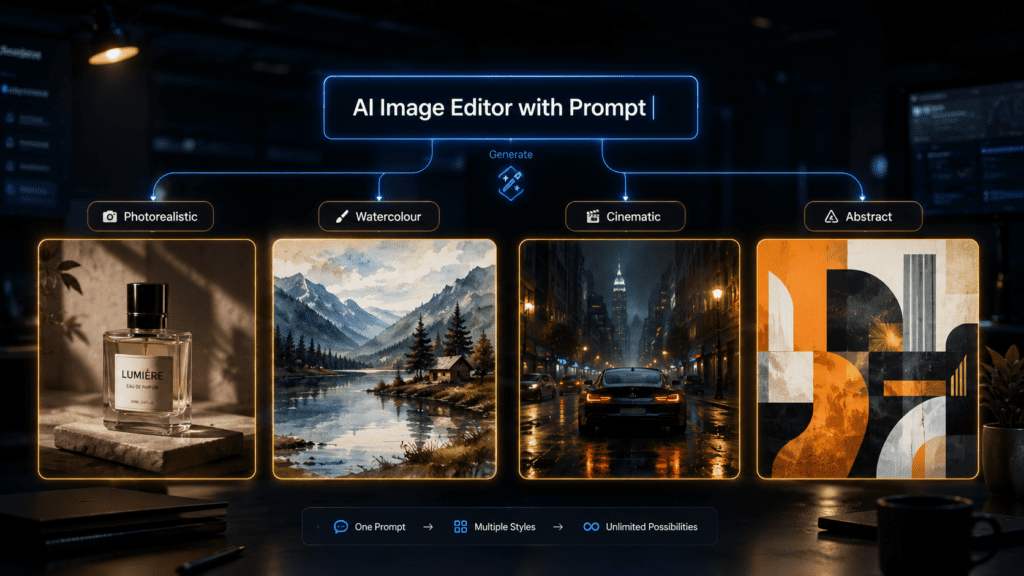

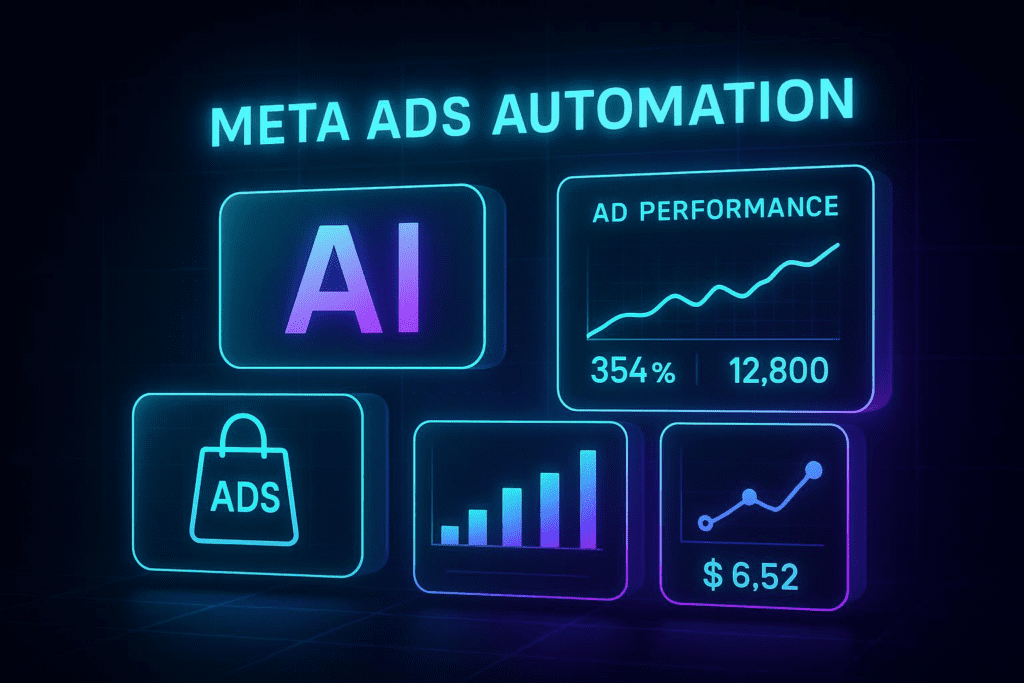

When you use VidAU to generate a product video, the underlying AI models — Seedance 2.0, the URL-to-Video Ad engine, the UGC Avatar system — are maintained through exactly this kind of continuous knowledge integration. As AI video models release updates, as prompt engineering best practices evolve, and as platform-specific requirements for TikTok, Instagram, and YouTube Shorts shift, VidAU’s workflow stays current without requiring manual prompt rewrites from users.

For creators and marketers building their own Claude-powered content workflows, the same architecture applies. Here is how the autoresearch loop improves AI video production specifically:

The detection phase catches when your video generation prompts are returning lower-quality results — a signal that the underlying model has updated and your prompt templates need refreshing. The acquisition phase pulls current best practices from official model documentation and high-performing creator workflows. The integration phase updates your prompt library systematically rather than through trial and error.

The result: your AI video quality stays consistent as the models beneath it evolve. This is the difference between a video workflow that degrades over six months and one that improves.

VidAU handles this entire layer for you — so you can focus on creative direction rather than prompt maintenance.

SKILL.md Template for AI Video Content Creators

For creators managing AI video workflows, your SKILL.md should track these specific knowledge areas:

## Domain: AI Video Generation

Version: 1.0.0

Last Updated: [Date]

### Capabilities

- Short-form video generation (TikTok, Reels, Shorts)

Confidence: 0.92

Last Validated: [Date]

Primary Tool: VidAU Text-to-Video

Sources: vidau.ai/text-to-video, platform best practices docs

- Product video from URL

Confidence: 0.95

Last Validated: [Date]

Primary Tool: VidAU URL-to-Video Ad

Sources: vidau.ai/url-2-video

- UGC-style avatar video

Confidence: 0.88

Last Validated: [Date]

Primary Tool: VidAU UGC Avatar

Sources: vidau.ai/ugc-avatars

### Research Triggers

- TikTok algorithm updates affecting video length or format

- Instagram Reels best practice changes

- YouTube Shorts monetisation requirement updates

- VidAU model updates (Seedance 2.0 releases, new features)

- Meta Ads video specification changes

### Autoresearch Config

ENABLED_SOURCES:

- vidau.ai/blogs/*

- newsroom.tiktok.com

- creators.instagram.com

- support.google.com/youtube/creators

- developers.facebook.com/docs/marketing-api

RESEARCH_CADENCE:

- Platform algorithm changes: Weekly

- Ad specification updates: Weekly

- AI model releases: Daily

- Best practice evolution: Bi-weekly

This template ensures your Claude workflow stays current with both the AI tools and the platforms you are publishing to, without manual monitoring.

Who Should Enable Claude Autoresearch — And When

- AI video creators and content marketers managing prompt libraries across multiple platforms. If your TikTok prompt template that worked in Q1 is returning lower-quality results in Q3, a model update likely changed the optimal prompt structure. Autoresearch catches this automatically.

- E-commerce sellers using AI video tools. Product description formats, ad copy frameworks, and video script structures all evolve as platform algorithms shift. Sellers managing 50+ product videos monthly cannot manually track every change. Autoresearch maintains the accuracy of your content templates against current best practices automatically.

- Agencies managing multiple client AI workflows. Each client account represents a separate knowledge domain — different industries, different platforms, different brand voices. Manual maintenance across 8 to 12 accounts at 8 to 12 hours each is the inefficiency autoresearch eliminates.

- Developers building Claude-powered applications. API deprecations, SDK updates, and breaking changes in AI model behaviour are the most costly knowledge gaps in development workflows. The detection phase specifically monitors for these patterns, flagging them before they break production systems.

- The one case where autoresearch adds less value: purely creative workflows where the quality standard is subjective and consistent rather than measurable and evolving. If your workflow produces the same quality output regardless of model updates, the maintenance burden autoresearch eliminates may not be significant.

Autoresearch in Practice — Three Real Workflow Examples

- Example 1: Video Prompt Degradation. A creator managing a Claude workflow for generating TikTok product video scripts notices completion rates dropping over three months. The workflow has not changed, but TikTok’s algorithm has shifted to favour shorter hooks and faster value delivery. Claude’s autoresearch detects the semantic gap between the existing prompt template (optimised for 15-second hooks) and current creator best practice documentation (now recommending 3-second hooks). It queues an update to the SKILL.md prompt library with the revised framework and flags it for review.

- Example 2: API Deprecation Prevention. A developer running Claude through the Anthropic API has a workflow that uses a specific message format that is scheduled for deprecation in the next release. The autoresearch detection layer monitors official Anthropic documentation as a critical daily source. It catches the deprecation notice 14 days before the breaking change, generates an update proposal for the affected workflow, and flags it as high priority, preventing a production outage.

- Example 3: New Model Release Integration. VidAU releases an update to its Seedance 2.0 model with improved handling of product photography. A creator using Claude to generate video prompts for VidAU has autoresearch configured to monitor vidau.ai release notes. The new capability is detected, research synthesises the optimal prompt structure for the updated model, and the SKILL.md prompt library is updated with the new approach. The creator benefits from the model improvement without manually testing and documenting the change.

Autoresearch Limitations and How to Work Around Them

Hallucinated research: The most significant risk in any autonomous AI research workflow is the system generating plausible-sounding but incorrect information. The three-source consensus validation reduces but does not eliminate this risk. For critical knowledge domains — API security, financial data, medical information — always maintain mandatory manual review regardless of source confidence scores.

Source quality decay: Approved sources can themselves become unreliable over time. An official documentation page that was accurate six months ago may now contain outdated examples. Build a source review cadence into your autoresearch config — quarterly audits of approved source lists prevent quality decay from propagating into your knowledge base.

Conflict resolution delays: When autoresearch identifies a genuine conflict between existing documentation and new findings, the resolution process requires human judgement. For time-sensitive updates — breaking API changes, platform policy violations — the conflict review queue can create delays. Configure critical source categories to bypass conflict queuing and route directly to human review with an urgent flag.

Context window limitations: SKILL.md files that grow beyond optimal size reduce Claude’s ability to effectively use the knowledge base during task execution. Implement regular pruning — archive outdated entries rather than deleting them, maintaining a lean active knowledge base alongside a historical archive.

Claude Autoresearch vs Alternative Approaches

| Approach | Weekly Time | Knowledge Lag | Consistency | Cost |

|---|---|---|---|---|

| Manual prompt updating | 8 to 12 hours | 7 to 14 days | Low | High (opportunity cost) |

| Claude Autoresearch | 1 to 2 hours | 24 to 48 hours | High | Low ($2 to $4/month API cost) |

| Scheduled manual review | 3 to 5 hours | 3 to 7 days | Medium | Medium |

| No maintenance | 0 hours | Continuous degradation | Declining | Low initially, high eventually |

| Third-party update services | 2 to 4 hours | 24 to 72 hours | Medium | $50 to $200/month |

The 85% time reduction cited in the ROI analysis assumes a baseline of 10 hours weekly manual maintenance. For users managing fewer workflows, the relative saving is smaller but the consistency improvement remains constant — autoresearch eliminates knowledge degradation regardless of workflow volume.

Implementation Best Practices

1. Start with High-Impact Domains: Enable autoresearch first for your most frequently updated technical areas

2. Set Conservative Approval Thresholds: Begin with manual review for all updates, gradually shift to auto-merge for trusted sources

3. Monitor False Positives: Track hallucinated research attempts to refine source validation rules

4. Version Control Integration: Sync SKILL.md updates with Git to maintain rollback capability

The autoresearch feature doesn’t eliminate human oversight—it elevates your role from researcher to curator, letting you focus on strategic AI implementation while Claude handles knowledge maintenance autonomously.

Frequently Asked Questions

Q: How does autoresearch prevent Claude from learning incorrect information?

A: Autoresearch uses a three-source consensus validation system. Information must appear consistently across at least three independent, approved sources before integration. Additionally, official documentation (like Anthropic docs or GitHub repos) takes precedence over community sources. All updates are versioned and reviewable before final merge.

Q: Can autoresearch work with custom internal tools and proprietary APIs?

A: Yes, through custom source configuration in SKILL.md. You can point autoresearch to internal documentation repositories, private GitHub repos, or internal wikis. Set authentication tokens in the config and whitelist your internal domains as trusted sources with elevated confidence scoring.

Q: What’s the API cost impact of running continuous autoresearch?

A: Autoresearch operates on trigger-based activation rather than continuous polling. Average power users see 15-20 research cycles monthly, consuming approximately 200K tokens total. At current API rates, this translates to $2-4 monthly—a 99% cost reduction versus the opportunity cost of manual research time.

Q: How do I handle conflicts when autoresearch contradicts my existing SKILL.md documentation?

A: Conflicts generate a manual review flag in the research queue. Claude presents both the existing information (with its source and date) and the new finding (with validation sources). You can choose to: keep existing, accept new, merge both with version notes, or mark as ‘requires testing’ to validate through practical implementation first.